Does Undetectable AI Work? My Honest Thoughts and Tests

How effective is Undetectable AI? Can it bypass AI detectors reliably? And is it even ethical to use? Those are the questions this article tackles.

John Angelo Yap

Updated August 27, 2025

Let’s cut straight to it: in 2025, AI detection tools are everywhere. Professors use them, companies rely on them, and publishers quietly run submissions through them before deciding what’s “real” and what’s “AI-generated.”

The problem? These detectors are inconsistent at best and flat-out wrong at worst. I’ve personally seen human-written essays flagged at 99% “AI,” while clunky ChatGPT paragraphs slipped through without a hiccup. That’s where bypass tools step in — trying to level the playing field by making AI-generated content sound more natural.

At the top of that list is Undetectable AI. It’s one of the most talked-about tools in the AI writing space right now. But the question is, does it actually work or is it just hype?

That’s what we’re digging into here. I’ll break down what Undetectable AI is, what it offers in 2025, and how it stacks up against other tools. Then, I’ll share my own hands-on testing.

What is Undetectable AI?

Undetectable AI is, at its core, an AI humanizer. It takes AI-generated text — whether that’s from ChatGPT, Claude, Gemini, any other model, or anything that reads like one — and rewrites it so it passes as human.

But in 2025, it’s more than just a bypasser. Over the last year, it has grown into a broader writing platform. Here’s some of what it offers now:

- AI Humanizer: The flagship feature. Paste text, hit convert, and get something that looks, feels, and reads like a person wrote it.

- Style Replicator: Paste samples of your own writing, and the tool learns your style. Perfect if you want your AI outputs to sound not just “human,” but like you.

- Smart Cover Letter Generator: Tailors professional cover letters to job posts, but in a way that avoids the usual AI clichés.

In short: it’s not just about slipping past detectors anymore. It’s about producing content that blends seamlessly with your actual writing voice.

Why People Use Undetectable AI in 2025

The use cases have only grown. Here are the most common reasons people turn to it today:

- Students – College and grad students still make up a huge portion of the user base. Detectors like GPTZero and Turnitin have been notorious for false positives, and no one wants to risk their academic standing over something they didn’t even do.

- Freelancers & Writers – Platforms like Upwork and Fiverr now quietly screen content. Writers who use AI for drafts rely on humanizers to make sure their submissions don’t get flagged.

- Job Seekers – With Smart Applier and the cover letter tool, Undetectable AI has become a go-to for people applying to dozens of jobs a week. The idea is simple: use AI without having applications look like a bot wrote them.

- Content Creators – Blogs, newsletters, and social posts are still king. And with Google updating its search guidelines to crack down on low-quality AI spam, tools like this help creators stay in the safe zone.

In other words: it’s not about cheating the system. It’s about avoiding the risk of being punished for using AI responsibly.

Undetectable AI vs. AI Detector

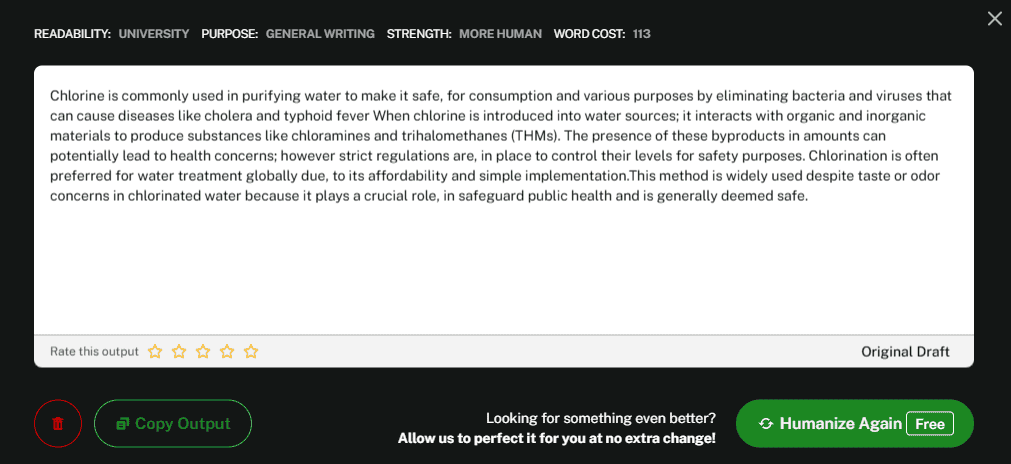

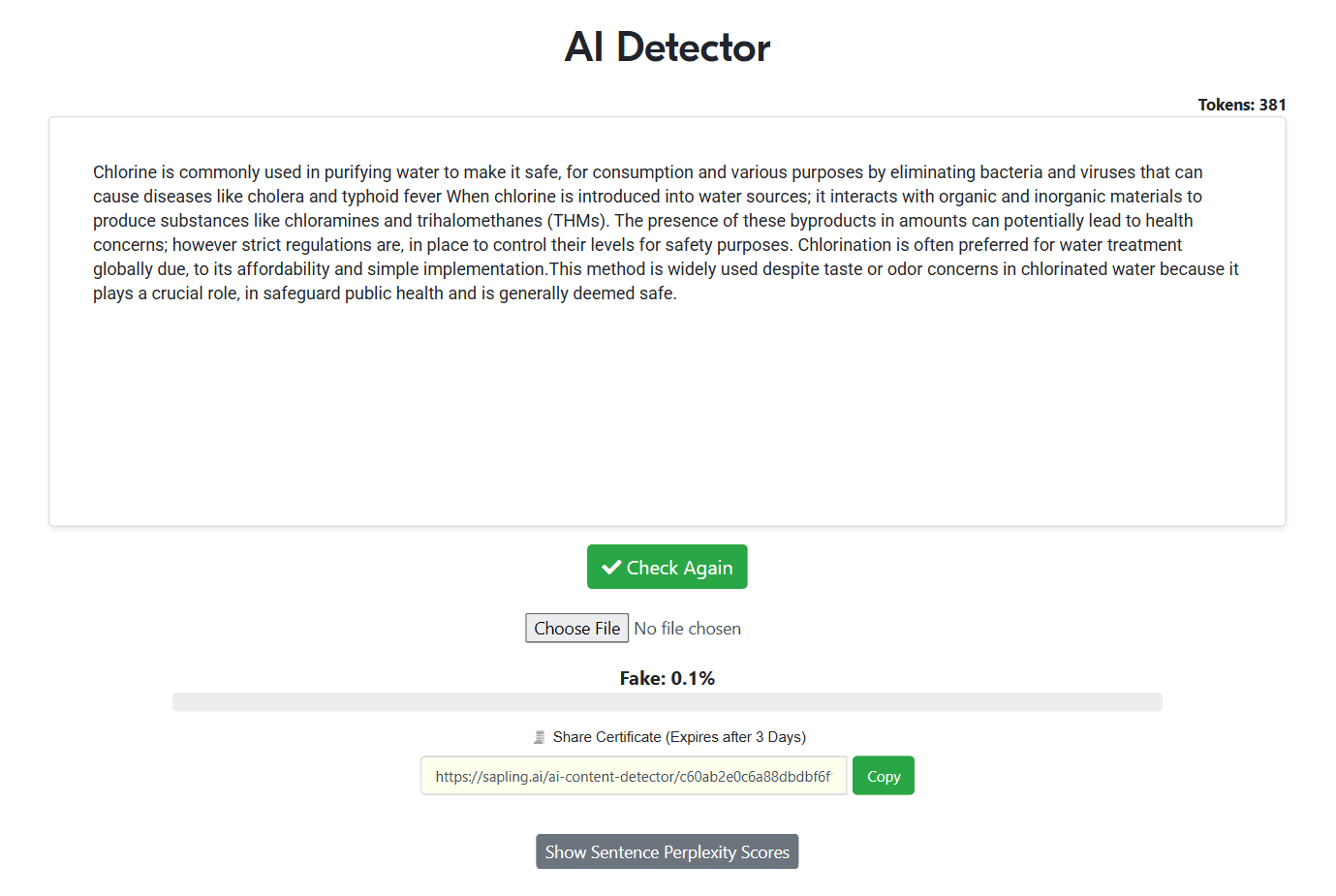

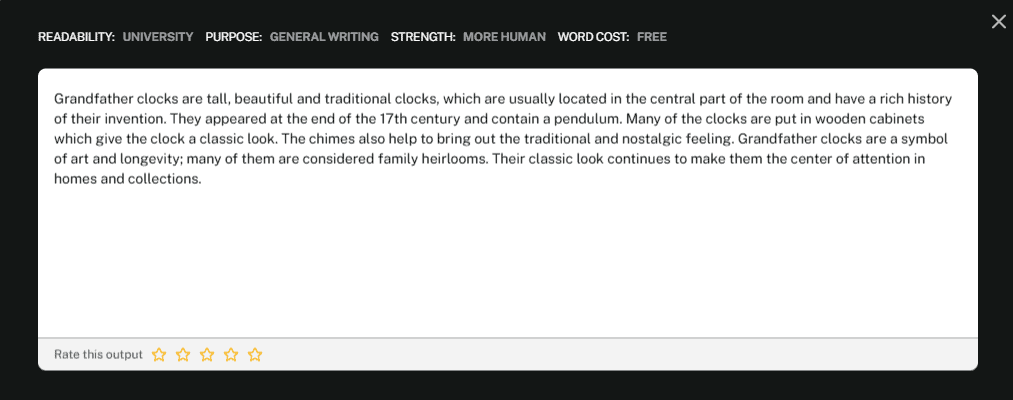

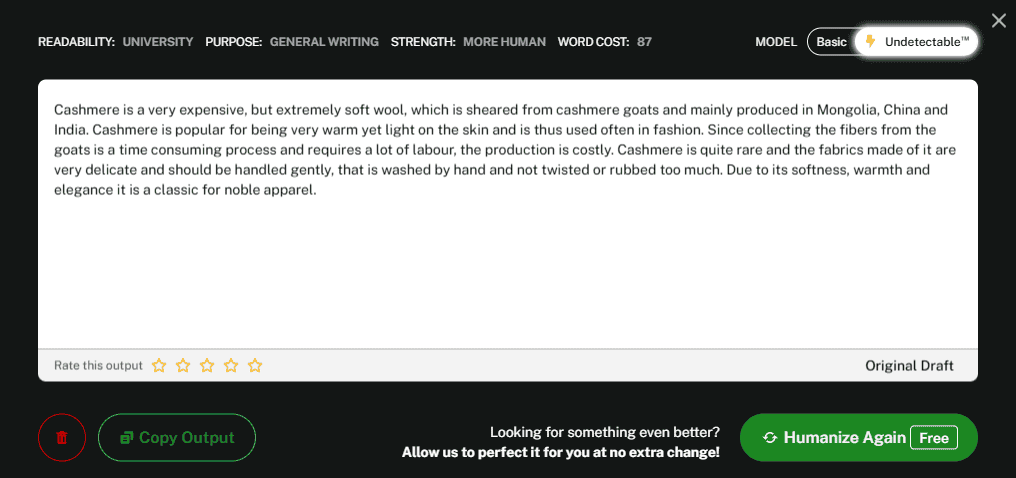

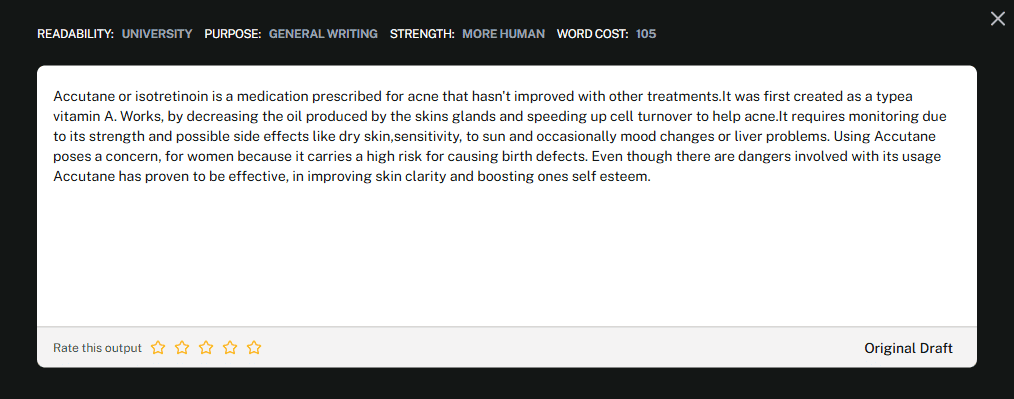

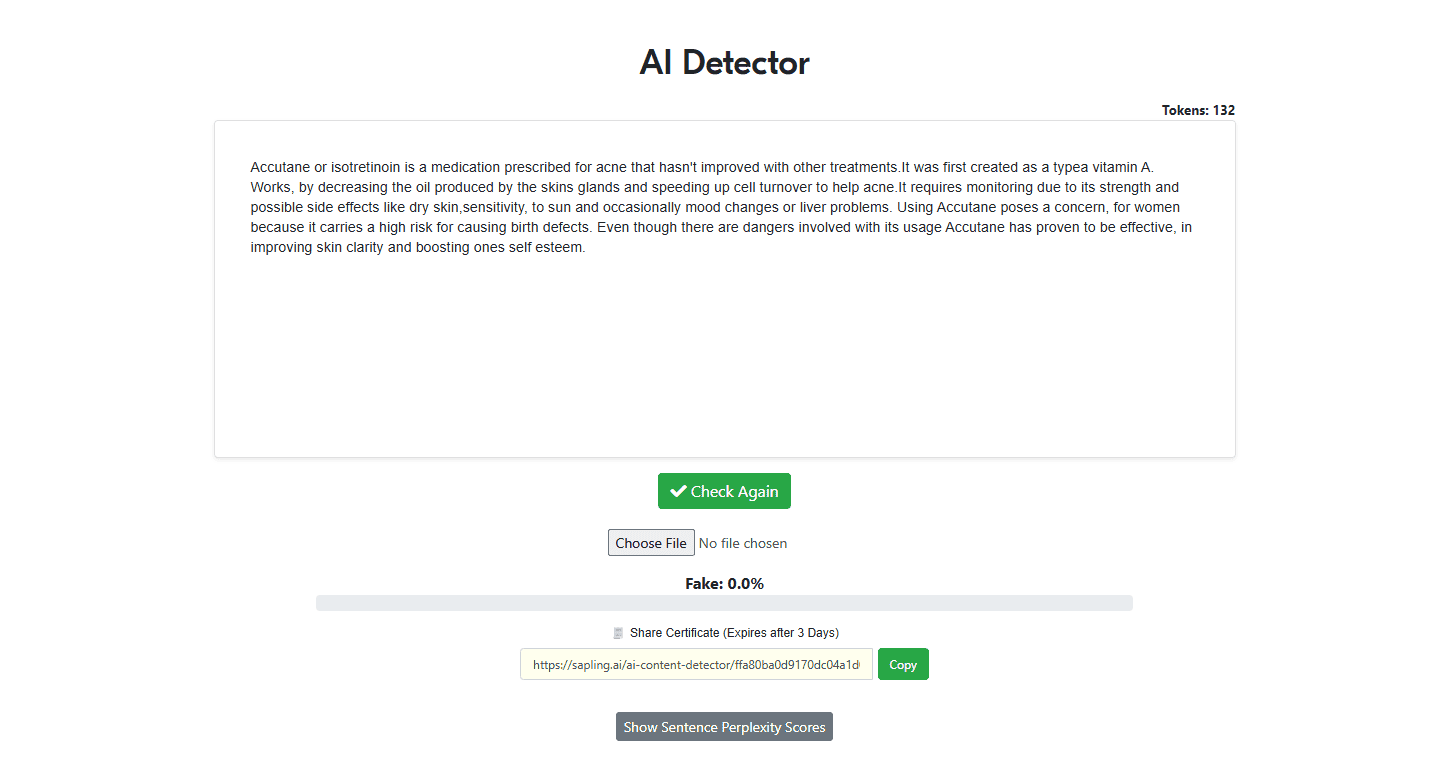

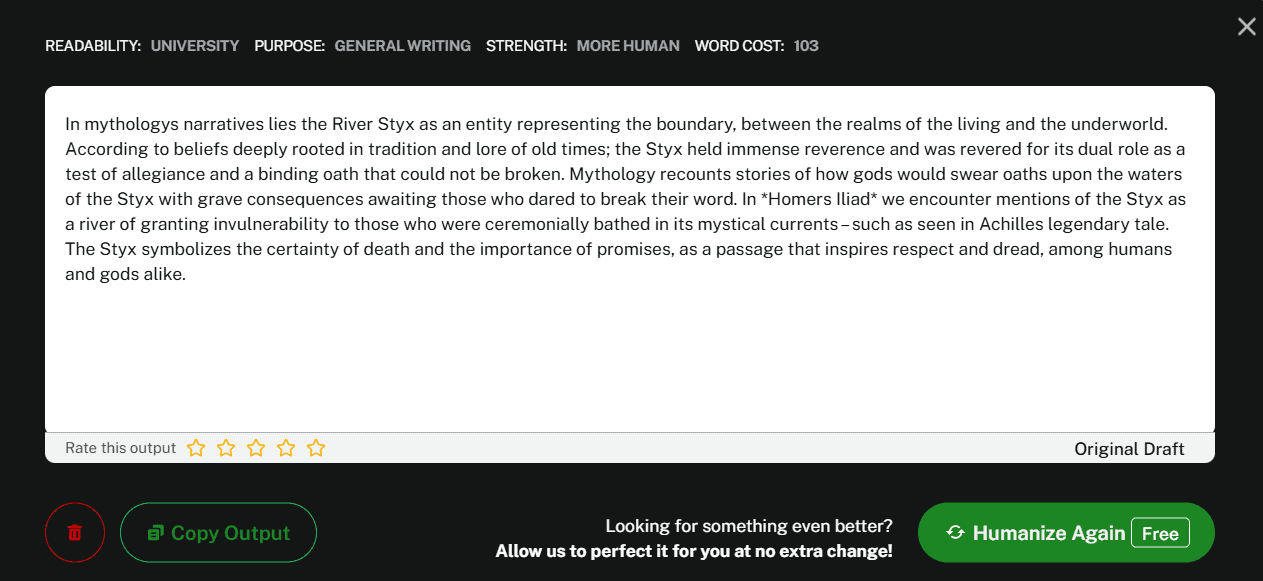

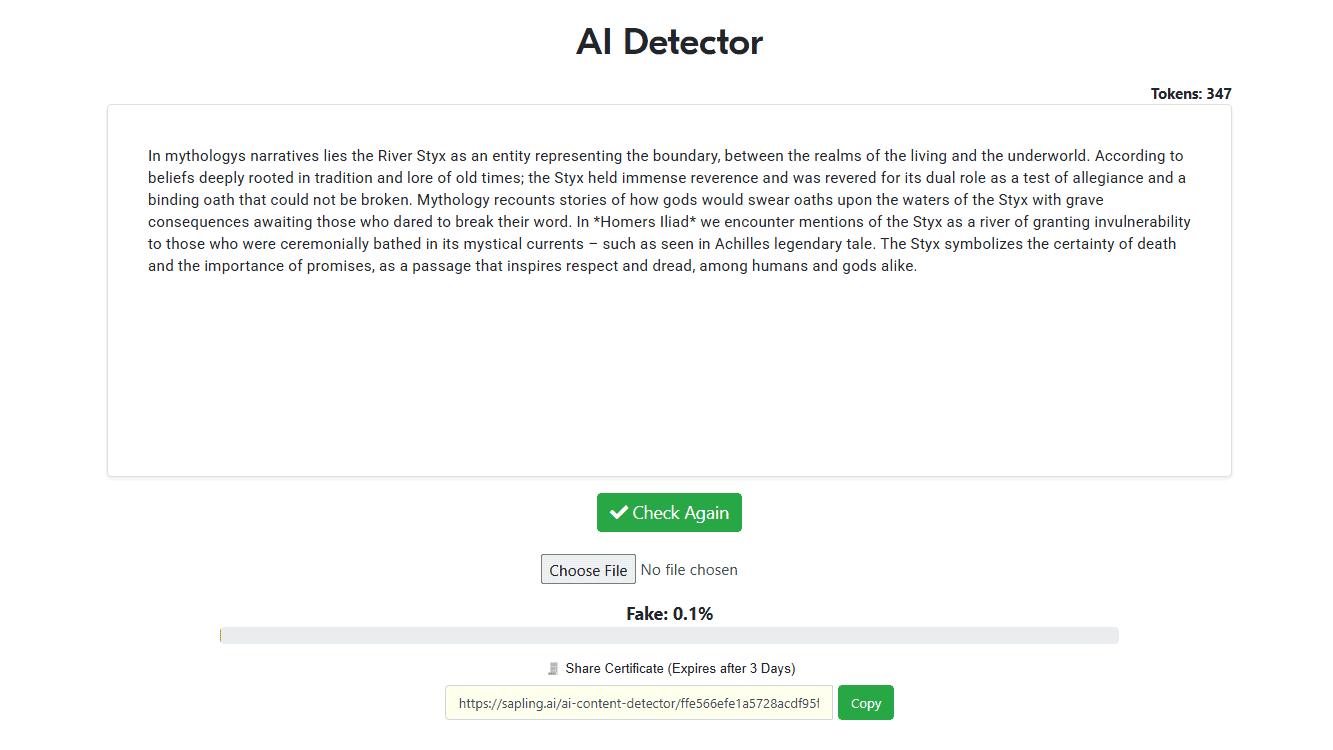

Test #1

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.1%

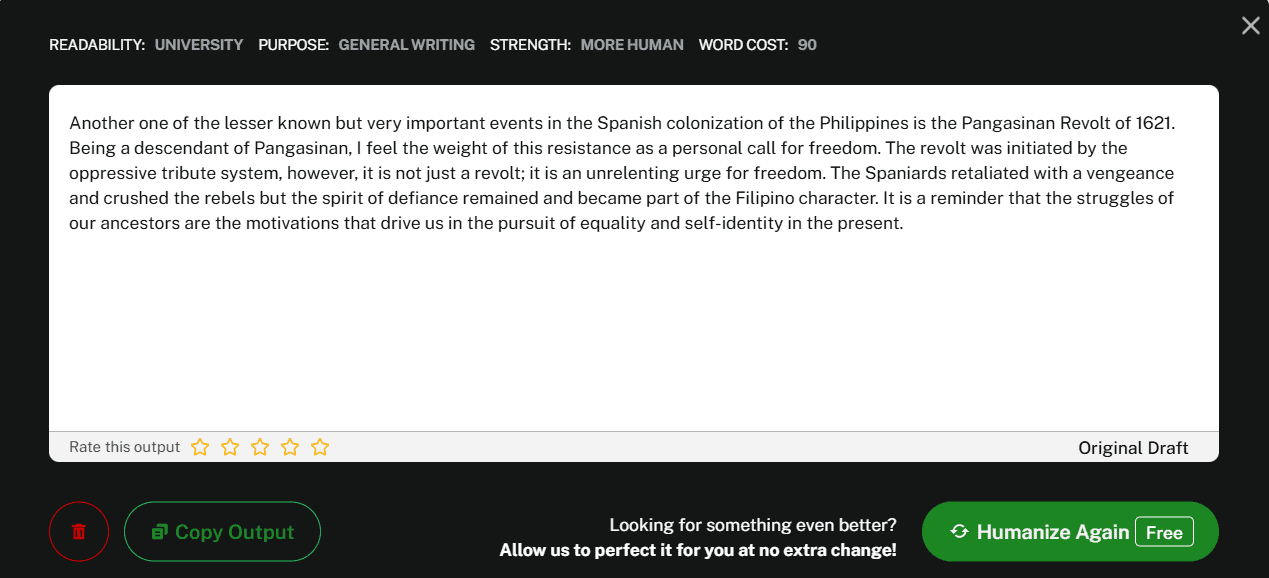

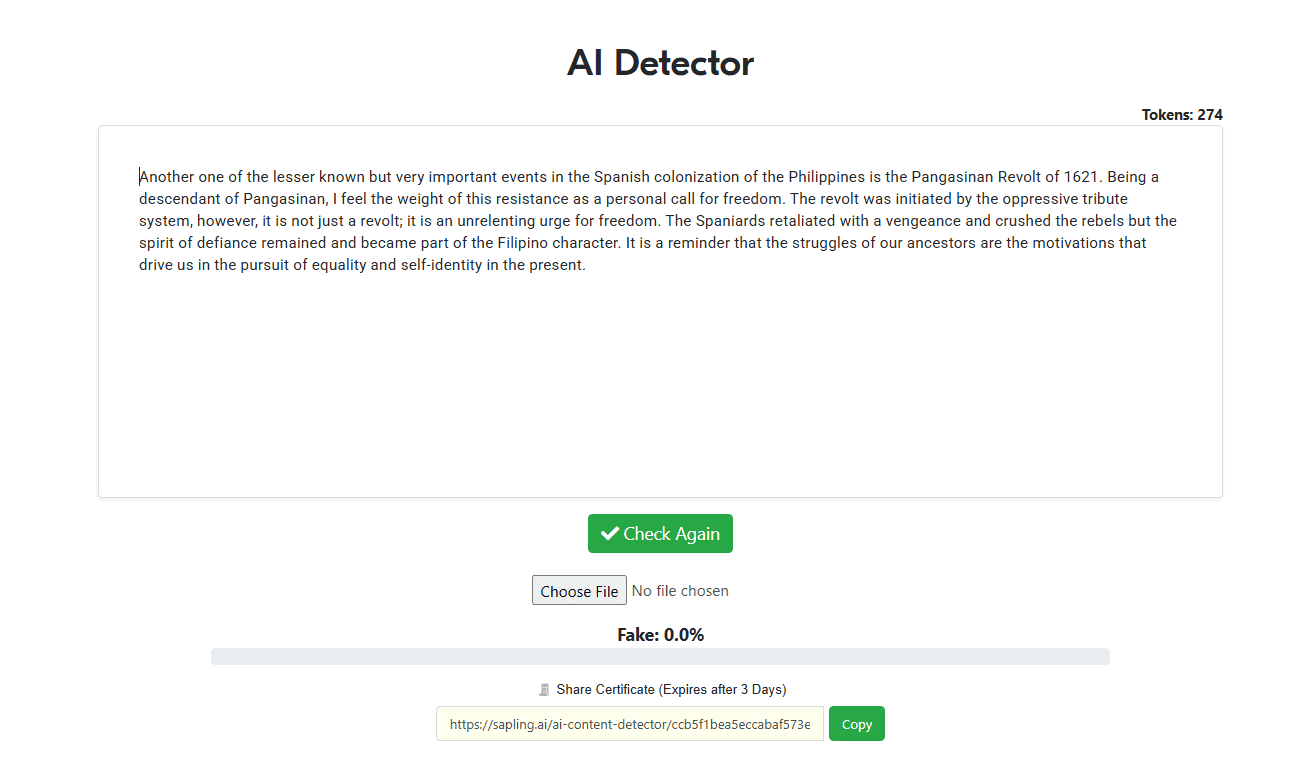

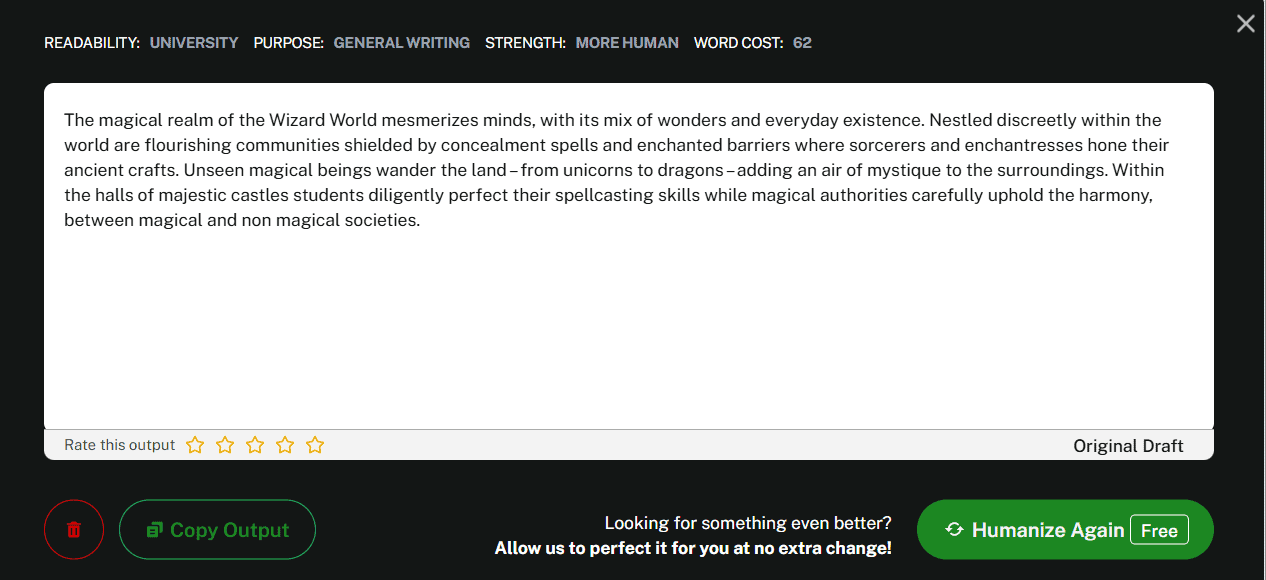

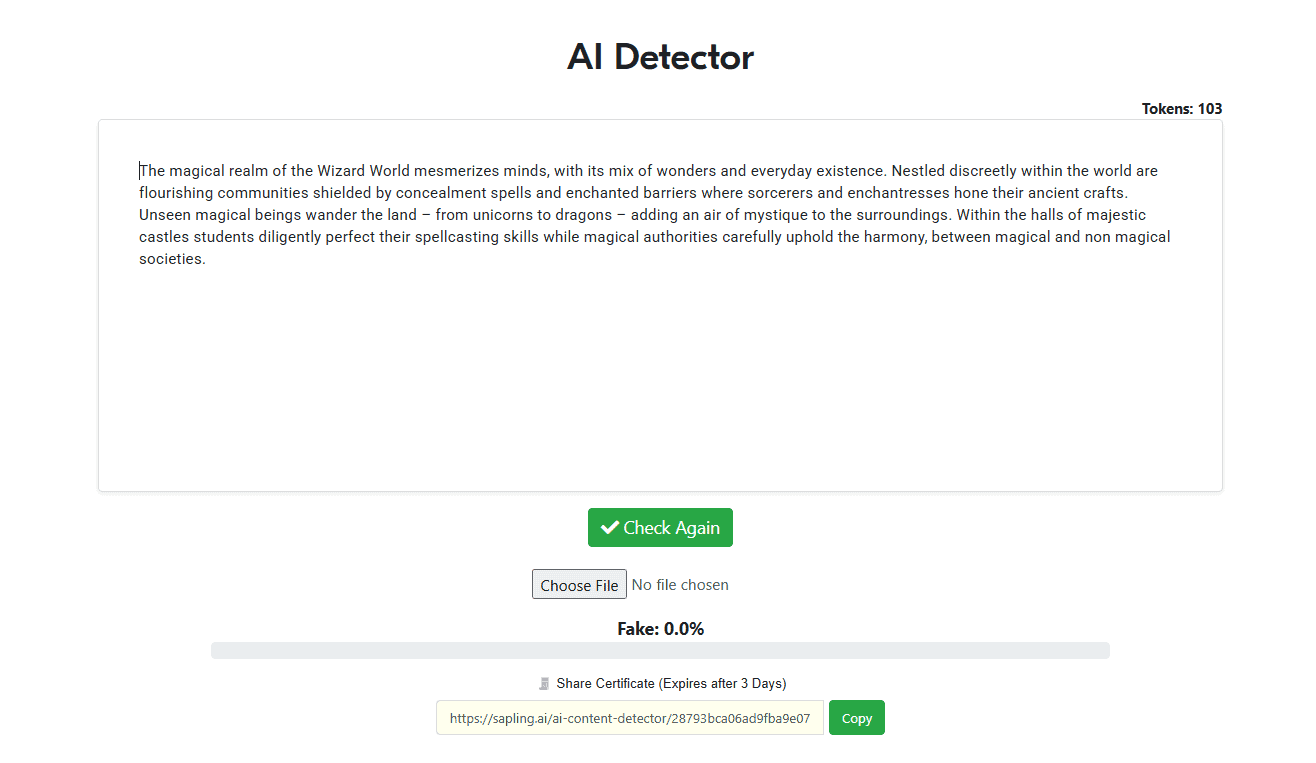

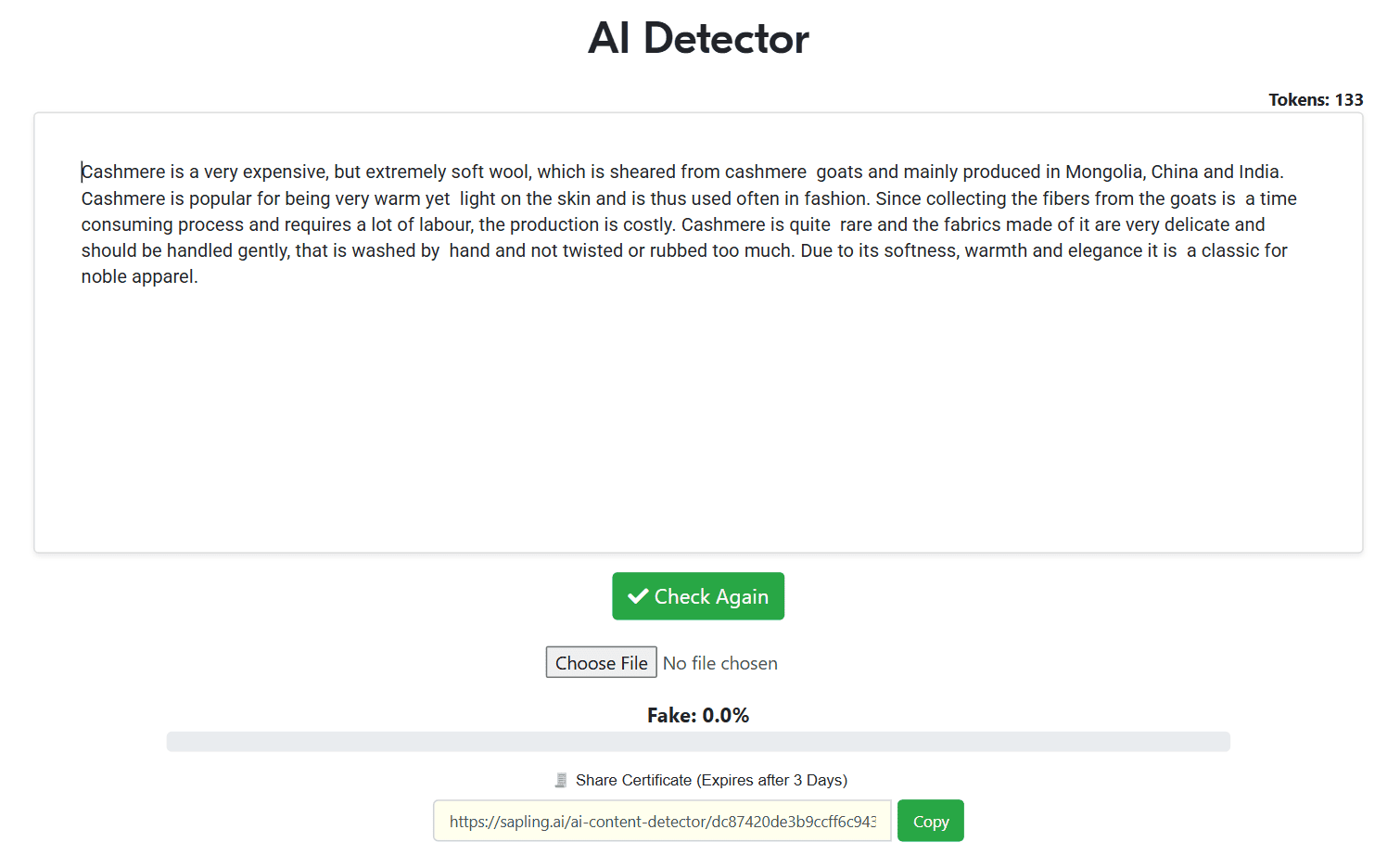

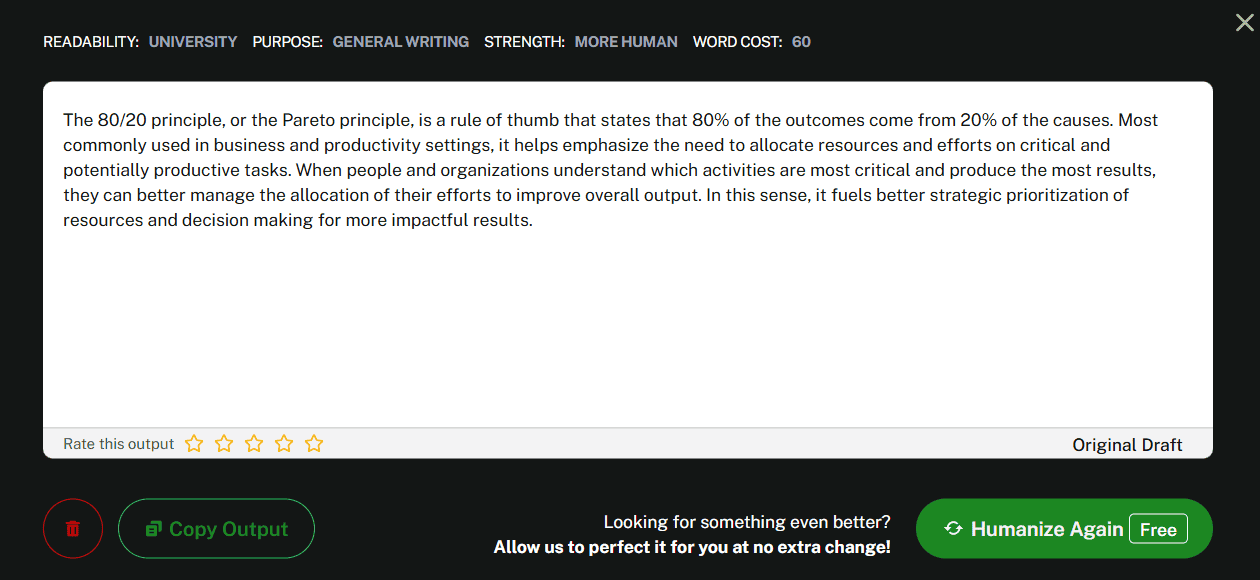

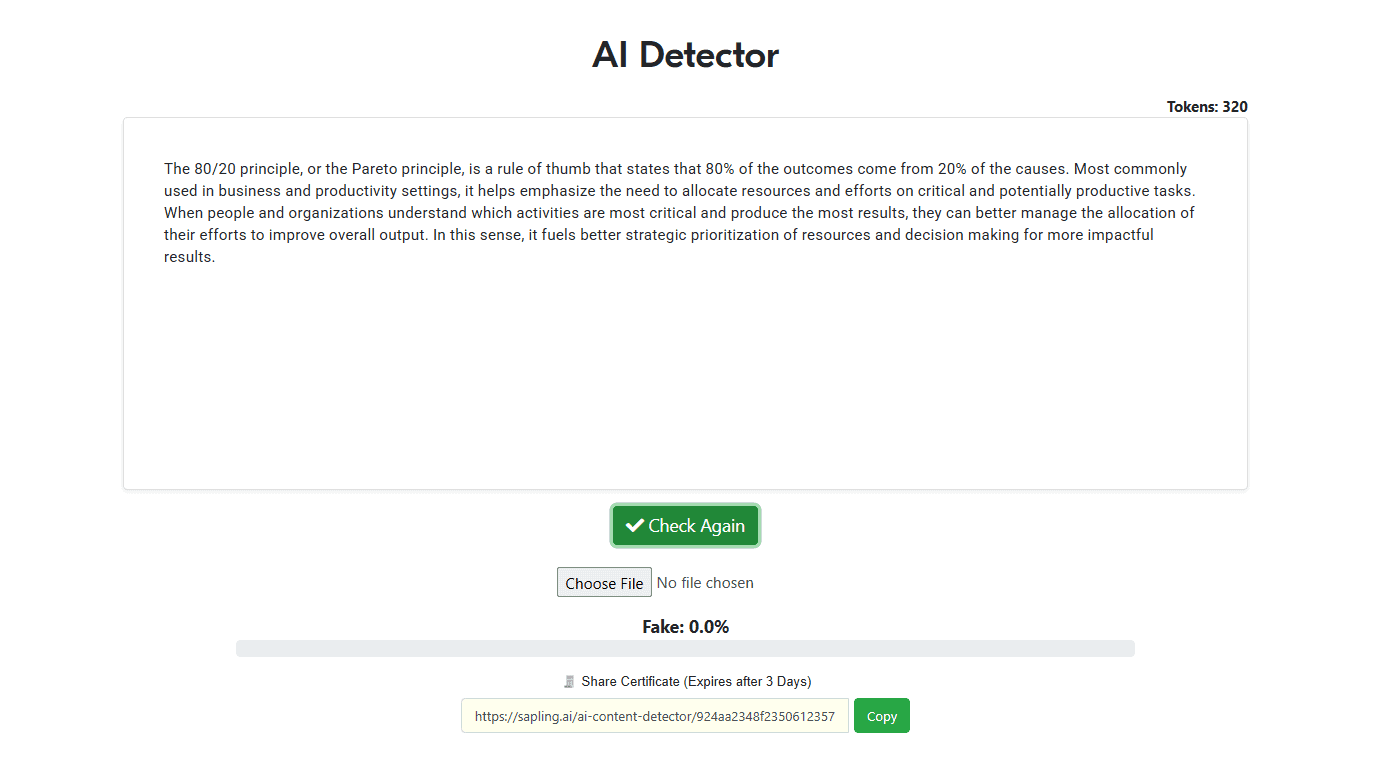

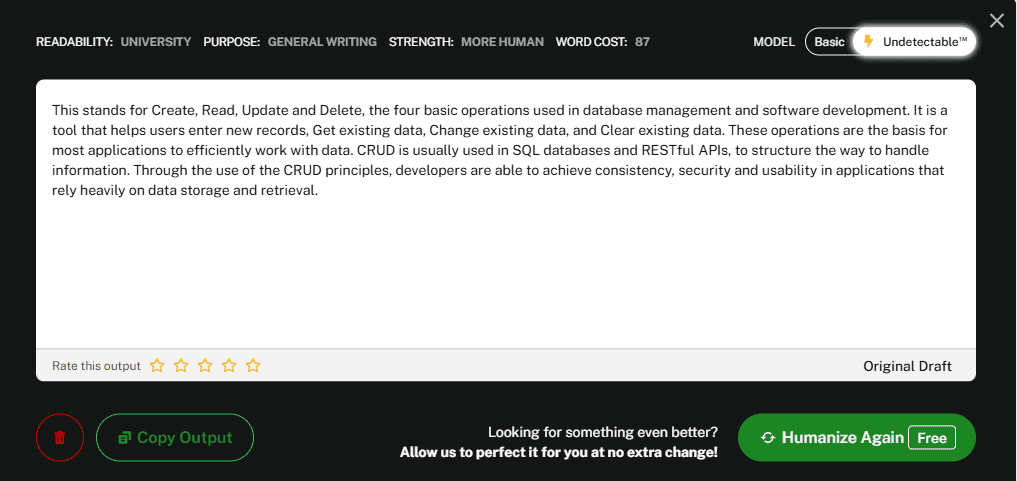

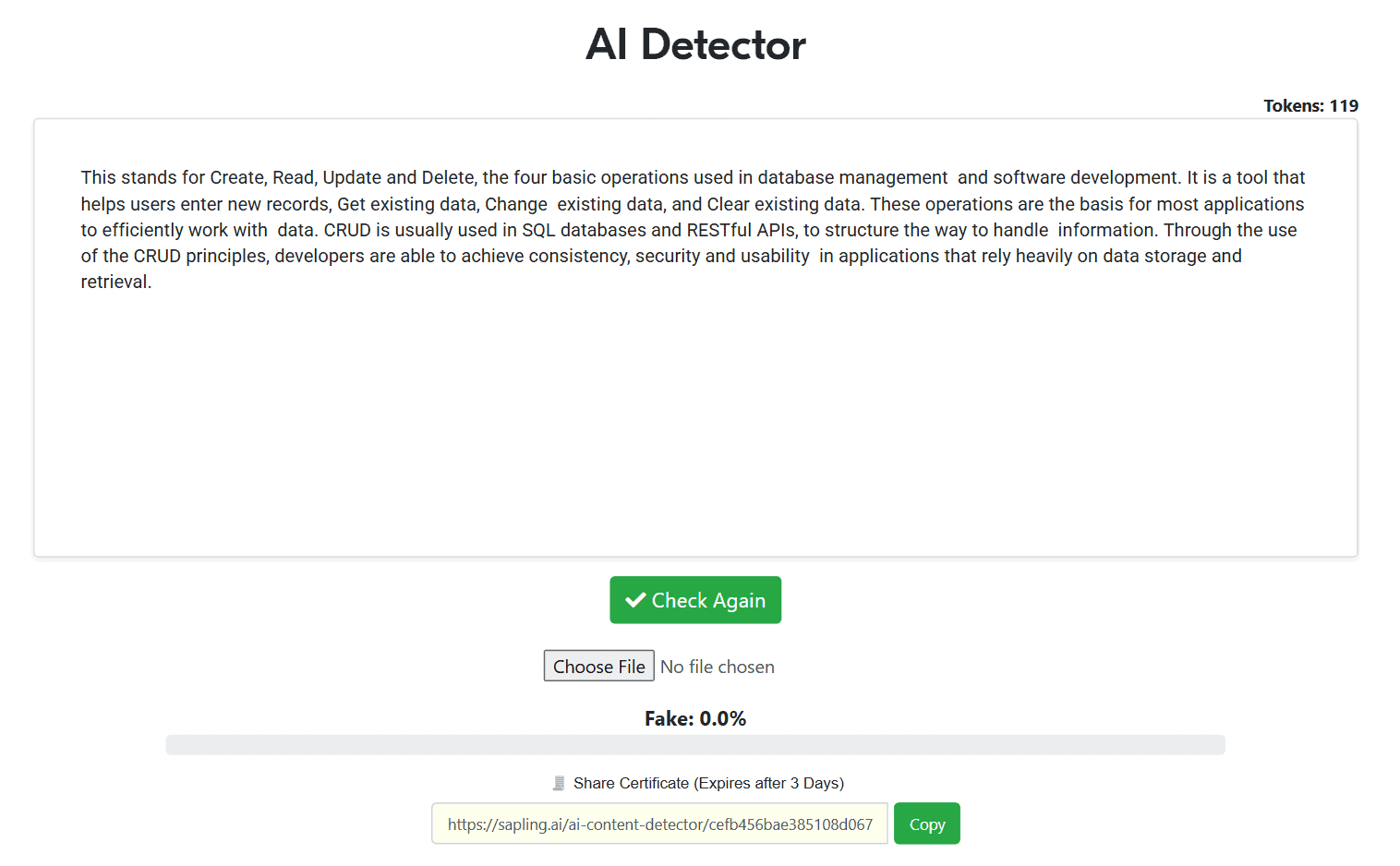

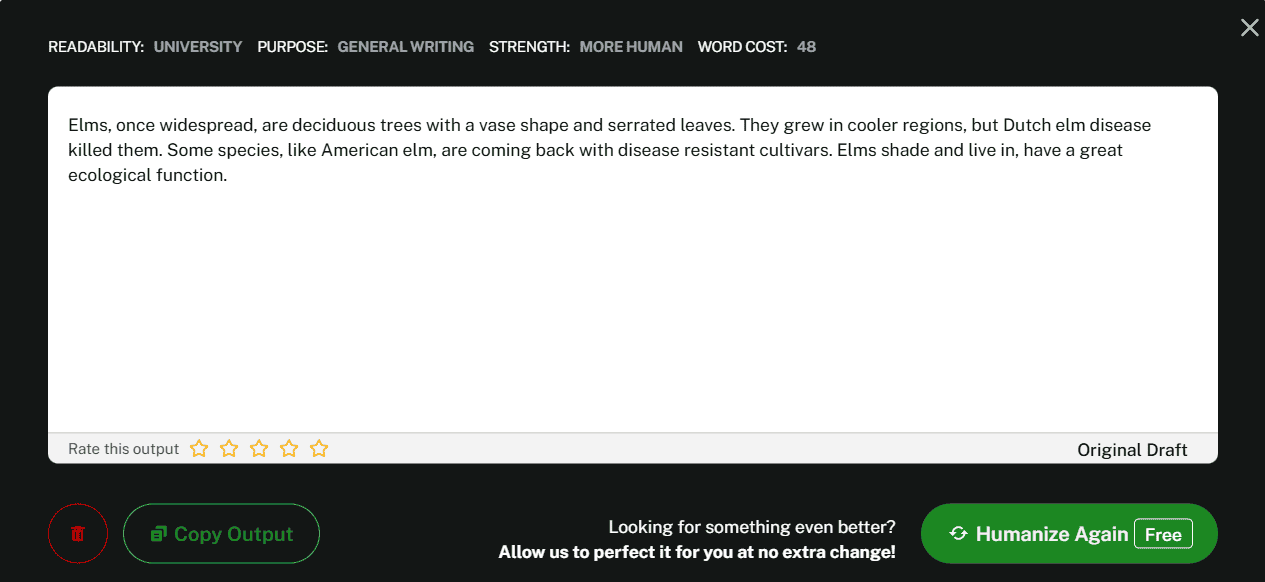

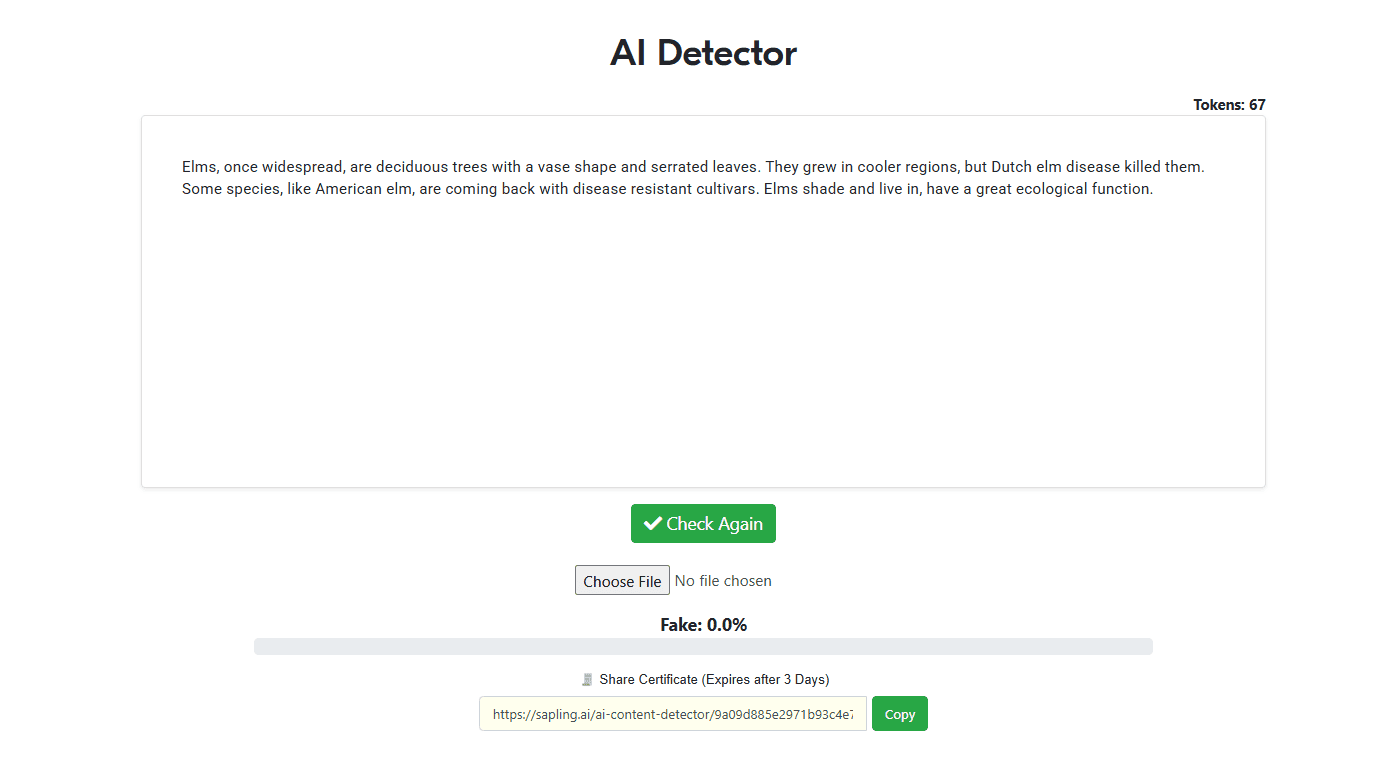

Test #2

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

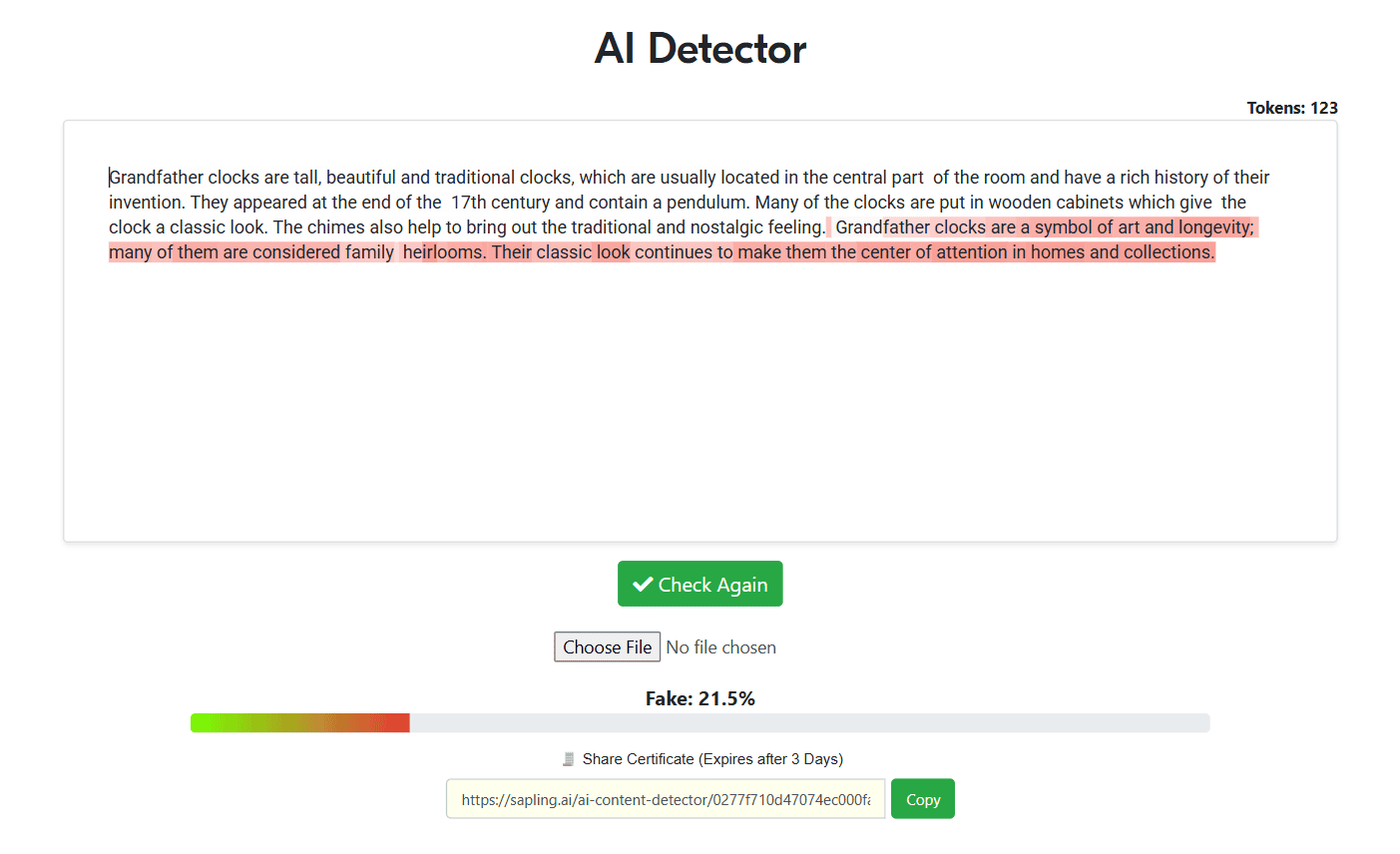

Test #3

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 21.5%

Test #4

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

Test #5

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

Test #6

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

Test #7

Undetectable AI: Passes the AI detection test.

AI Likelihood Score: 0.2%

Test #8

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

Test #9

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.1%

Test #10

Undetectable AI: Successfully passes as human!

AI Likelihood Score: 0.0%

Overall Score

| Test Number | Undetectable AI (AI Likelihood Score) |
| --- | --- |
| #1 | 0.1% |
| #2 | 0.0% |
| #3 | 21.5% |
| #4 | 0.0% |
| #5 | 0.0% |
| #6 | 0.0% |
| #7 | 0.2% |
| #8 | 0.0% |
| #9 | 0.1% |
| #10 | 0.0% |
| Average score | 2.19% |
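
The overall score looks like a plain arithmetic mean of the ten AI likelihood scores above. If you want to check the math yourself, here is a minimal Python sketch. The variable names are my own and nothing here comes from Undetectable AI or any detector; it just redoes the arithmetic on the numbers from my tests.

```python
from statistics import mean, median

# AI likelihood scores (in percent) for tests #1 through #10, copied from the results above.
scores = [0.1, 0.0, 21.5, 0.0, 0.0, 0.0, 0.2, 0.0, 0.1, 0.0]

# Overall score: the arithmetic mean of all ten tests, rounded to two decimals.
average = round(mean(scores), 2)

# The median is less sensitive to a single high reading such as test #3.
middle = median(scores)

# Mean of the other nine tests, leaving out the highest score (test #3).
without_highest = sorted(scores)[:-1]
average_without_highest = round(mean(without_highest), 2)

print(f"Average: {average}%")
print(f"Median: {middle}%")
print(f"Average without test #3: {average_without_highest}%")
```

The takeaway is that nearly all of the 2.19% comes from test #3. The median across the ten tests is 0.0%, and the other nine tests average about 0.04%.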

The Ethics Question

Let’s be honest: anytime you talk about “bypassing” something, the ethics come up. Here’s my take.

AI writing itself isn’t unethical. The reality is, most people already use it — whether it’s a brainstorming prompt in ChatGPT, a draft for a cover letter, or even a quick Grammarly polish.

What’s unfair is when detectors mislabel content and penalize people who aren’t even misusing AI in the first place.

Undetectable AI doesn’t create a fake reality. It doesn’t write essays for you or replace actual work. It simply takes AI-written text and makes it sound natural—removing that obvious “AI” edge. In other words, it helps responsible users avoid false positives.

If you’re using it to submit something that’s genuinely yours, or to make AI-assisted writing more authentic, there’s nothing wrong with that. It’s when you use it as a crutch to avoid effort entirely that the problems start.

The Bottom Line

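So, does Undetectable AI work? In my opinion: yes, it does, and it’s gotten even better in 2025. In my ten tests, nine samples scored 0.2% or lower on AI likelihood, and the average across all ten was 2.19%.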

Sometimes you’ll still want to edit the output yourself to match your exact tone or add that last bit of polish. But as a way to reduce risk, increase fluency, and help AI text blend in, it’s one of the strongest tools out there right now.

Compared to Grammarly’s AI Humanizer, Ahrefs’ rewrite tool, or smaller competitors like HideMyAI, Undetectable AI offers the best overall package. It balances usability, features, and consistency, while staying updated against newer detection models.

And for students, freelancers, and job seekers alike, that peace of mind is worth it.
