OpenAI Announces Experimental DALL-E 2 Facial & Imaging Models

OpenAI recently released new DALL-E 2 models with improved image sharpness and facial generation. Images are much higher quality and show far more detail than before, a massive jump from previous models.

Justin Gluska

Updated April 13, 2023

a colorful and modern treasure chest sitting in the middle of a dark, barren, empty field

Reading Time: 5 minutes

Can you believe it's been almost a year since DALL-E 2 was announced? Less than 12 months ago the world was shocked by a text-to-image tool that has since become completely normal.

With the release of DALL-E came other popular image generation tools that all have their own unique style to them. DALL-E is great for descriptive and realistic photos, Midjourney is great for abstract and artistic styling (more painting-like), and Stable Diffusion is great for colorful, illustrative depictions of art.

While DALL-E has been receiving updates under the hood over the last few months, an updated model was recently announced and beta access was given to a select few. The new model was released to testers to collect feedback on its usefulness and the quality of its generations, and to identify safety issues and instances of bias.

The experimental model is only live for the next 10 days. After that, it will be removed and the data will be used to re-train and update the public-facing DALL-E 2 model.

The final release date is not precisely known, but rumors suggest the public launch will happen sometime within the next 2 months.

DALL-E 2 Facial Photorealism & Image Sharpening Update

The new experimental DALL-E update is a work-in-progress preview of a future DALL-E release. It seems to focus on people and faces, in addition to improving DALL-E's general image generation.

Straight away I noticed a difference

I no longer see as many annoying text scrambles, and faces are clearly improved. In both cases the results are now feasible to use publicly. The older model almost always messes up fingers or facial structures; the new one handles them a lot better.

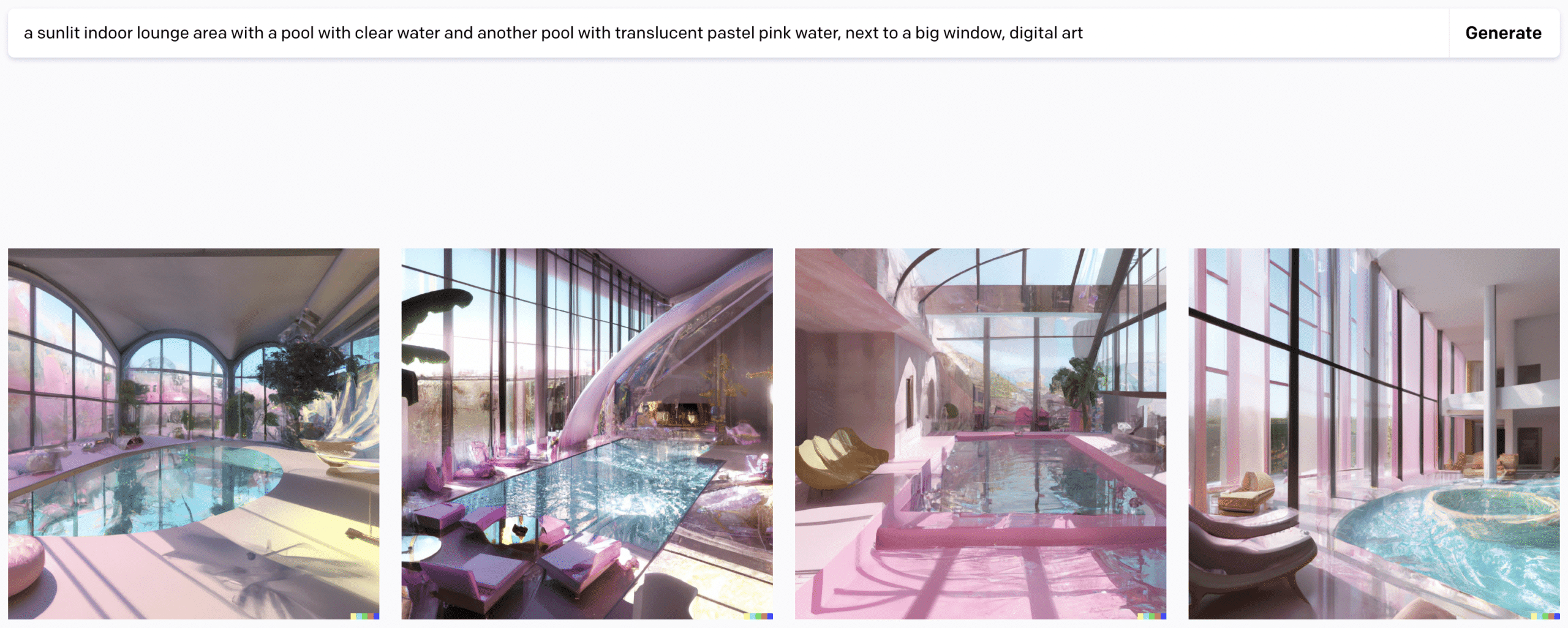

Here are a few examples of general image sharpening. I'll compare the old model with the new model using the exact same prompt to show the differences. These results aren't conclusive, but they do a good job of highlighting some of the biggest changes. The biggest improvements are in the detail of people, objects, and backgrounds. I've also noticed that text generation (asking for specific words) produces better results, but I haven't tested this extensively enough.

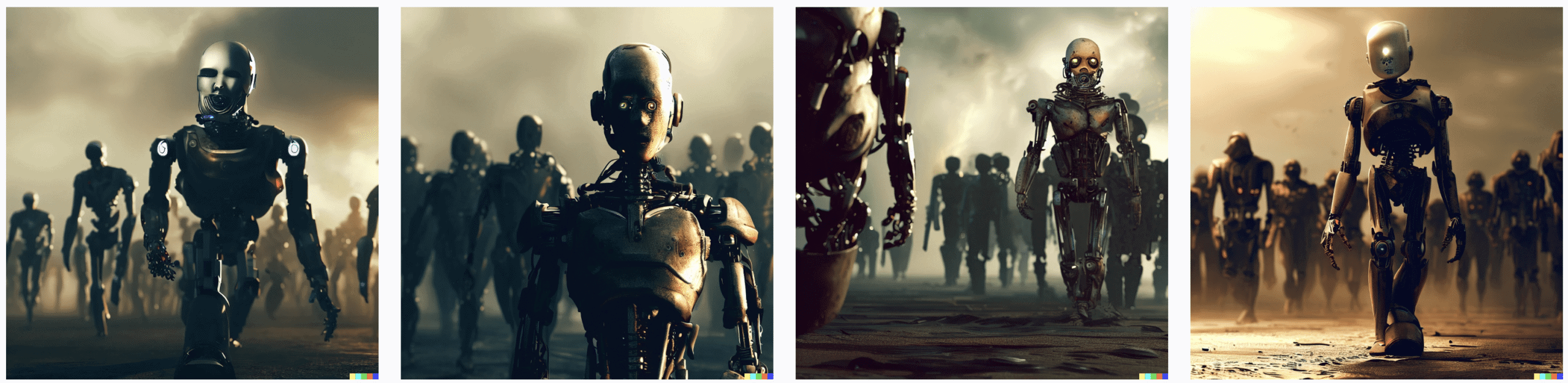

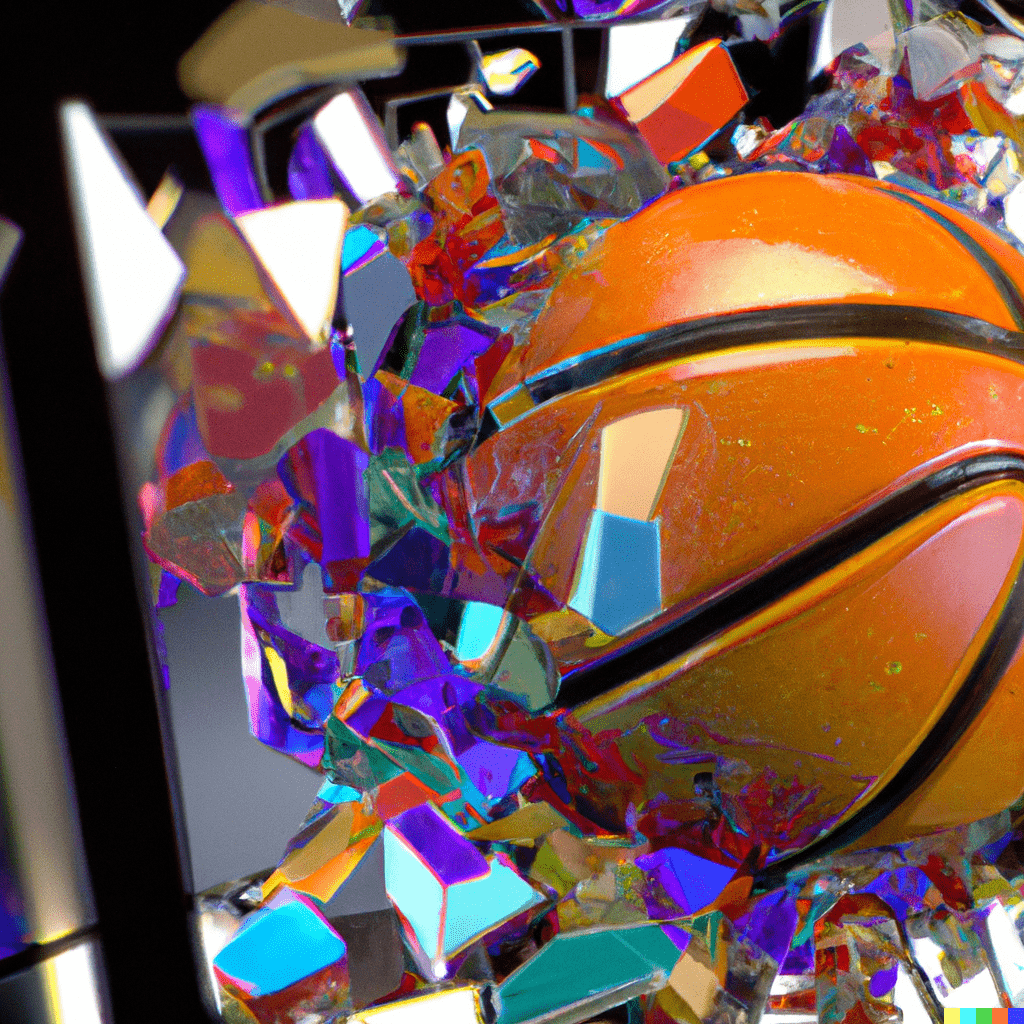

Image Sharpening

You'll notice the keen attention to detail that seems to be highlighted in this update. Take a look at this first image of a basketball shattering a backboard. The first image is from the old model, the second from the newer model. You'll notice the higher quality, more vivid colors, and better graphics on the ball and the backboard.

Prompt: a 4k render of a basketball shattering a stained glass colorful basketball backboard in a photorealistic style

Prompt: a german chef cooking in the kitchen outside of a restaurant in Munich during oktoberfest

Prompt: a dog smiling outside the window of a car on a sunny, winter day with snow on the ground
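If you want to repeat this kind of same-prompt comparison yourself, it helps to keep a small log of which model did better on each prompt. Here's a minimal sketch of that idea in Python. The `Comparison` class, the `tally` helper, and the verdict labels are all hypothetical names made up for illustration; nothing here calls DALL-E or any OpenAI API, and only the basketball verdict reflects what's described above. The other two entries are left undecided on purpose.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical labels for which model produced the better image.
VERDICTS = ("old", "new", "undecided")


@dataclass
class Comparison:
    """One prompt run against both the old and the experimental model."""
    prompt: str
    verdict: str = "undecided"
    notes: str = ""

    def __post_init__(self):
        # Reject typos so the tally below stays meaningful.
        if self.verdict not in VERDICTS:
            raise ValueError(f"verdict must be one of {VERDICTS}")


def tally(comparisons):
    """Count how many prompts each verdict received."""
    counts = Counter(c.verdict for c in comparisons)
    return {v: counts[v] for v in VERDICTS}


log = [
    Comparison(
        "a 4k render of a basketball shattering a stained glass colorful "
        "basketball backboard in a photorealistic style",
        verdict="new",
        notes="higher quality, more vivid colors, better ball and backboard",
    ),
    Comparison(
        "a german chef cooking in the kitchen outside of a restaurant "
        "in Munich during oktoberfest"
    ),
    Comparison(
        "a dog smiling outside the window of a car on a sunny, winter day "
        "with snow on the ground"
    ),
]

print(tally(log))
```

Fill in the verdicts and notes as you review each pair of images. With only a handful of prompts the tally is anecdotal, not conclusive, but it keeps the comparison honest.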

Facial Photorealism

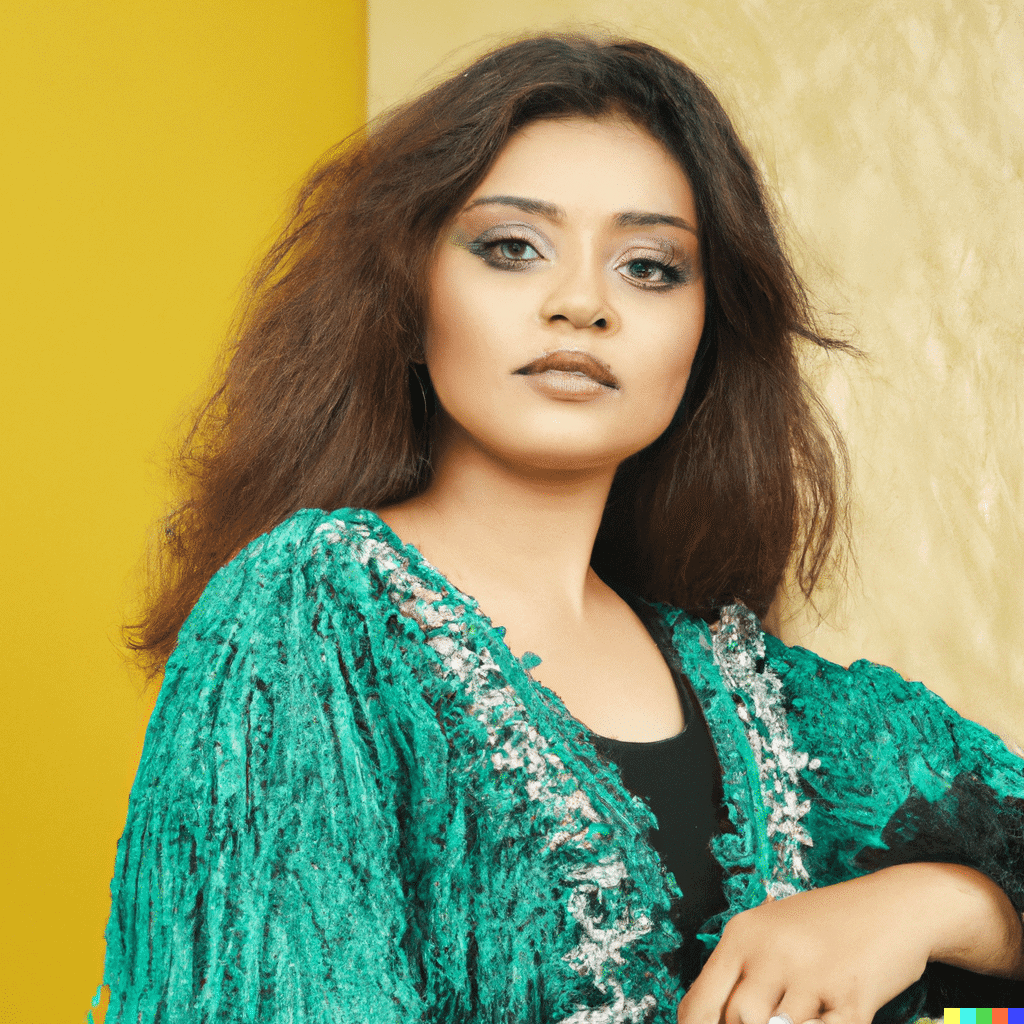

Another big part of the update is how much more detail gets included in facial images. They're definitely still not perfect, but this is a major step in the direction of what everyone imagined when they learned of DALL-E. Take a look at these. Same prompt, old model on the left and experimental on the right:

Prompt: a family portrait of a family during the great depression close-up and 8k photorealistic showing tons of emotion

Some creators had even trained their own models due to how bad faces were in the past. An example of this is PhotoAI by levelsio.

Will the next major update to DALL-E include a fix for all of this? We're on the right track, that's for sure. Here are a few more examples highlighting facial changes:

Prompt: a close up of a smiling boy holding a bunch of colorful balloons in an empty field with grass

Prompt: a 4k closeup picture of a model sitting in front of a backdrop of a photoshoot

Buggy Prompt Results

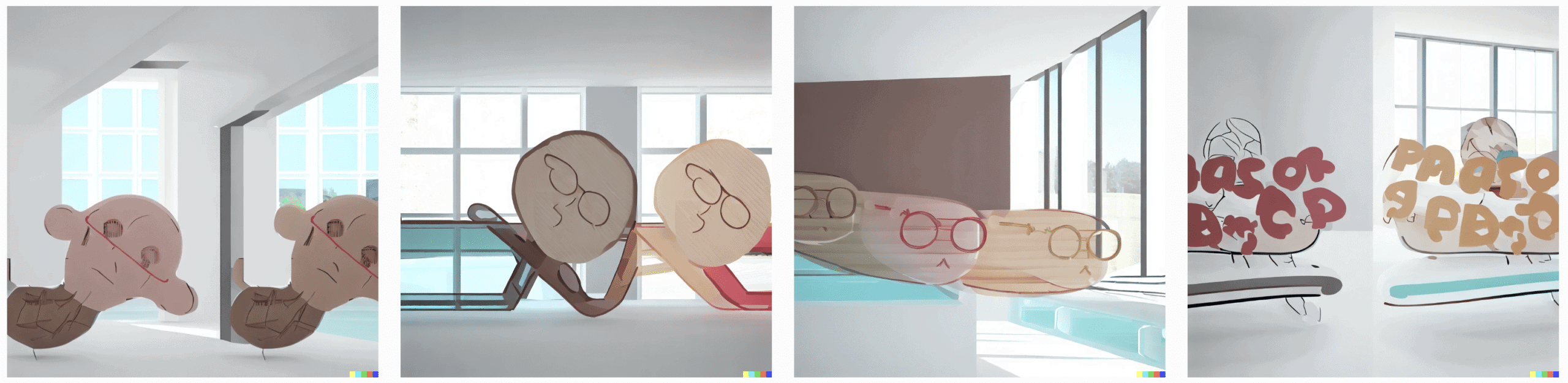

And what would a new model be without buggy pictures, right? I've noticed a few prompts just don't get understood properly, no matter how many times they get resubmitted.

I used the following prompt on v2 and then experimental, which somehow got it completely messed up in the new version. Are those potatoes? What???

If you received access to the new model, make sure to report any bugs to OpenAI by emailing them at dalle@openai.com. Those with access have also been invited to join a Discord server to easily share screenshots of misleading generations.

Final Thoughts

If you're an avid image generation fan or are just interested in DALL-E, this new preview sheds light on what's soon to come. I'm sure this has been in development for months on end and is really only a small preview of everything else OpenAI has in store.

It's truly been an incredible last year: DALL-E 2, GPT-3.5, and ChatGPT, all from the same company! Have you been given access to the new experimental model? If so, what differences do you notice? Share your thoughts and ideas in the comments below!
