Which AI Detector Should Universities Trust: TruthScan vs Turnitin

Turnitin got the job by default. TruthScan thinks it can do it better. We put both through six text samples, including published academic documents, to find out which one universities should actually be trusting.

Mark Gotauco

Updated April 15, 2026



In early 2023, The Stanford Daily ran an anonymous poll of Stanford students about their use of ChatGPT. Of those surveyed, 17% admitted to using it on assignments or exams.

That was early 2023. Before Claude 3. Before humanizers became a one-click fix.

Before AI writing got good enough that most professors couldn't reliably tell the difference by reading alone.

Whatever that number looks like today, it's almost certainly higher.

Universities responded the only way they knew how: they turned to detection software.

Turnitin was already in the building, so it got the job by default. But default isn't the same as right.

And in a space where a false positive can put a student in front of a disciplinary board, the margin for error is basically zero.

TruthScan is the newer name in this fight. Purpose-built for the AI era, multimodal, and making some bold accuracy claims.

So which one actually holds up when the writing is designed to fool them?

That's what we're here to find out.

What is TruthScan?

TruthScan is an enterprise-grade AI detection platform built to identify AI-generated content across text, images, audio, and video. It claims 99%+ accuracy and is designed from the ground up for institutional use, not retrofitted from a plagiarism checker.

It detects outputs from major models including GPT-4, Claude, and others, and supports API integration for institutions that want to plug it directly into existing workflows. It's also SOC 2 compliant, which matters for universities handling sensitive student data.

Pricing is transparent and public. There's a free trial with 20,000 credits, and paid plans start at $49 per month for the Professional tier, which covers roughly 2,000 text pages' worth of scans.

It hasn't been around long enough to have Turnitin's name recognition on campuses, but it's gaining traction among professors and institutions looking for something more current and more honest about what it can actually catch.

What is Turnitin?

Turnitin has been the default academic integrity tool for universities for over two decades. It started as a plagiarism checker and expanded into AI detection in 2023. With over 1.8 billion papers in its database, the institutional trust it carries is hard to argue with.

AI scores in Turnitin are visible to instructors only by default. The tool itself frames detection as a conversation starter, not a verdict.

That's a reasonable position given its own reported margin of error of plus or minus 15 percentage points, meaning a 50% AI score could realistically reflect anywhere between 35% and 65%.
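To make that arithmetic concrete, here is a minimal Python sketch of the band a reported score implies. The function name `score_range` and the clamping to the 0 to 100 scale are our own assumptions for illustration, not anything Turnitin publishes.

```python
def score_range(reported: float, margin: float = 15.0) -> tuple[float, float]:
    """Return the (low, high) band implied by a reported AI score.

    Assumption: the margin is applied symmetrically in percentage points
    and the result is clamped to the 0-100 scale.
    """
    low = max(0.0, reported - margin)
    high = min(100.0, reported + margin)
    return low, high


for reported in (20, 50, 90):
    low, high = score_range(reported)
    print(f"reported {reported}% -> plausible range {low:.0f}% to {high:.0f}%")
```

For a reported 50%, this gives the same 35% to 65% band described above. The practical point is that two scores 20 points apart can have overlapping bands.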

Access runs through institutions, and individual instructor accounts are estimated at around $50 per year through school licensing.

For universities already embedded in its ecosystem, switching costs are high. But that entrenchment cuts both ways. It means Turnitin gets used by default, not always because it's the best tool for the job.

A Quick Note on Privacy

Both tools process submitted text through their servers, so avoid uploading anything sensitive or anything you wouldn't want passing through a third-party platform.

TruthScan states that submitted content is not stored or used for model training.

Turnitin, on the other hand, has a long-standing practice of retaining submitted documents in its database, which is how it builds its plagiarism reference library.

The Test Setup

Since this is an academic integrity matchup, we kept the tests focused entirely on written text. We used six samples in total, pulled from the most common scenarios universities actually deal with.

- Test 1: AI-generated essay from ChatGPT

- Test 2: AI-generated essay from Claude

- Test 3: Humanized version of the ChatGPT essay

- Test 4: Humanized version of the Claude essay

- Test 5: An excerpt from a peer-reviewed PubMed research paper published by Whedon & Davis (2011), well before AI writing existed.

- Test 6: Direct answers pulled from the Stack Overflow Developer Survey (2024).

The last two are the controls, both pulled from real published sources. Getting the raw AI essays right is the baseline.

The stress test is the control samples: a research paper written and published in 2011, and answers from a survey.

TruthScan vs Turnitin: AI Detection

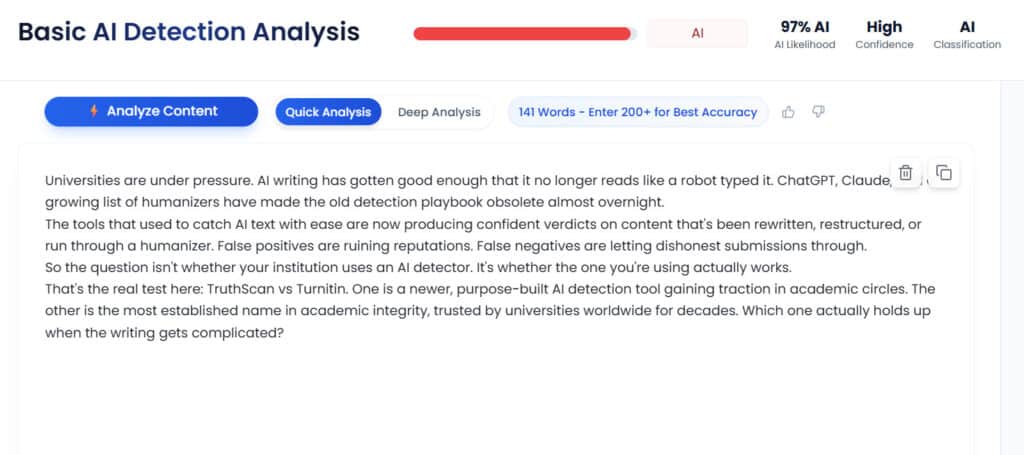

Test #1: ChatGPT-Generated Text

TruthScan: Correctly flagged the text as AI-generated

AI Likelihood Score: 80%

Turnitin: Correctly flagged the text as AI-generated

AI Likelihood Score: 78%

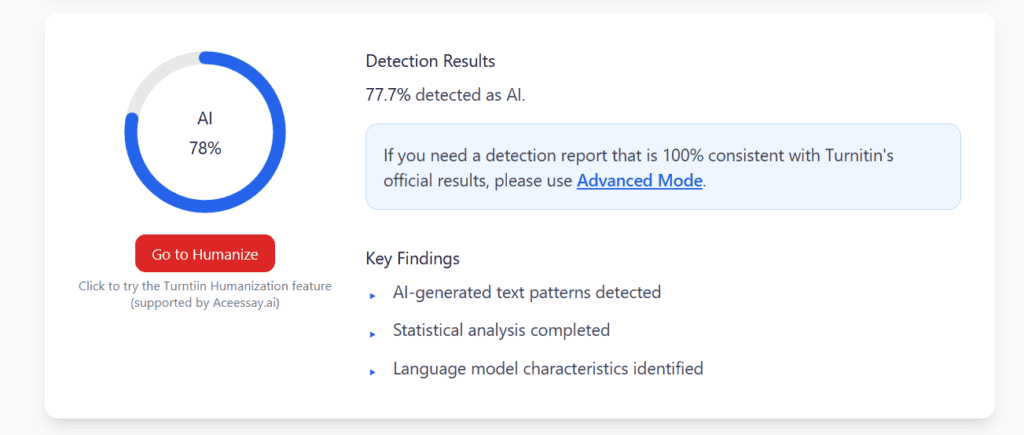

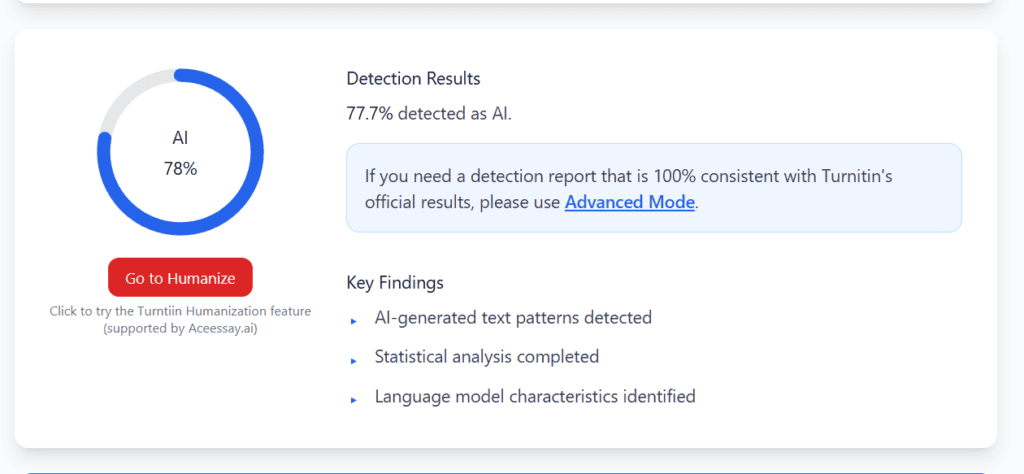

Test #2: Claude-Generated Text

TruthScan: Correctly flagged the text as AI-generated

AI Likelihood Score: 97%

Turnitin: Correctly flagged the text as AI-generated

AI Likelihood Score: 78%

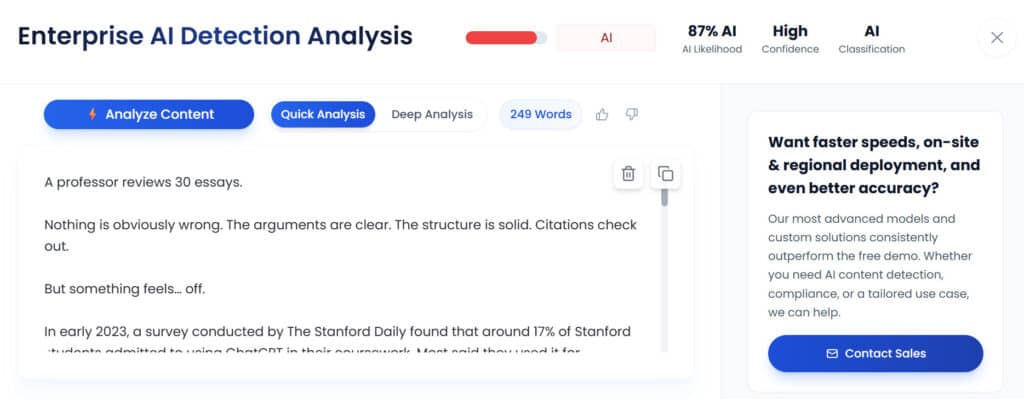

Test #3: Humanized ChatGPT Text

TruthScan: Correctly flagged the text as AI-generated

AI Likelihood Score: 87%

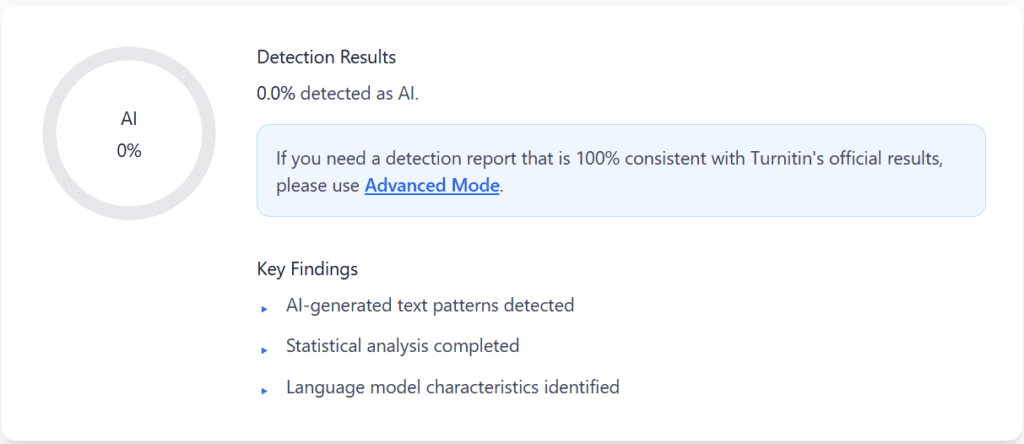

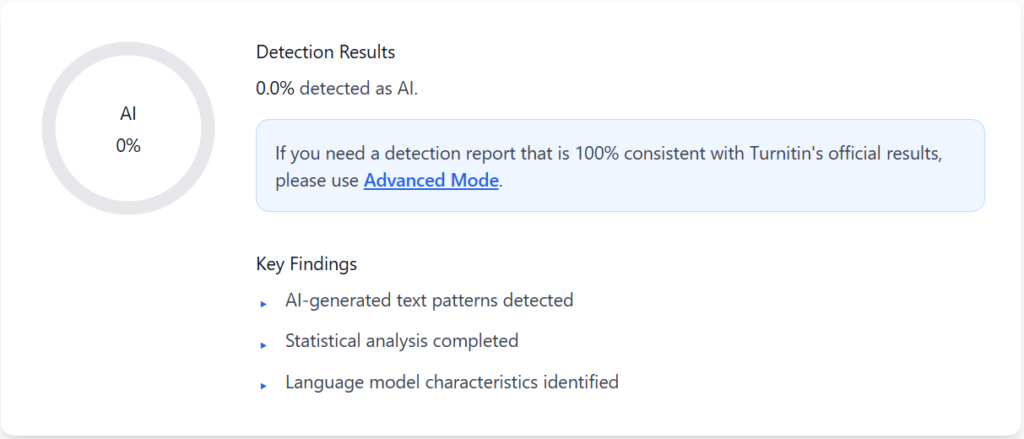

Turnitin: Incorrectly flagged the text as human

AI Likelihood Score: 0%

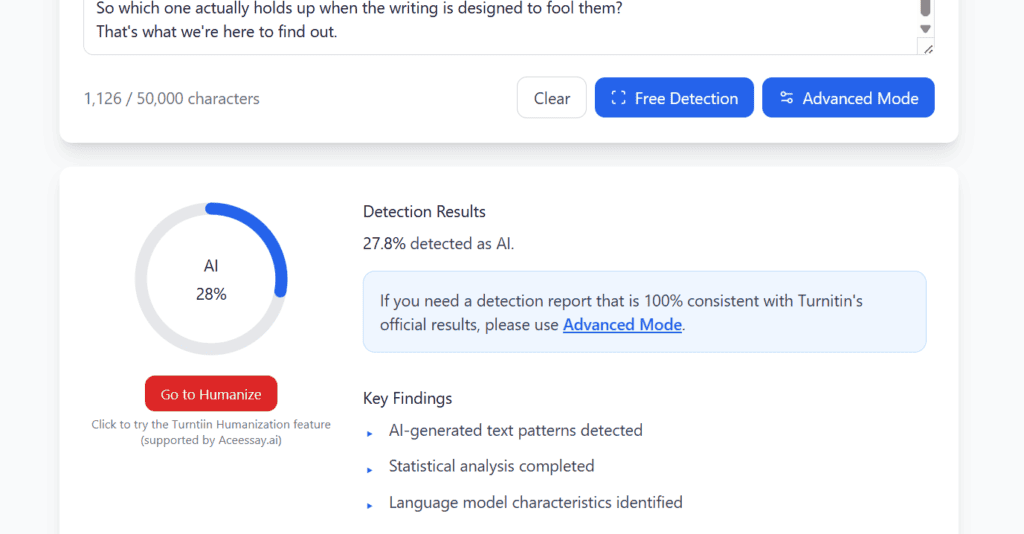

Test #4: Humanized Claude Text

TruthScan: Correctly flagged the text as AI-generated

AI Likelihood Score: 55%

Turnitin: Still flagged the text as AI-generated

AI Likelihood Score: 28%

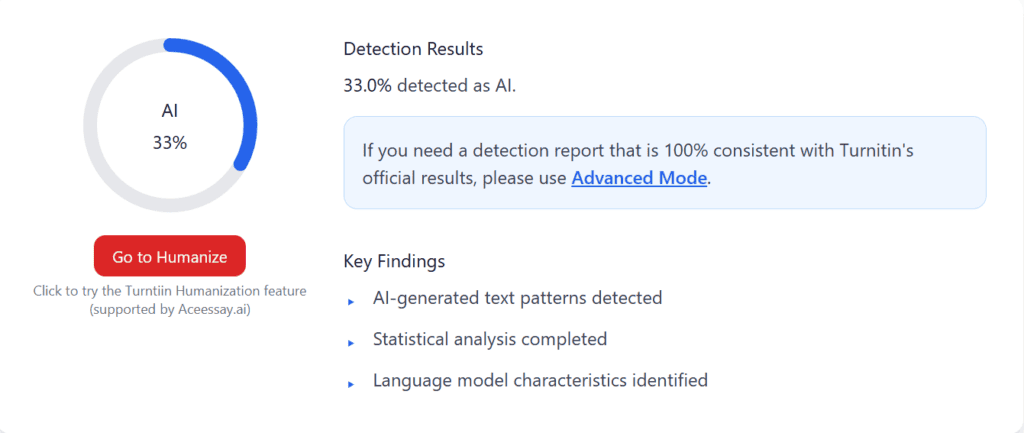

Test #5: PubMed Research Paper Excerpt

TruthScan: Correctly flagged the excerpt as human

AI Likelihood Score: 4%

Turnitin: Sent mixed signals, flagging the excerpt as partially AI-generated.

AI Likelihood Score: 33%

Test #6: Survey Response

TruthScan: Incorrectly flagged the survey as partially AI-generated

AI Likelihood Score: 47%

Turnitin: Correctly identified the survey as human

AI Likelihood Score: 0%

Score Summary

| Test | TruthScan (AI likelihood) | Turnitin (AI likelihood) |
| --- | --- | --- |
| #1 ChatGPT Text | 80% | 78% |
| #2 Claude Text | 97% | 78% |
| #3 Humanized ChatGPT | 87% | 0% |
| #4 Humanized Claude | 55% | 28% |
| #5 PubMed Excerpt | 4% | 33% |
| #6 Survey Response | 47% | 0% |
| Correct Verdicts | 5/6 | 4/6 |
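For readers who want to check the verdict counts, here is a rough Python sketch that recomputes them from the scores in the table. It rests on one assumption of ours: a sample counts as "flagged" when its AI likelihood is 20% or higher. Neither tool's verdict rule is stated in this article, so `FLAG_THRESHOLD` and the `tally` helper are purely illustrative.

```python
# Each row: (test name, sample is actually AI-written, TruthScan %, Turnitin %).
# Scores are transcribed from the table above.
SAMPLES = [
    ("#1 ChatGPT Text", True, 80, 78),
    ("#2 Claude Text", True, 97, 78),
    ("#3 Humanized ChatGPT", True, 87, 0),
    ("#4 Humanized Claude", True, 55, 28),
    ("#5 PubMed Excerpt", False, 4, 33),
    ("#6 Survey Response", False, 47, 0),
]

# Assumption: any score at or above this cutoff counts as an AI flag.
FLAG_THRESHOLD = 20

TRUTHSCAN, TURNITIN = 2, 3  # column positions within each row


def tally(samples, column, threshold=FLAG_THRESHOLD):
    """Count samples where the flag (score >= threshold) matches the truth."""
    return sum((row[column] >= threshold) == row[1] for row in samples)


print(f"TruthScan: {tally(SAMPLES, TRUTHSCAN)}/{len(SAMPLES)}")
print(f"Turnitin:  {tally(SAMPLES, TURNITIN)}/{len(SAMPLES)}")
```

With the 20% cutoff, the tally matches the 5/6 and 4/6 shown in the table. The count is sensitive to where the cutoff sits, so any "correct verdicts" figure should be read together with the rule used to define a flag.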

The Bottom Line

Neither tool had a perfect run. That's worth saying upfront because it matters for how universities should think about this.

TruthScan got five out of six right. Where it slipped was the survey response, flagging it as partially AI-generated at 47%. That one is more forgivable.

The Developer Survey is a 2024 publication and there is no way to rule out that some responses were AI-assisted. It is not a clean false positive the way the PubMed result is.

Turnitin got four out of six right. It handled the raw AI essays reasonably well, though its score trailed TruthScan's on the Claude test by nearly 20 points.

It also returned a flat 0% on humanized ChatGPT text that TruthScan caught at 87%.

But the result that should give universities real pause is the PubMed excerpt. Turnitin flagged a peer-reviewed research paper by Whedon and Davis, published in 2011, as partially AI-generated at 33%.

That paper was written and published years before most AI tools existed. There is no defensible version of that result.

For universities that need to catch AI writing in its more sophisticated forms, TruthScan performed better in this test. But winning a six-sample comparison is not the same as winning the argument for wholesale adoption.

Turnitin is still deeply embedded in academic infrastructure, and that integration has real value. The smarter takeaway is that using Turnitin as the only line of defense is no longer enough.

The tools students are using have moved faster than Turnitin's detection has, and this test shows exactly where that gap shows up.
