Beautifully Edit and Inpaint Images With DALL-E 2

DALL-E 2 provides the ability to edit existing photos and add content to certain sections of them. This is a game changer for anyone who wants to create composite images or simply tweak an image they have.

Justin Gluska

Updated January 17, 2023

With the recent introduction of DALL-E 2, we've seen a truly incredible tech innovation arrive after years of development. DALL-E 2 uses a 3.5-billion-parameter model, trained on a large collection of paired text and image data, to interpret prompts and produce beautiful images.

If you're obsessed with all these new text-to-image AI generators like I am, you've probably dipped your toes into each of them. After using DALL-E for about 3 months now, I've noticed what it's really good at (specific, photorealistic, and abstract content) compared to other text-to-image tools. Other generators like Midjourney and Stable Diffusion still work amazingly well, but they are tuned more toward artistic, visually pleasing styles.

In addition to completely unique artistic styles, DALL-E gives you some advanced photo editing features. For starters, you can easily edit existing photos and add content to certain sections of them. This is a game changer for anyone who wants to create composite images or do something as simple as tweaking an image they already have. DALL-E is trained on millions of stock images, so it does a great job of incorporating real-world objects into your creations. Combining picture editing with that much training data allows for some really mind-blowing results that I haven't been able to reproduce with other image-producing products.

Over the last few days, I've tried to master the art of inpainting with DALL-E. Inpainting is the formal term for the process of filling in missing portions of an image. It's a technique that's been used for years by graphic designers and artists to fix photos, but only recently has AI become good enough to do it seamlessly with generated art. And DALL-E is pretty good at it.

Expanding Image Inpainting with DALL-E

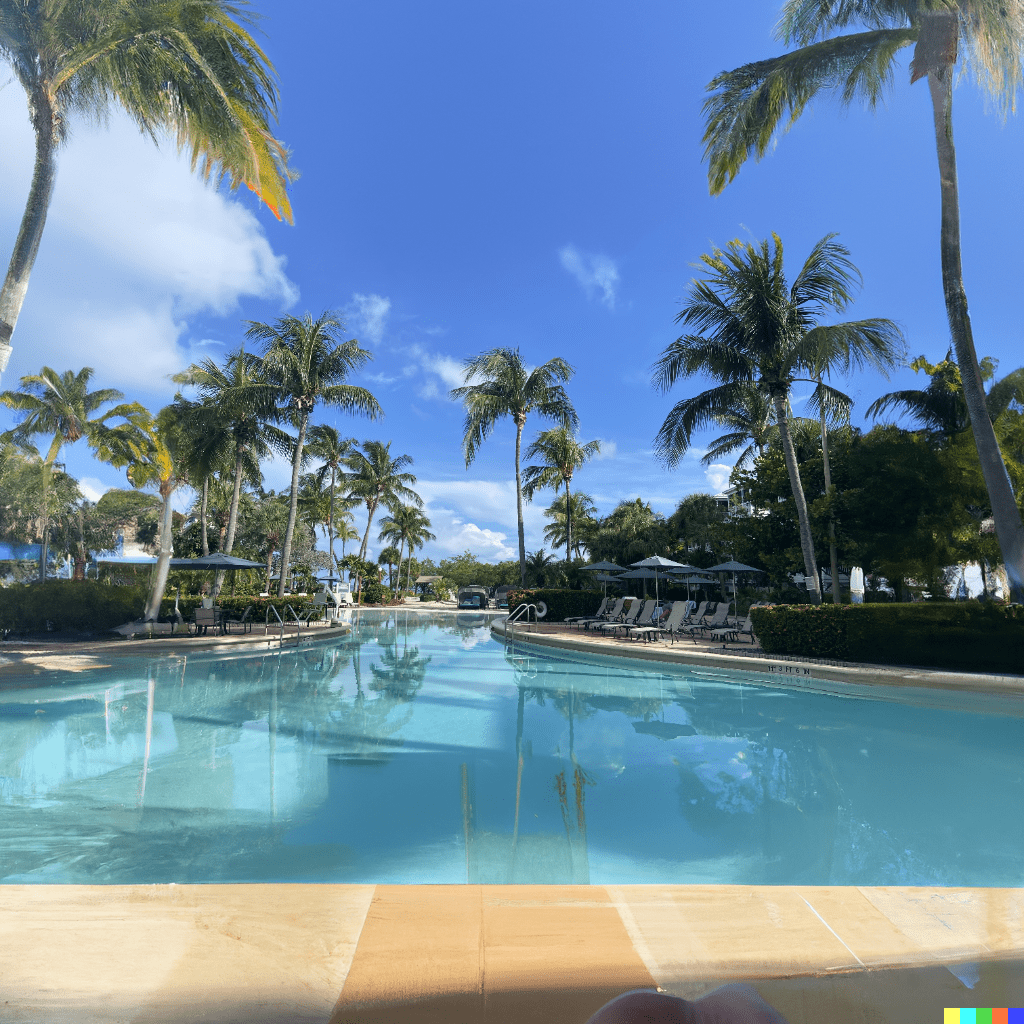

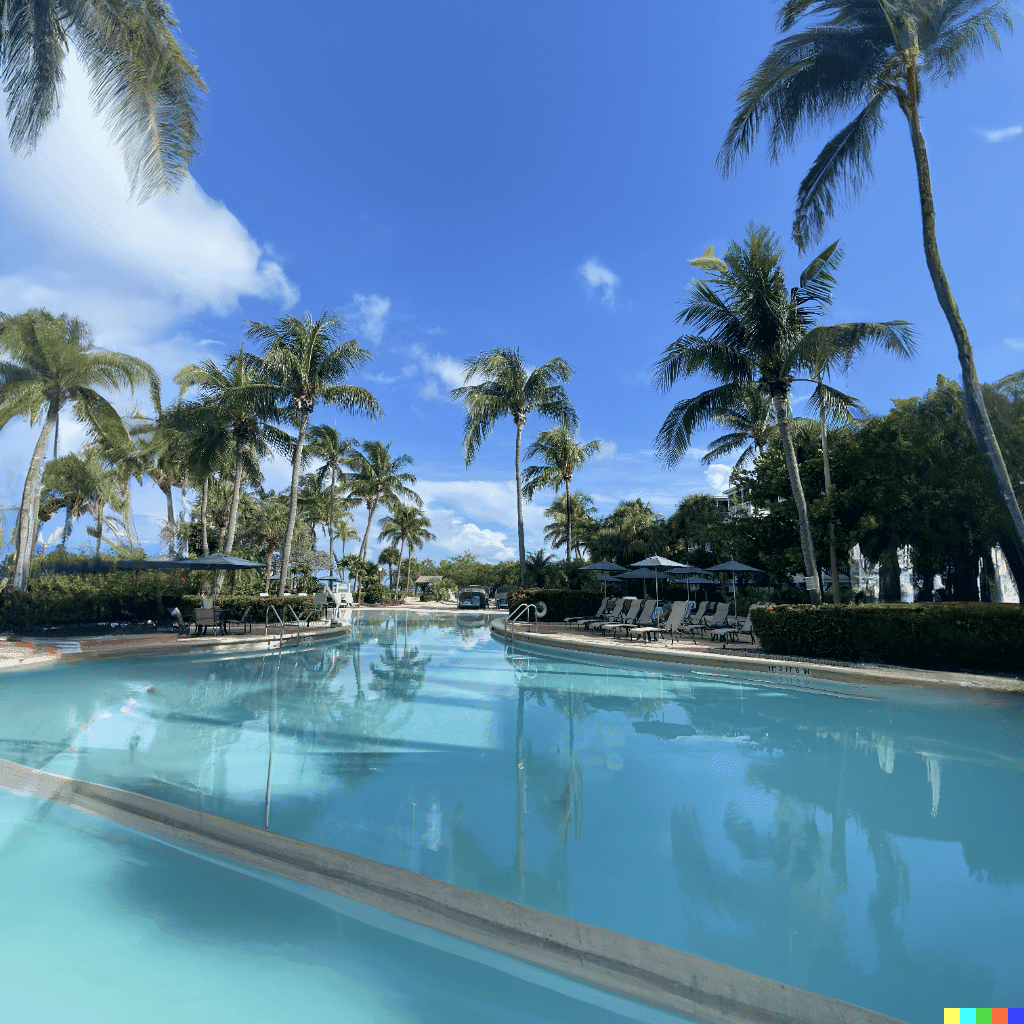

This technique was something I thought of when I was scrolling through pictures of a beautiful resort and noticed the picture was great – but would be even better if I could add more water/beach/beauty to it. I've seen people use inpainting to remove things like power lines and telephone poles from pictures, but I wanted to see if I could use it for something a little more creative. So I decided to literally fill in existing space with an interpolated version of the existing image (more water!).

I used Photoshop to increase the canvas size by 200% in both width and height, leaving the original picture in the middle with empty padding all around it. I then uploaded that image to DALL-E, erased the sides, and let it do the rest. The results, as you can see in the images, are pretty unique.

This seems to work better with abstract pictures than with those that have a lot of sharp detail. I imagine it would also be interesting to run an image that has been edited in Photoshop first, as the AI would then have more to work with when expanding the image. This feature should really only be used for editing inside an image, as outpainting is better for expanding outwards.
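For anyone who would rather script the padding step than do it in Photoshop, here is a minimal sketch in Python. It assumes the Pillow library is installed and that "200%" means a canvas twice as wide and twice as tall as the original; the function names `padded_canvas` and `pad_image` are made up for this example. The padding is left transparent so it reads as the area to fill.

```python
from typing import Tuple

Size = Tuple[int, int]


def padded_canvas(width: int, height: int, scale: float = 2.0) -> Tuple[Size, Size]:
    """Return (canvas_size, paste_offset) that centers the original image.

    scale=2.0 is an assumption: a canvas at 200% of the original size.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if scale < 1:
        raise ValueError("scale must be at least 1")
    canvas_w = round(width * scale)
    canvas_h = round(height * scale)
    # Split the extra space evenly so the original sits in the middle.
    offset = ((canvas_w - width) // 2, (canvas_h - height) // 2)
    return (canvas_w, canvas_h), offset


def pad_image(src_path: str, dst_path: str, scale: float = 2.0) -> None:
    """Write a PNG with the original centered on a transparent canvas."""
    from PIL import Image  # Pillow, imported here so the helper above needs no dependencies

    original = Image.open(src_path).convert("RGBA")
    canvas_size, offset = padded_canvas(original.width, original.height, scale)
    canvas = Image.new("RGBA", canvas_size, (0, 0, 0, 0))  # fully transparent
    canvas.paste(original, offset)
    canvas.save(dst_path, format="PNG")
```

Calling `pad_image("resort.jpg", "resort_padded.png")` (hypothetical file names) would produce the padded file to upload.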

Add Object Inpainting with DALL-E

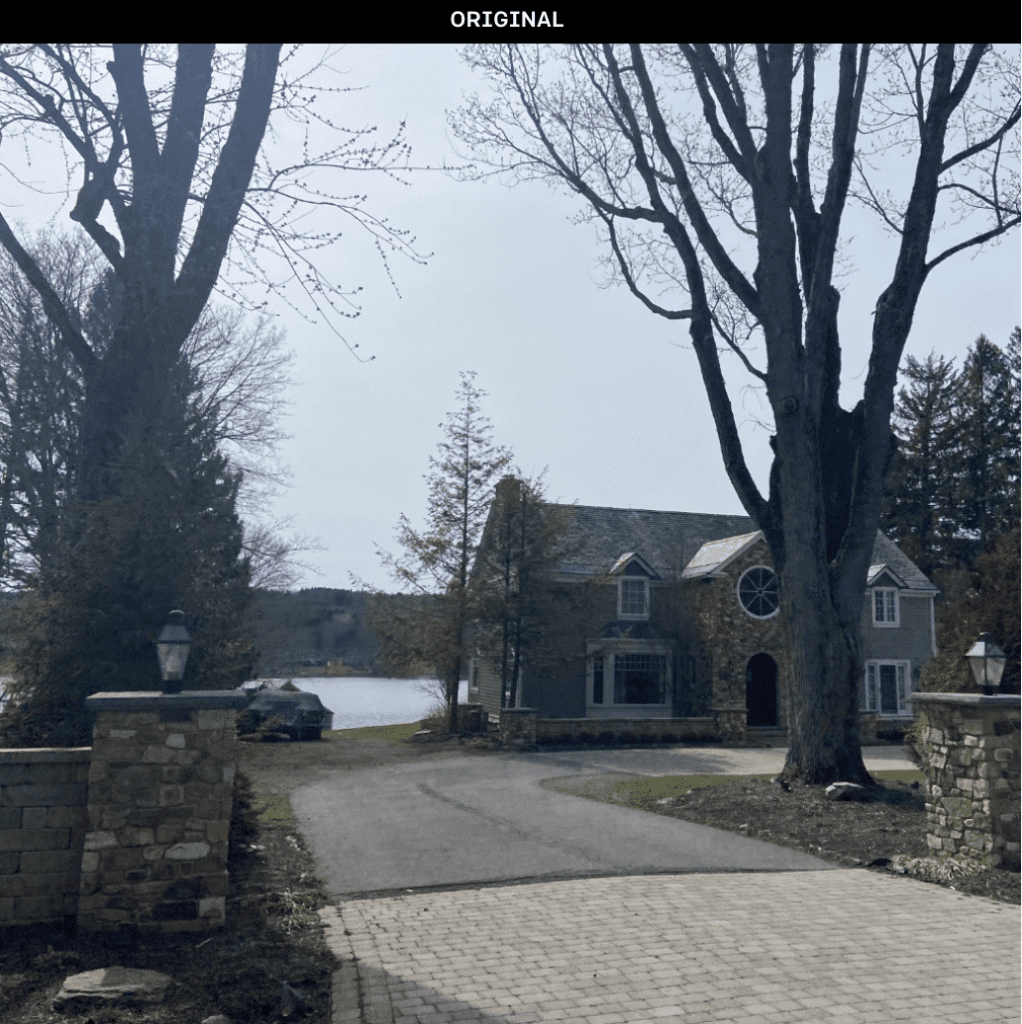

After messing around with environments, I decided to use the inpaint upload feature as it was intended: to fill in part of an image with an object. I found a picture I took of a house near a lake and figured I could add a garage to it. I uploaded the original high-quality image, erased a portion of it with the inpainting tool, typed 'a garage for cars', and waited for the results.

The results were mixed, but I think it's definitely possible to get some interesting results with this technique, and I was very impressed by the quality of the outputs. Whether they're used for an architectural mockup or personal curiosity, this is some impressive stuff.
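I did all of this in the DALL-E web editor, but the same erase-and-prompt workflow can be roughly approximated in code. The sketch below is an illustration, not what I actually ran: it assumes the Pillow and `openai` Python packages, an `OPENAI_API_KEY` in the environment, and a square PNG as input. The helper names (`clamp_box`, `make_mask`, `inpaint`) and the rectangle coordinates are hypothetical. The transparent area of the mask marks the region to regenerate.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]  # left, top, right, bottom in pixels


def clamp_box(box: Box, width: int, height: int) -> Box:
    """Clip the erase rectangle so it stays inside the image."""
    left, top, right, bottom = box
    left = max(0, min(left, width))
    right = max(0, min(right, width))
    top = max(0, min(top, height))
    bottom = max(0, min(bottom, height))
    if right <= left or bottom <= top:
        raise ValueError("erase box is empty after clipping")
    return left, top, right, bottom


def make_mask(image_path: str, mask_path: str, box: Box) -> None:
    """Save a copy of the image with the erase rectangle made transparent."""
    from PIL import Image  # Pillow, imported lazily

    image = Image.open(image_path).convert("RGBA")
    box = clamp_box(box, image.width, image.height)
    mask = image.copy()
    mask.paste((0, 0, 0, 0), box)  # fill the rectangle with transparent pixels
    mask.save(mask_path, format="PNG")


def inpaint(image_path: str, mask_path: str, prompt: str) -> str:
    """Request one edited image and return its URL."""
    from openai import OpenAI  # official OpenAI package, imported lazily

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    with open(image_path, "rb") as image_file, open(mask_path, "rb") as mask_file:
        result = client.images.edit(
            model="dall-e-2",
            image=image_file,
            mask=mask_file,
            prompt=prompt,
            n=1,
            size="1024x1024",
        )
    return result.data[0].url
```

With a mask covering the empty lot next to the house, `inpaint("house.png", "house_mask.png", "a garage for cars")` would mirror the prompt used above.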

Default Image Variations with DALL-E

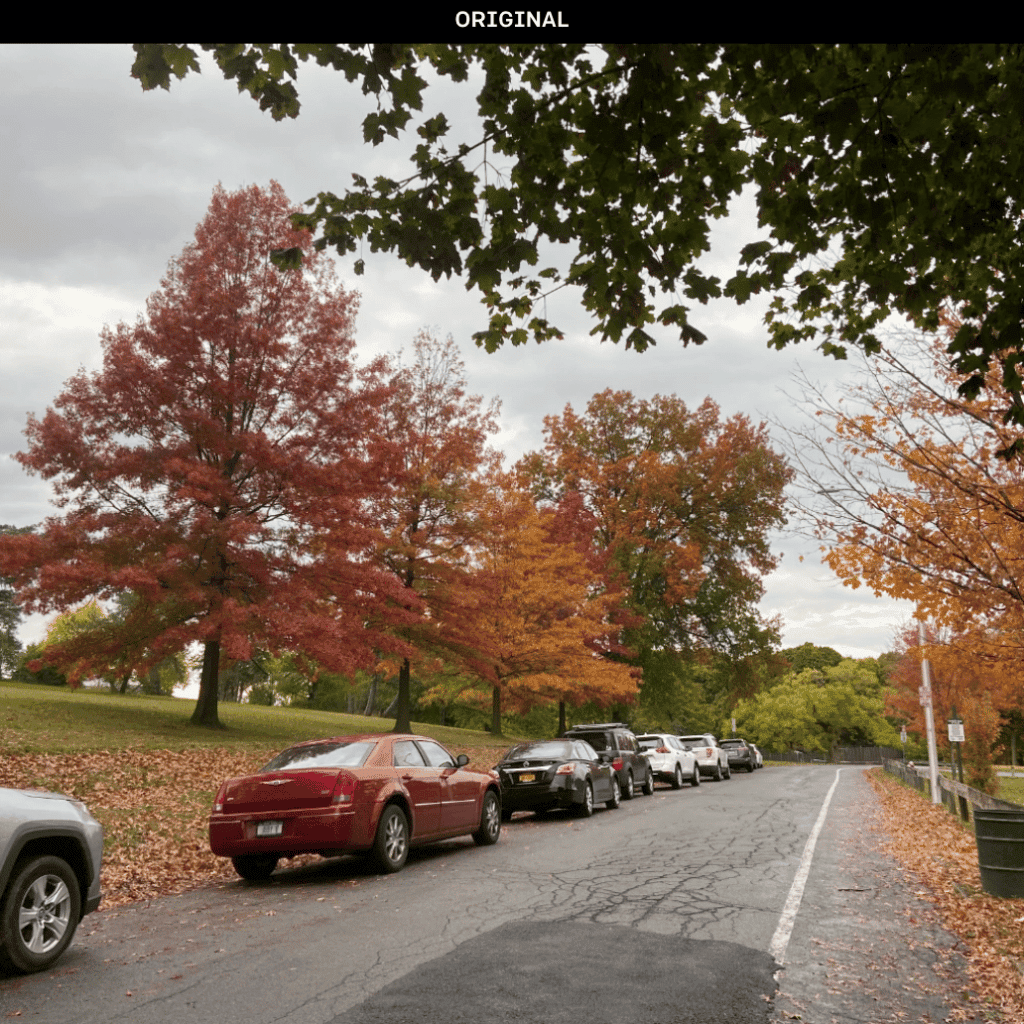

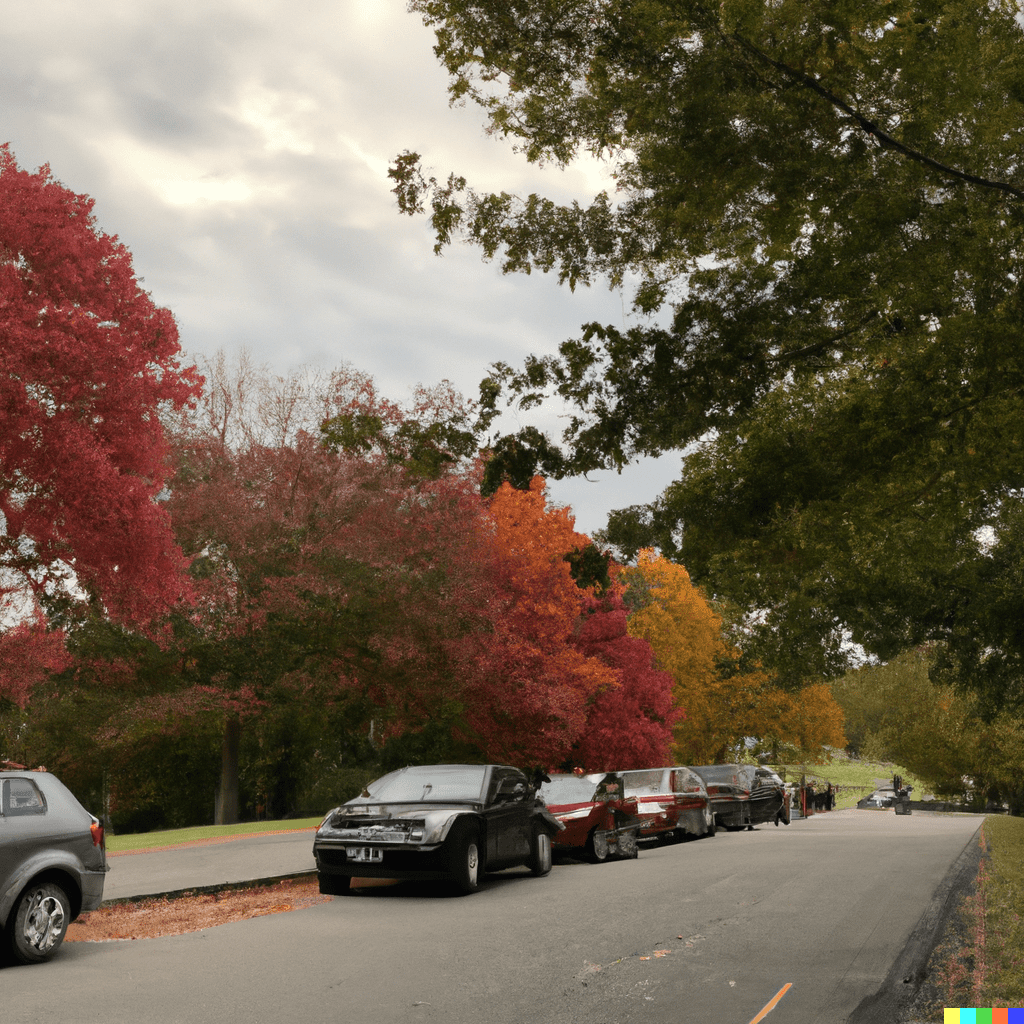

And lastly, I wanted to see what would happen if, instead of editing an image, I simply generated variations of a real photo. I took a picture of a road during autumn and let DALL-E do its thing.

Super cool, but still unnatural. I got a wide variety of outputs, some of which were completely different versions that still maintained the feel of the original image. You can see similar colors, car styles, and sky design, but also the discrepancies.
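Variations can also be requested programmatically. Again, this is only a sketch under assumptions, since I used the web interface: it relies on the Pillow and `openai` packages, an `OPENAI_API_KEY` in the environment, and a center crop to make the photo square before upload. The names `center_square` and `make_variations` are invented for the example.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # left, top, right, bottom in pixels


def center_square(width: int, height: int) -> Box:
    """Return the crop box for the largest centered square."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return left, top, left + side, top + side


def make_variations(photo_path: str, square_path: str, n: int = 4) -> List[str]:
    """Crop the photo to a square PNG, then request n variations of it."""
    from PIL import Image  # Pillow, imported lazily
    from openai import OpenAI  # official OpenAI package, imported lazily

    photo = Image.open(photo_path).convert("RGB")
    square = photo.crop(center_square(photo.width, photo.height))
    square.save(square_path, format="PNG")

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    with open(square_path, "rb") as image_file:
        result = client.images.create_variation(
            model="dall-e-2",
            image=image_file,
            n=n,
            size="1024x1024",
        )
    return [item.url for item in result.data]
```

Cropping first is a design choice for this sketch: it keeps the middle of the frame (the road, in my case) and drops the edges rather than squashing the whole photo.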

The Final Verdict

Overall, I'm extremely impressed with DALL-E's ability to edit and inpaint images. I think this tool has a lot of potential for both creative and practical uses, even though it isn't perfect. It's really fun to experiment with and push the creative boundaries of AI image creation. So if you're looking for a new way to edit pictures or simply want to generate some interesting variations on an image, give DALL-E inpainting a try.
