Midjourney vs DALL-E for Text Generation – Who Does It Better?

Midjourney V6 was just released, and one of its biggest improvements is text generation. Let's put it to the test by directly comparing its text outputs with the best in its space: DALL-E 3.

John Angelo Yap

Updated December 27, 2023

A robot showing its writing skills, generated with Midjourney V6


Let's take a quick history lesson and look back at the state of AI image generation a year ago. We couldn't reliably generate faces, DALL-E 2 had been released just a few months prior to mixed results, Midjourney V4 was starting to make some noise, and Stable Diffusion was leading the way with 2.0.

In just a year, AI art has become nearly perfect, except for two significant roadblocks: nuance and text generation.

Fast forward to today: DALL-E 3 arrived a few months back, and earlier this week, Midjourney V6 was finally released. Can these finally be the AI image generators that handle text perfectly? Let's find out.

Why Midjourney and DALL-E 3?

For a while now, DALL-E 3 has been the only AI image generator that can consistently create images with text. It's one of its main selling points, along with improved creativity and nuance. It's even showcased on the announcement page with this photo:

Recently, Midjourney unveiled its newest model: V6. And what do you know, they're also highlighting better nuance, creativity, and, most importantly, minor text drawing as their improvements. I've always avoided using text generation when comparing Midjourney against other generators because it would be unfair, but now that we're getting this feature, it only makes sense to pit it against the best.

Head-to-Head: Midjourney vs. DALL-E for Text Generation

Each comparison will focus on text, but we'll also analyze their nuance and creativity in applying the text. So, without further ado, here's a direct comparison of Midjourney and DALL-E 3 using the same prompts:

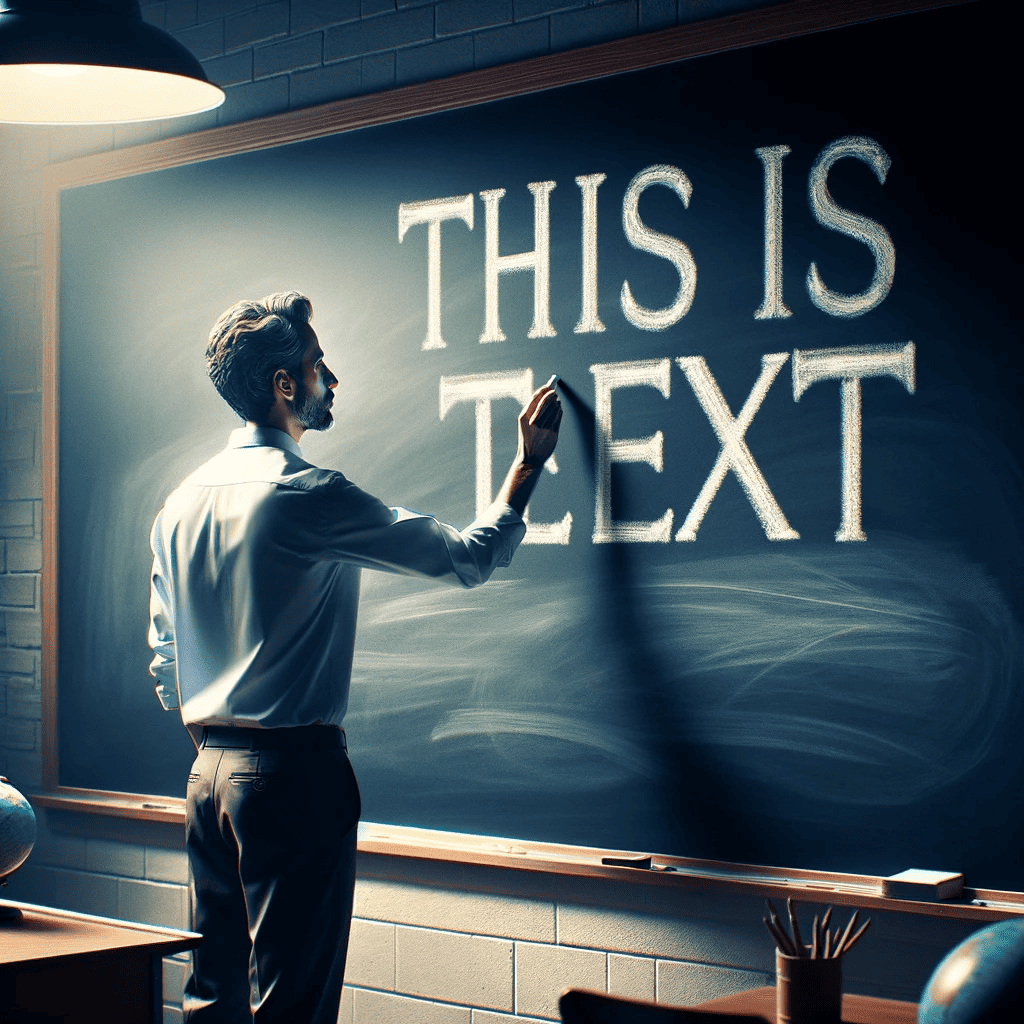

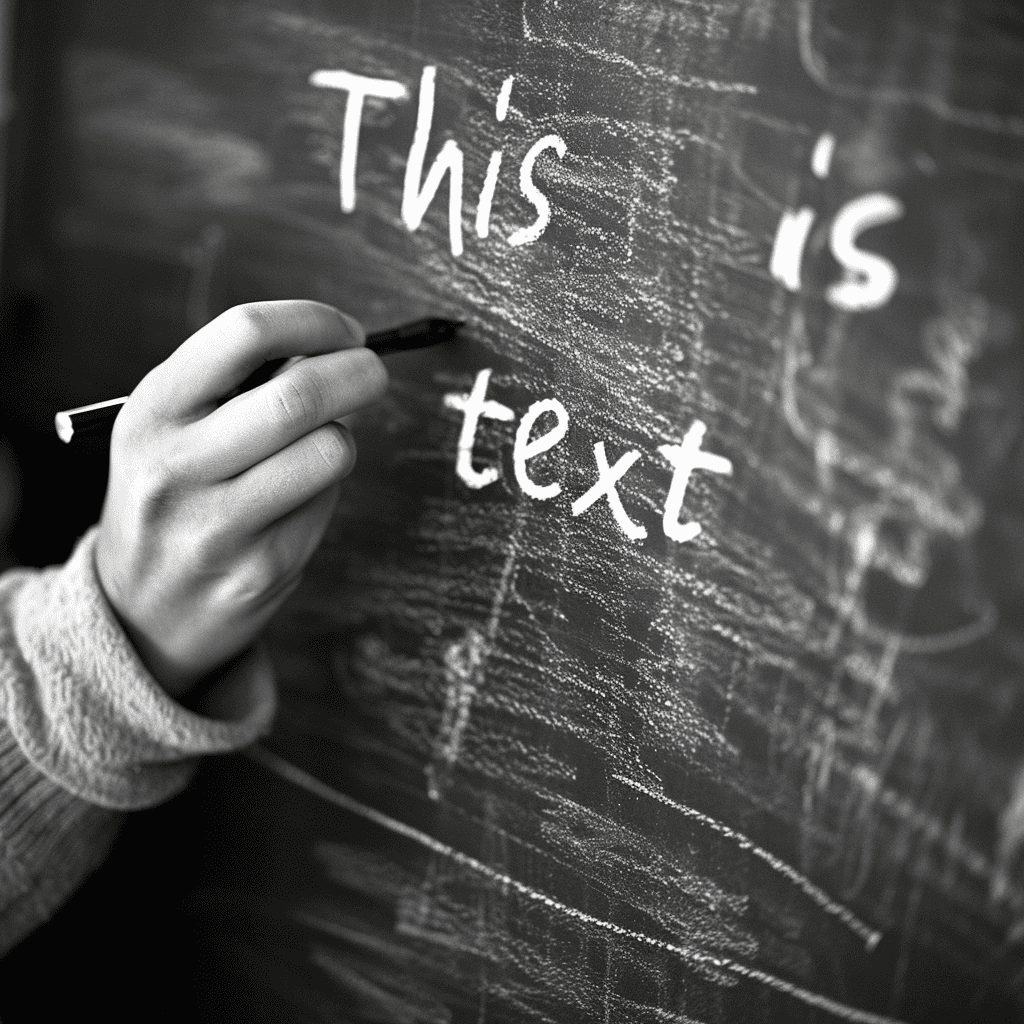

Simple Text

Text: "This is text."

In terms of the text itself, Midjourney performed better than DALL-E 3 because of a small mistake the latter made when writing the last part of the text. However, DALL-E's image is more cohesive, because the teacher in Midjourney's image is using a pen to write on a chalkboard.

Winner: Midjourney V6

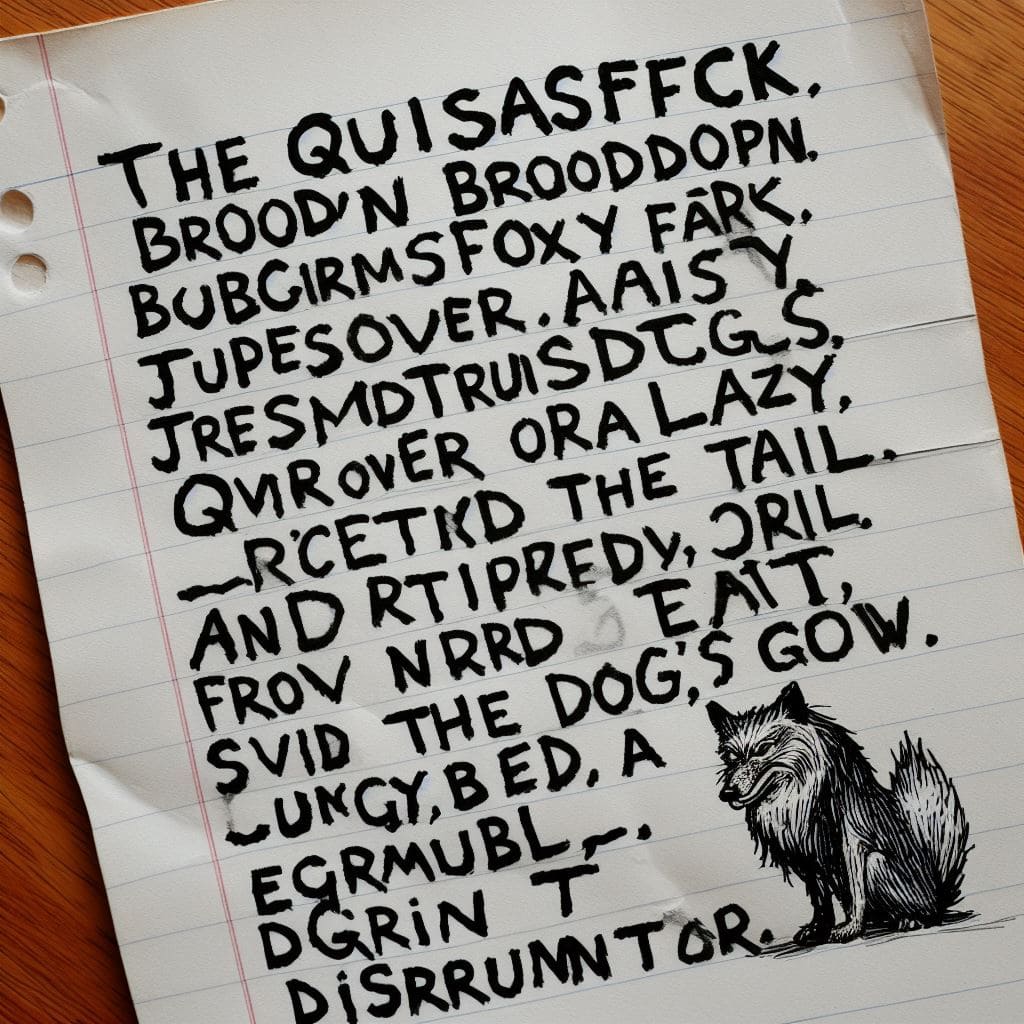

Long Text

Text: "The quick brown fox jumps over a lazy dog, and promptly tripped over the dog's tail, earning a disgruntled grumble."

Both tried to add their own flair to a simple prompt (a piece of paper with writing on it), but neither actually made readable text. This shows that AI image generators can write short phrases or sentences, but they worsen as you add more words.

Winner: None.

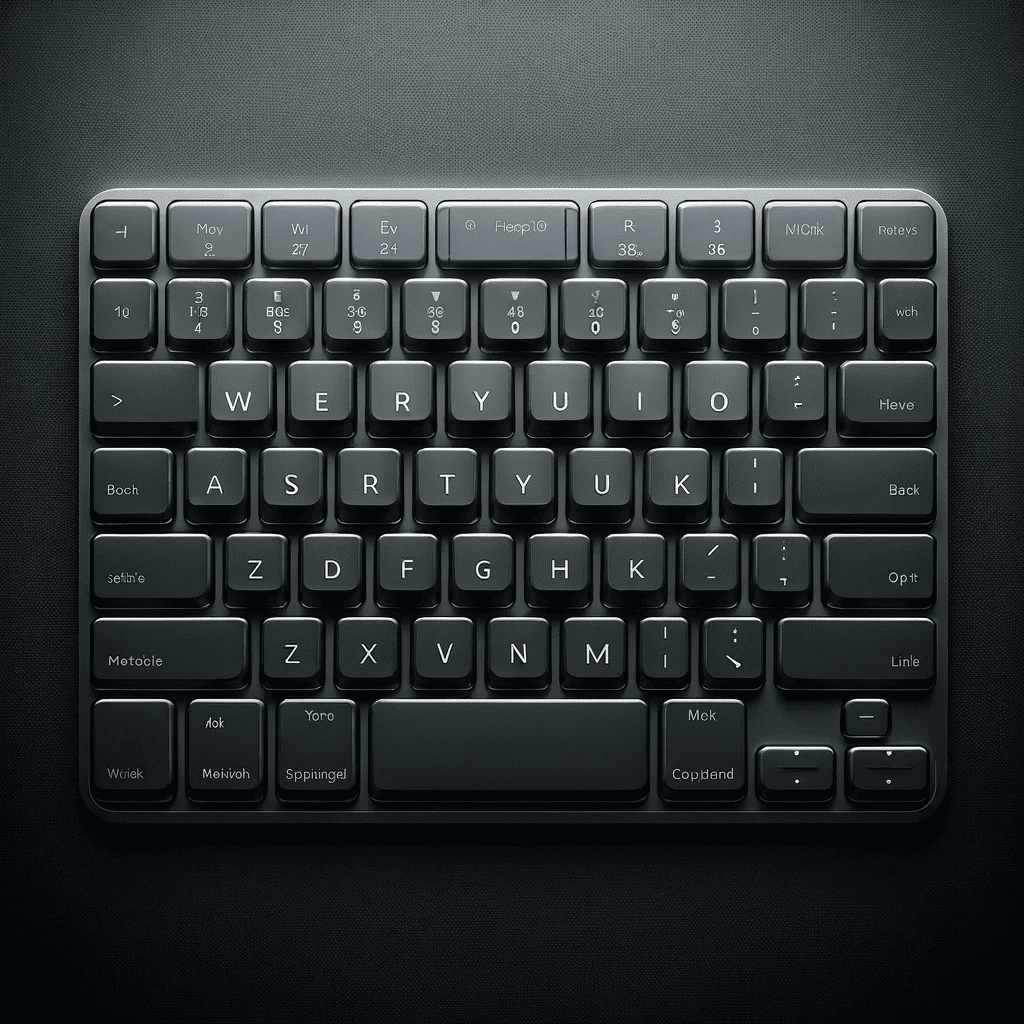

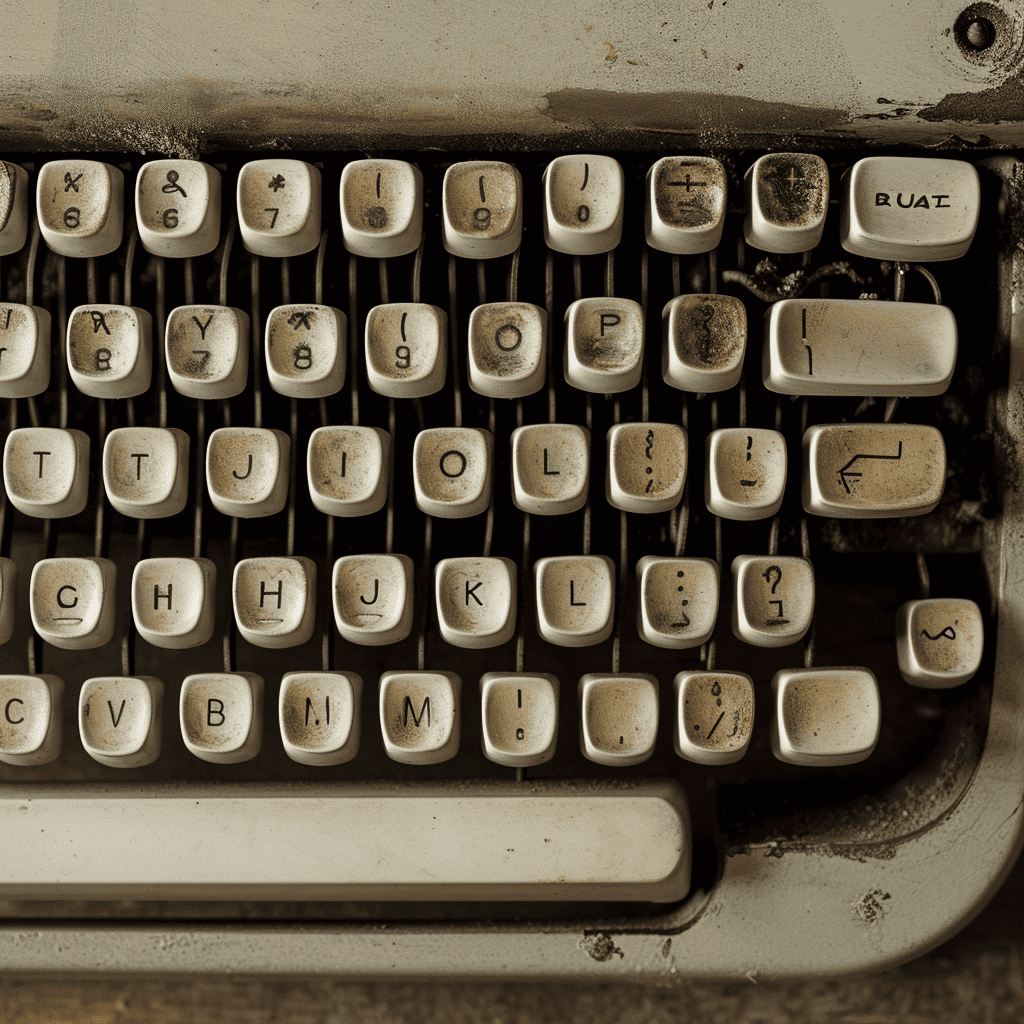

Keyboard

For this one, I didn't ask either model to write a specific word or sentence, but I tasked them to generate an accurate QWERTY keyboard. Obviously, neither is actually correct, but DALL-E couldn't even arrange the letters properly, whereas Midjourney somehow got the correct placement for more than half the letters.

Winner: Midjourney V6

Logo

Text: "Matcha."

Both of these images demonstrate a great understanding of my original prompt (a green coffee mug logo) and showcase creativity. There's nothing wrong with the text in either image, and in both cases it matches the art style each generator chose for its logo.

Winner: Tie round.

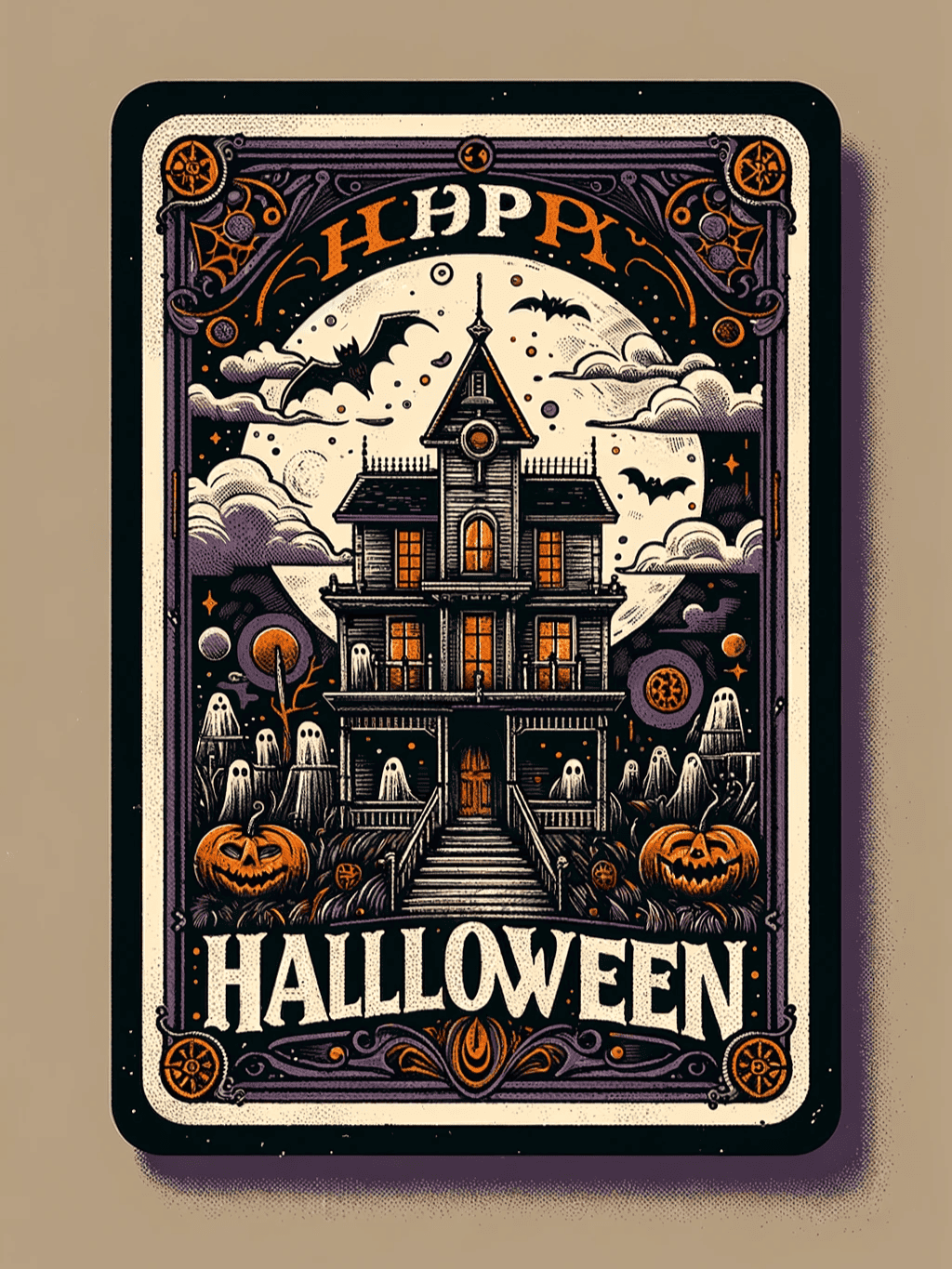

Postcards

Text: "Happy Halloween."

As AI image models evolve, I have to be extremely nitpicky with how I judge their text generation prowess. Case in point: I would love to make this a tie round, but the minor mistakes in DALL-E's output (triple Ls in "Halloween" and inconsistent coloring in "Happy") prevent me from doing so.

I will say this, though: I prefer DALL-E's postcard over Midjourney's.

Winner: Midjourney V6

Signs

Text: "Bacon and Eggs."

This is a clear win for DALL-E. Midjourney V6 tried its best, but the unnecessary and out-of-place yellow "and" sign stops this round from becoming a tie.

DALL-E also shows amazing nuance this round by turning "and" into an ampersand and creating a separate "Diner" neon sign without me asking. It's not just readable; it's also creative, unique, and immersive.

Winner: DALL-E 3

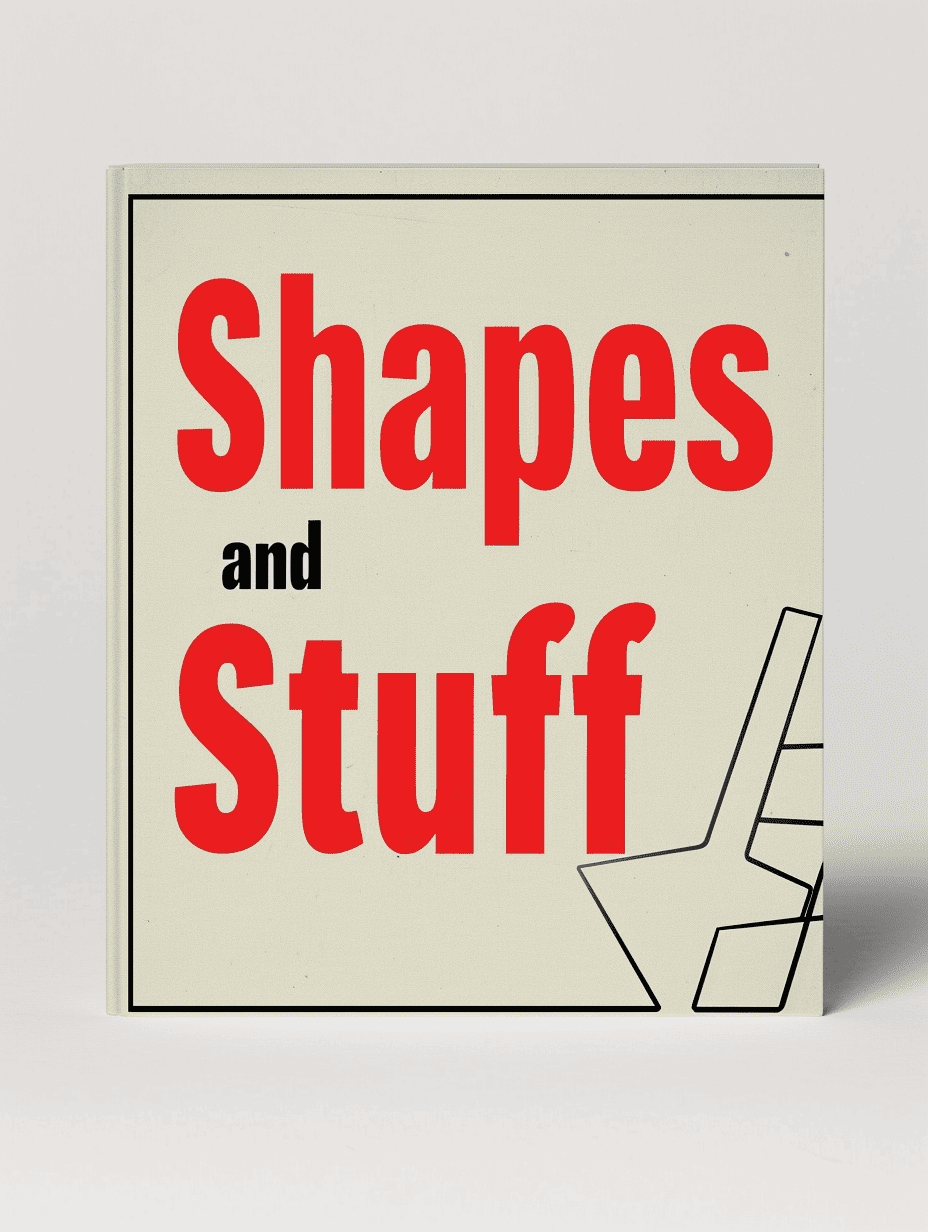

Book Covers

Text: "Shapes and Stuff."

I'll admit: DALL-E 3 created a much better book cover than V6. However, the book title generated by DALL-E has far too many mistakes, so I have to give this point to Midjourney, which perfectly rendered "Shapes and Stuff" in a consistent font. V6's cover design also showcases its improved comprehension by highlighting the text's keywords.

Winner: Midjourney V6.

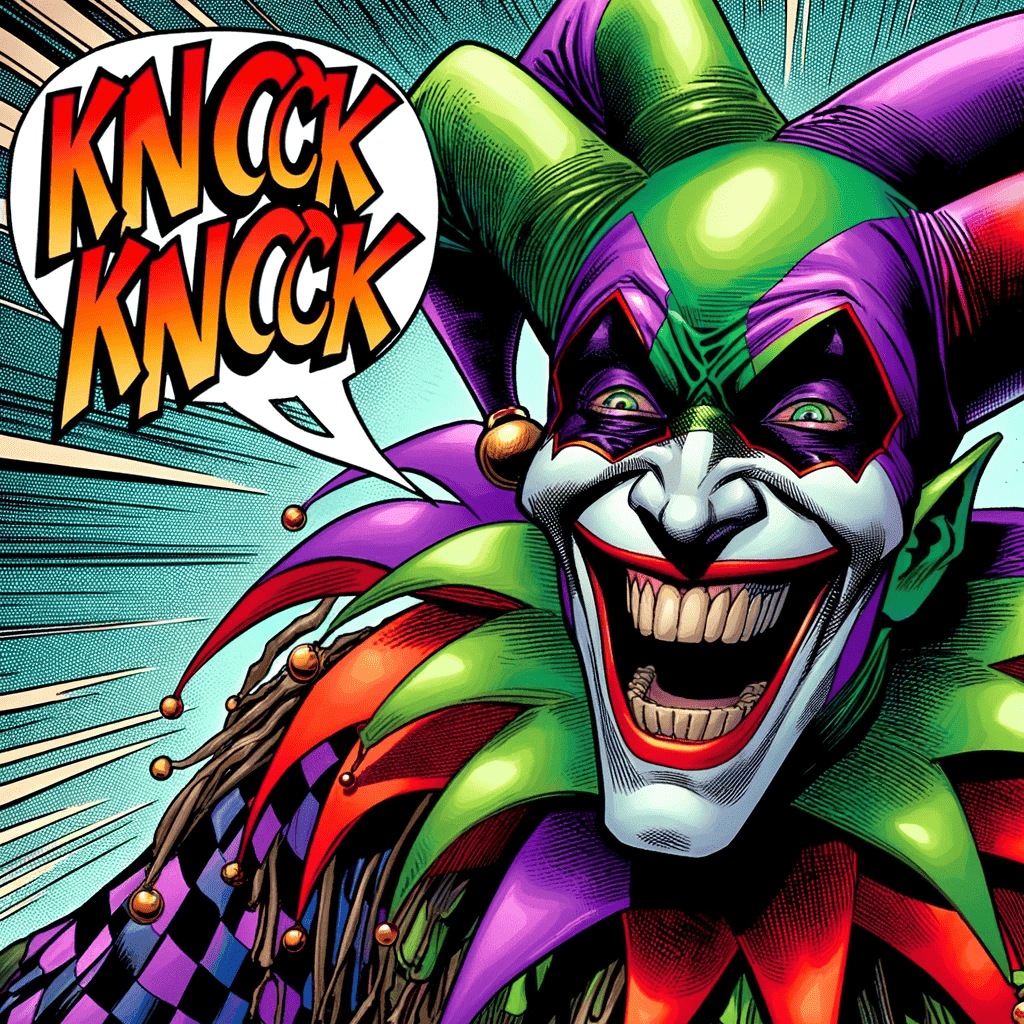

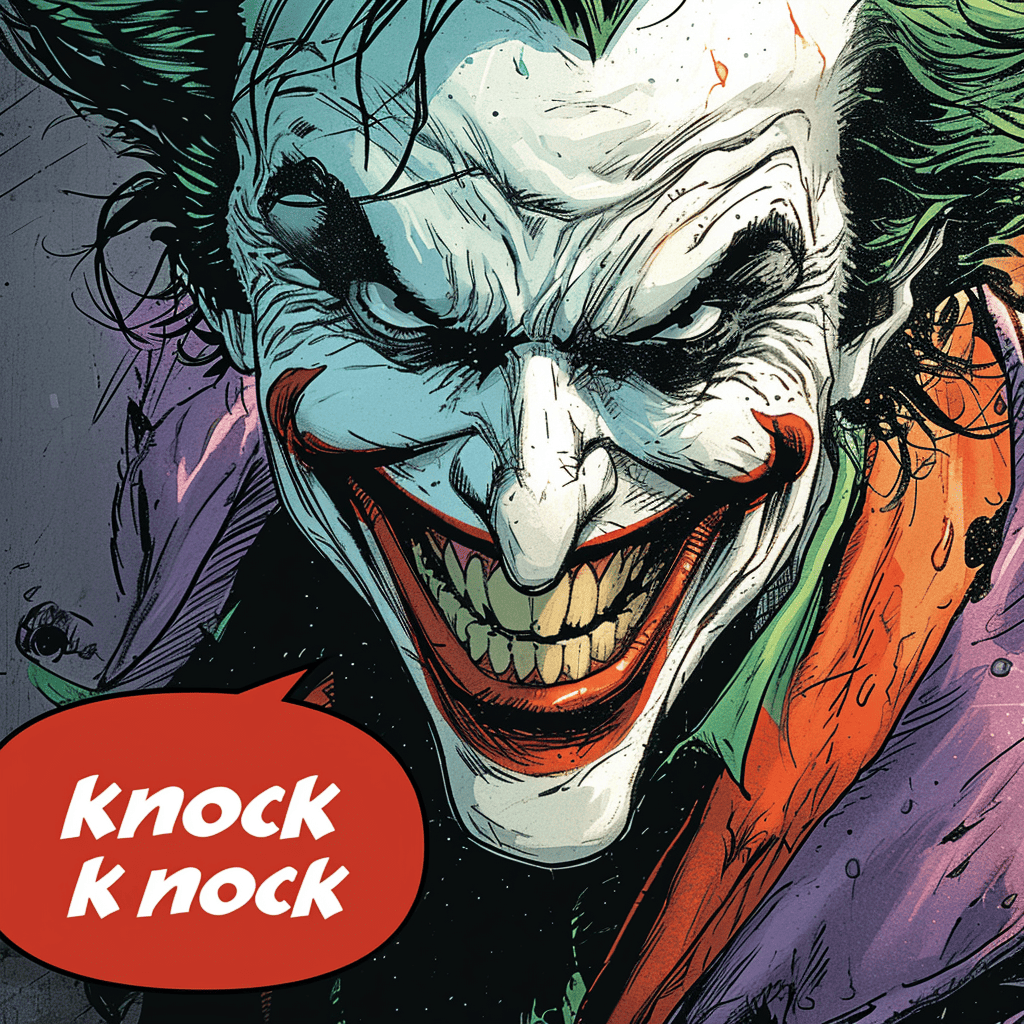

Comic Panel

Text: "Knock knock!"

Midjourney V6 and DALL-E 3 both made minor mistakes in writing the text. Since both of these are still readable and their artwork is amazingly done, I'm declaring this round another tie.

Winner: Tie round.

Surreal Settings

Text: "To infinity"

A little background: my prompt for this round explicitly stated that the text should be composed of stars. I said the focus would be on the text itself, and Midjourney rendered it more accurately this round. Still, DALL-E's minor mistake won't stop me from awarding it the point, because it did, in fact, create the text using stars.

Winner: DALL-E 3

The Final Tally and Observations

| Round | DALL-E 3 | Midjourney V6 |
| --- | --- | --- |
| Simple Text | Almost perfect text, and showcases a high level of nuance and creativity. | Perfect text, and showcases a good level of nuance and creativity. |
| Long Text | Unreadable text. | Unreadable text. |
| Keyboard | Letters aren't placed in the right order. | Around half of the letters are placed in the correct order. |
| Logo | Perfect text, and showcases a high level of nuance and creativity. | Perfect text, and showcases a high level of nuance and creativity. |
| Postcards | Almost perfect text, and showcases a high level of nuance and creativity. | Perfect text, and showcases a high level of nuance and good creativity. |
| Signs | Perfect text, and showcases an incredibly high level of nuance and creativity. | Almost perfect text with a noticeable mistake. Showcases a good level of nuance and creativity. |
| Book Covers | A good attempt with a few noticeable mistakes. Showcases a great level of creativity. | Perfect text, and showcases a good level of nuance and creativity. |
| Comic Panels | Almost perfect text, and showcases an incredibly high level of nuance and creativity. | Almost perfect text, and showcases an incredibly high level of nuance and creativity. |
| Surreal Settings | Almost perfect text, and showcases a high level of nuance and creativity. | Perfect text but shows low understanding of the prompt. |
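If you'd rather count the rounds than take my word for it, here's a minimal Python sketch that tallies the winners declared in each section above. The dictionary and function names are my own and purely illustrative; the only real data in it is the list of round verdicts from this article.

```python
from collections import Counter

# Round verdicts exactly as declared in the sections above.
# "Tie" and "None" are kept as separate outcomes on purpose.
ROUND_WINNERS = {
    "Simple Text": "Midjourney V6",
    "Long Text": "None",
    "Keyboard": "Midjourney V6",
    "Logo": "Tie",
    "Postcards": "Midjourney V6",
    "Signs": "DALL-E 3",
    "Book Covers": "Midjourney V6",
    "Comic Panel": "Tie",
    "Surreal Settings": "DALL-E 3",
}


def tally(winners):
    """Count how many rounds ended with each outcome."""
    return Counter(winners.values())


if __name__ == "__main__":
    # Print outcomes from most to least frequent.
    for outcome, count in tally(ROUND_WINNERS).most_common():
        print(f"{outcome}: {count}")
```

Keeping ties and the no-winner round as their own outcomes, rather than splitting them between the two models, mirrors how I scored the rounds.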

One thing I noticed during this testing is that DALL-E 3 appears to make text errors more often than Midjourney. On the other hand, Midjourney tends to show less creativity and nuance when a prompt specifically asks for text. I believe V6 is sacrificing a portion of its creativity when fed prompts that explicitly focus on text generation.

Wrapping Up

This head-to-head was a lot closer than I expected, but Midjourney V6 pulls through with a win: four rounds to DALL-E 3's two, with two ties and one round that neither model won. As I said earlier, though, V6's improved but still limited nuance keeps it from generating text while making full use of its creativity.

That said, this is to be expected because this isn't the final version of V6 yet. Midjourney is only going to get better from here as the team gradually improves the model behind it. There's no concrete news on DALL-E 4 yet, but we can expect similar improvements for that model too. For now, though, Midjourney is the one leading the space in text generation without a doubt.

That's it for this direct comparison. If you're looking for more articles about V6 and DALL-E 3, I highly suggest reading this article. Good luck!
