How To Check If Something Was Written with AI (ChatGPT)

AI is here and not going anywhere anytime soon. Many have started questioning the authenticity of what they're reading and want to know if a human or AI wrote it. While AI writing detection tools aren't 100% accurate, you can combine a few methods to help figure out the origin of what you are reading.

Justin Gluska

Updated December 26, 2025

AI vs. Human Writing, Generated with Midjourney


All it takes is a few tries for users to realize that AI detection in 2024 still isn’t perfect.

It’s been years since the release of ChatGPT, and with it came AI-written content spam and misuse in education. It's getting harder and harder to tell which pieces of writing have actually been written by people like you and me. When even TurnItIn AI detection is having a tough time, you know it’s high time for a change.

Beyond LLMs getting smarter, people are getting smarter about bending the rules too. Undetectable AI and other AI-writing humanizers have been getting a lot of traction in 2024.

The point is: it won’t always be clear what is AI-written and what isn't, but there are tools that can help. After over a year of researching how to detect AI, here are my technical and non-technical methods for checking if something was generated using AI.

How To Tell If An Article Was Written With AI

Detecting AI-generated content requires multiple samples of writing and various tools, and it still involves an element of prediction. Please don’t rely on a single method of AI content detection to claim something was written with AI.

Even I still find myself getting stumped depending on the complexity of the AI used, especially as AI gets better. But here are tools and methods to help you spot an AI’s writing:

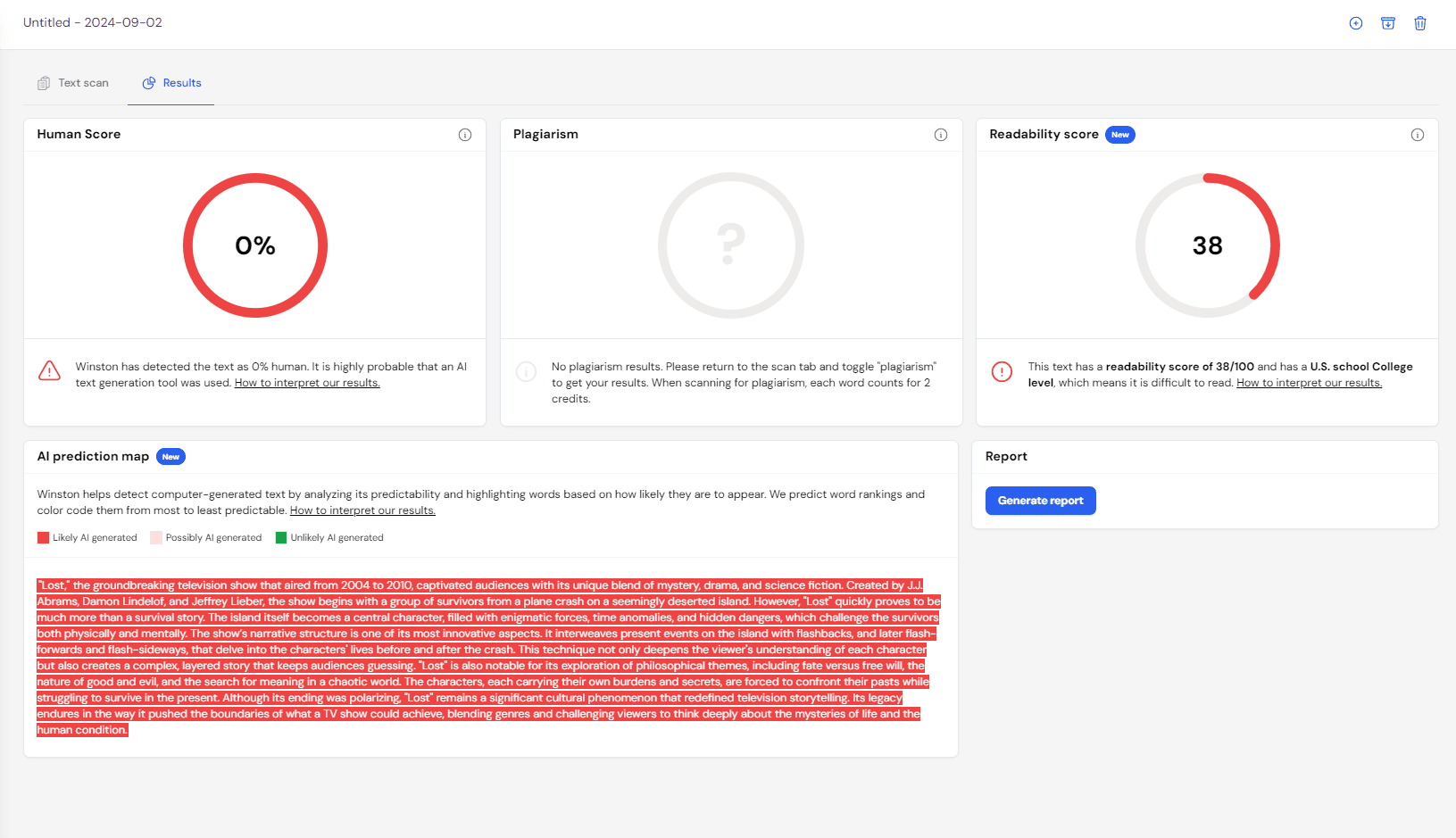

Winston AI Is Great for Depth

Earlier this year, we tested every well-known AI checker on the market. Turns out, there’s a reason why Winston AI is the most popular detection tool in 2024.

Winston AI claims 99.98% accuracy, something we put to the test. Although we didn’t quite reach those heights (after all, our sample size was much smaller than theirs), its true positive accuracy still ranked highest at 91.92%. This was 4% higher than the runner-up (Sapling) and 16% higher than third place (Copyleaks).

Winston is also one of the few AI detection tools that can detect AI humanizers like Undetectable AI (more on that later) consistently. This makes it a pretty useful tool against AI misuse in education and in the workplace.

All in all, I’d say that I feel more secure with Winston’s detection than any other tool. If it were free, this would 100% be my first recommendation.

Use Copyleaks AI Detector

Copyleaks is a free & easy AI detector. It tells you whether it believes something is AI-generated or human-written, with no extra fluff.

You input text and it’ll check for AI content in seconds. The best part: you can check up to 2000 pages worth of content. All for free!

They also have a free Chrome extension that allows you to check directly within your browser. Unlike the web-based platform, the extension can only check a maximum of 25,000 characters.

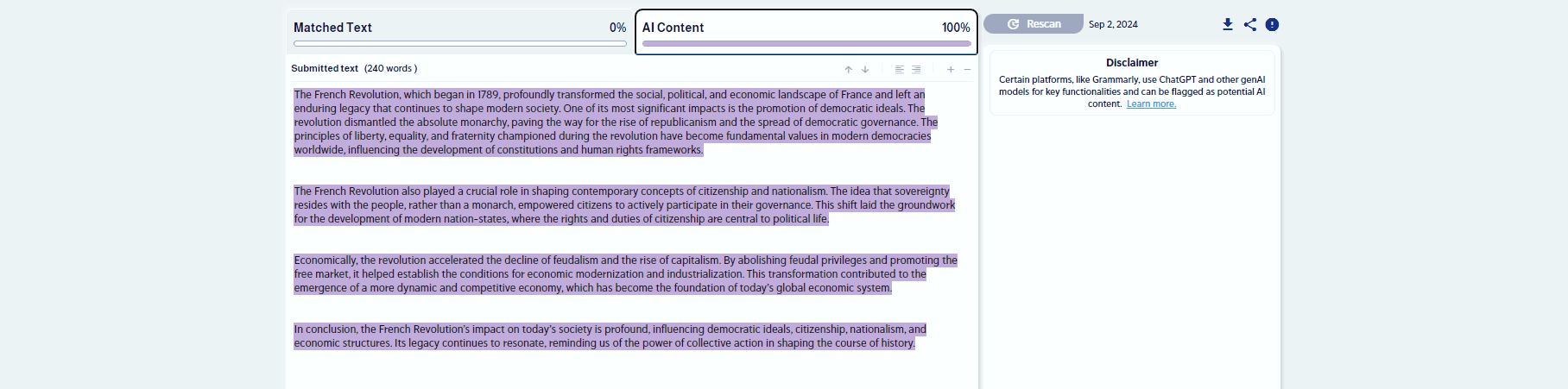

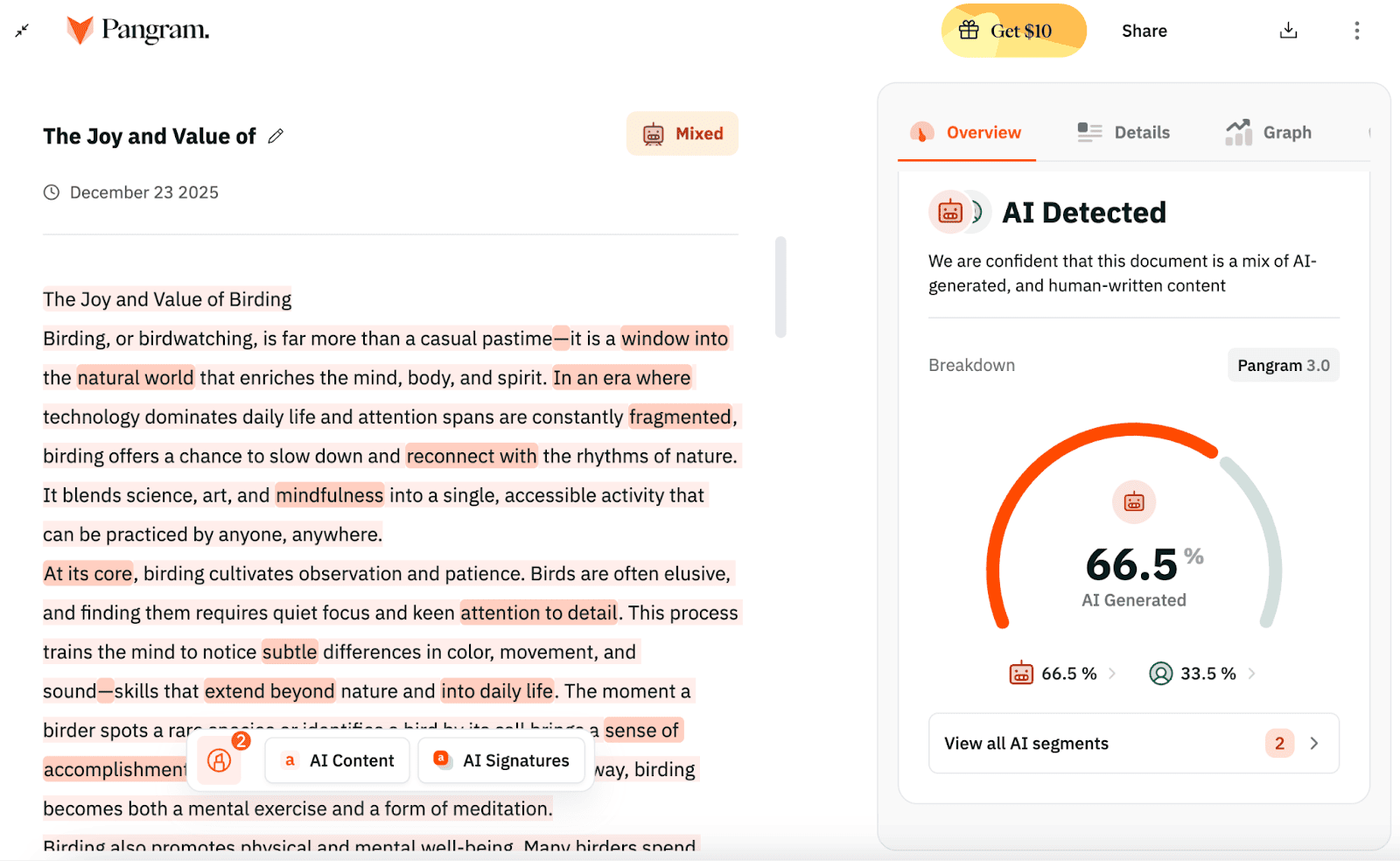

Pangram is the Best AI Detector for Teachers and Universities

I came across another AI detection tool, Pangram, and I have to say, I’m clearly not the only one who’s impressed with their platform.

Third parties like the University of Chicago and the University of Maryland have reviewed Pangram as one of the most proven, reliable, and accurate AI detection tools on the market.

The Pangram AI detector & plagiarism platform is trusted by thousands of teachers, publishers, and higher education institutions across the globe. And according to Pangram, they can detect short and long-form AI-generated content, with up to 99% accuracy.

That is insanely accurate for an AI-detector.

With more and more people writing co-authored content with heavy use of AI, Pangram is now one of the few AI detectors that can also detect AI-Assisted content.

And one of the best parts about Pangram? You can use their AI detection and plagiarism checker for free up to 4 times/day!

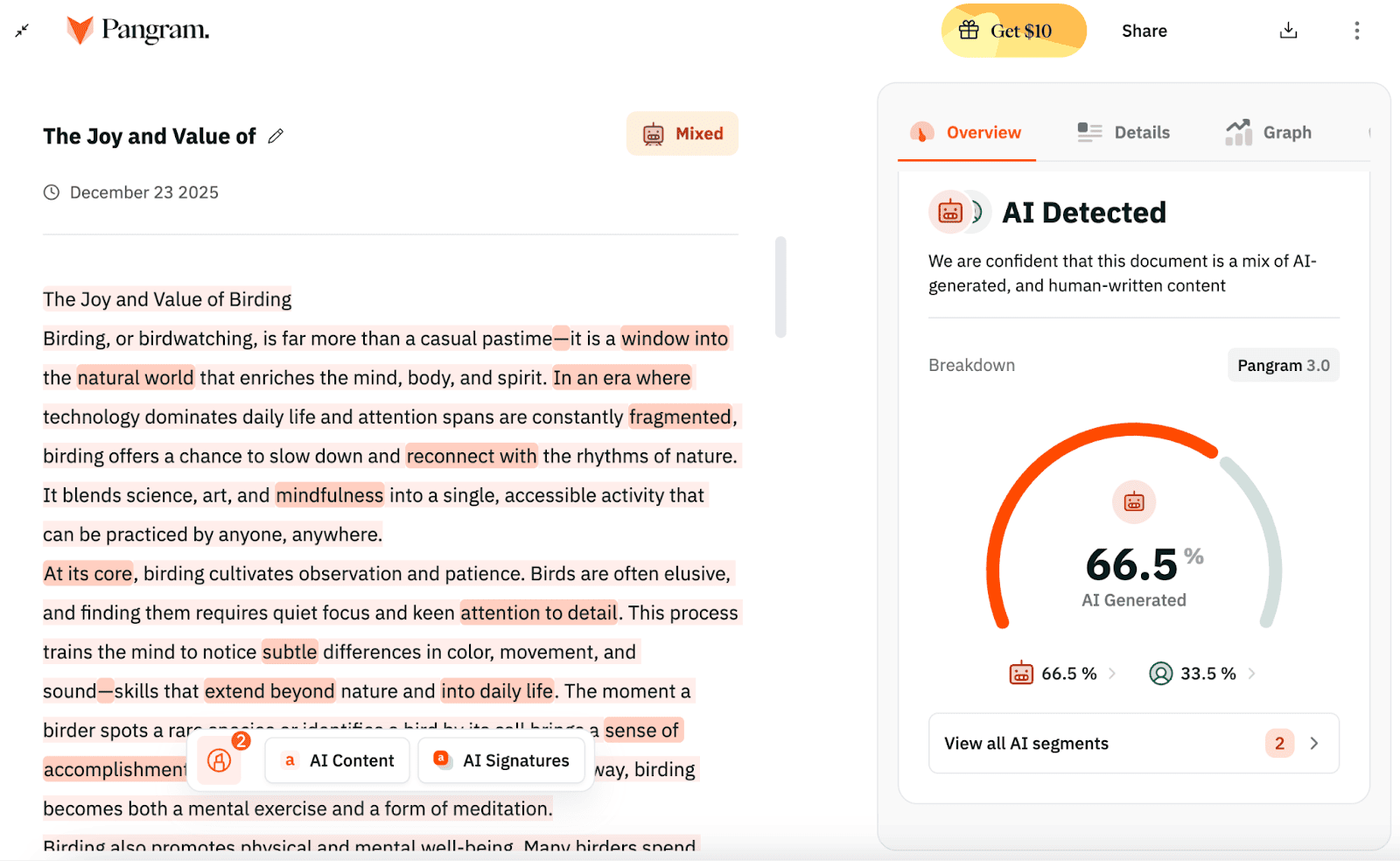

Utilize Undetectable AI's Multi-Detection Tool

Undetectable AI is my next suggestion to help predict if something was written with AI. The tool works by averaging the AI likelihood score from each of the AI detectors they feature (Sapling, GPTZero, etc).
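To make the averaging idea concrete, here is a minimal sketch of how several detector scores could be combined into one number. It does not call any real detector API. The scores are hypothetical values typed in by hand, and `average_ai_likelihood` is an illustrative name, not something Undetectable AI exposes.

```python
from statistics import mean


def average_ai_likelihood(scores: dict[str, float]) -> float:
    """Return the mean AI-likelihood (0.0 to 1.0) across several detectors."""
    if not scores:
        raise ValueError("need at least one detector score")
    for name, score in scores.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name} score {score} is outside the 0..1 range")
    return mean(list(scores.values()))


# Hypothetical scores copied by hand from each detector's results page.
example_scores = {"Sapling": 0.82, "GPTZero": 0.64, "Detector C": 0.91}
combined = average_ai_likelihood(example_scores)
print(f"Combined AI likelihood: {combined:.0%}")
```

A plain mean treats every detector as equally trustworthy, which is a simplification. If you trust one tool more than the others, a weighted average would be a reasonable variation.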

To use Undetectable's AI Checker, paste your sample of writing inside the input box & submit it for testing! If you’re interested, we already tested their AI detection tool so you don’t have to.

It’s also free to use until you hit the word limit, then it’ll ask you to make an account.

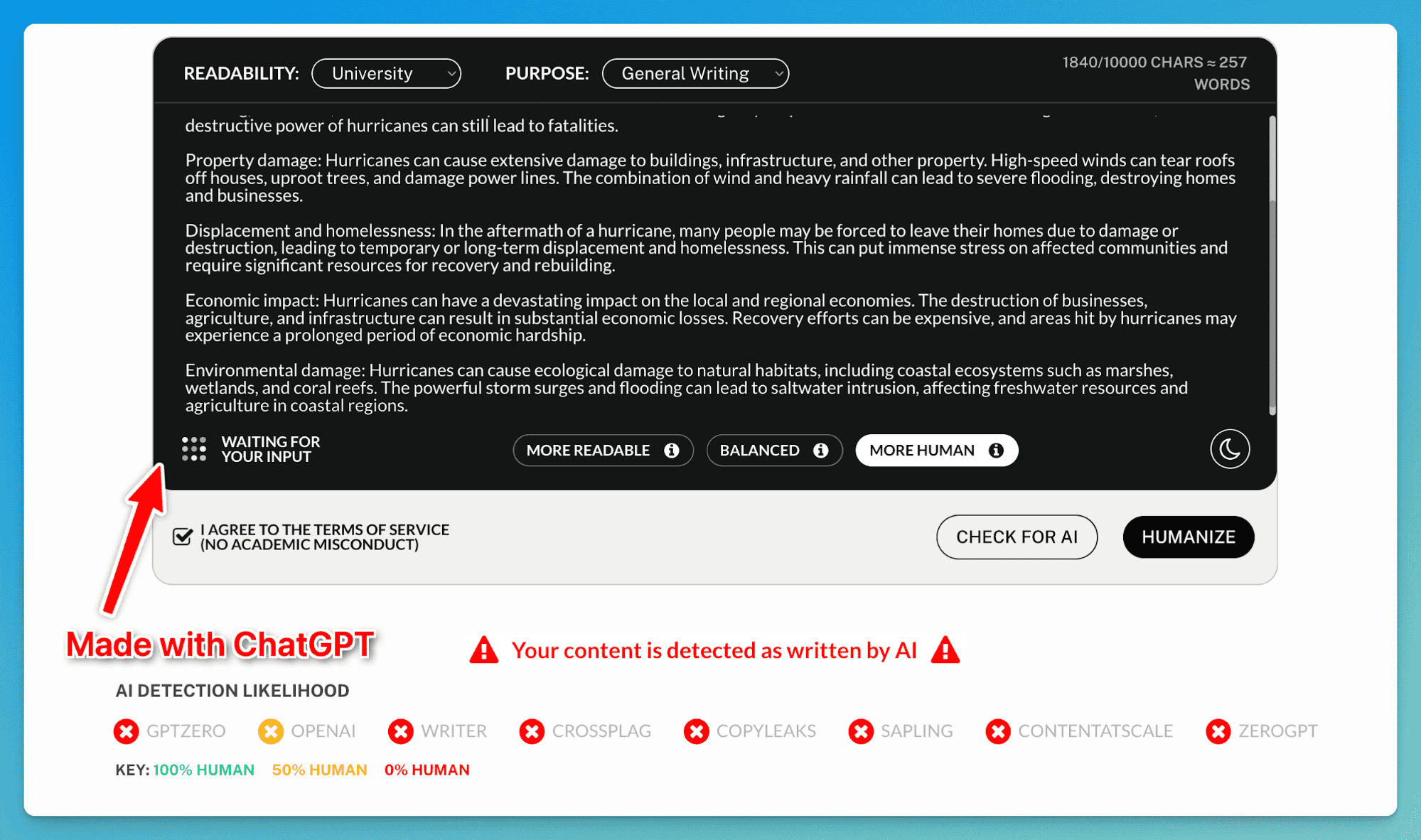

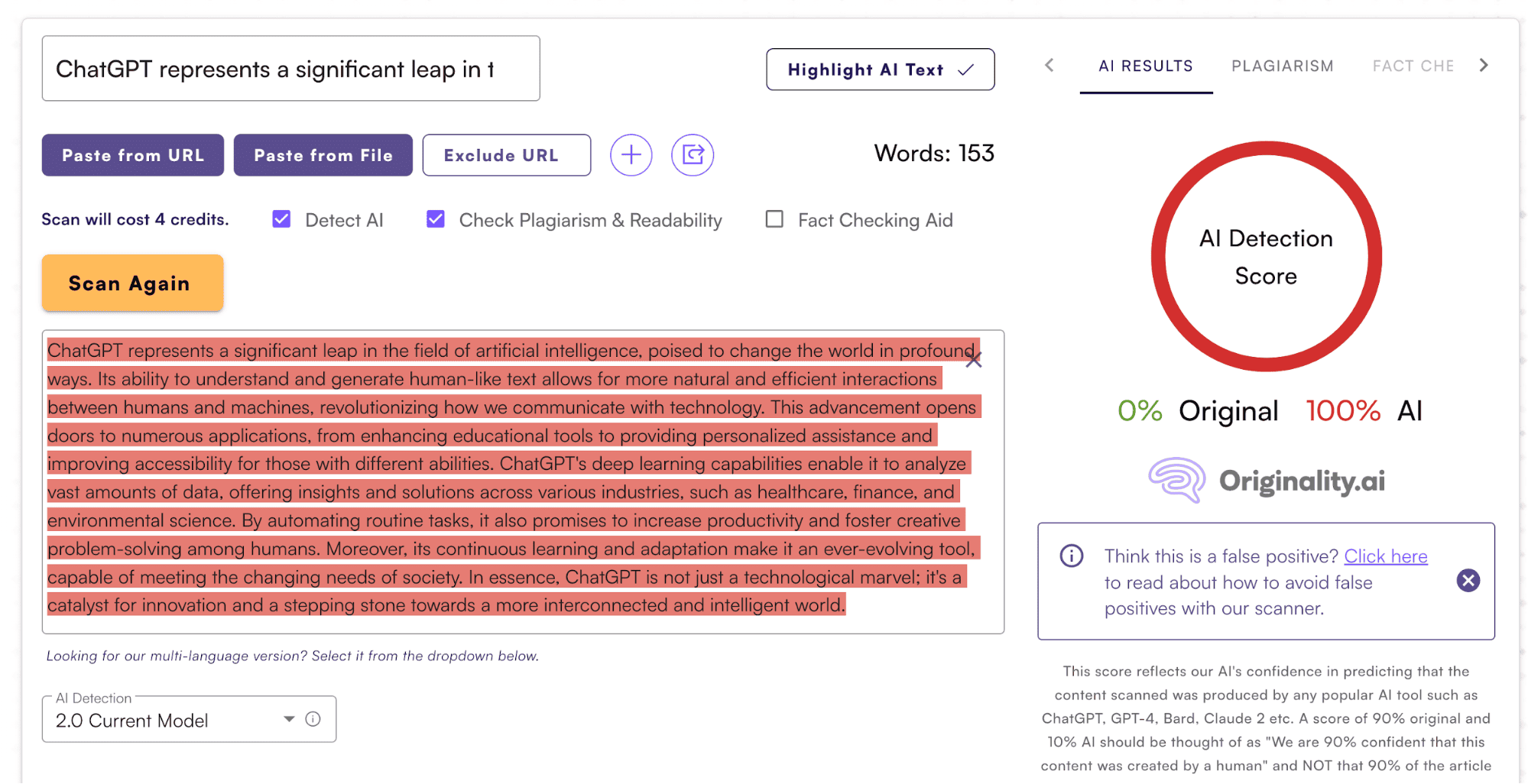

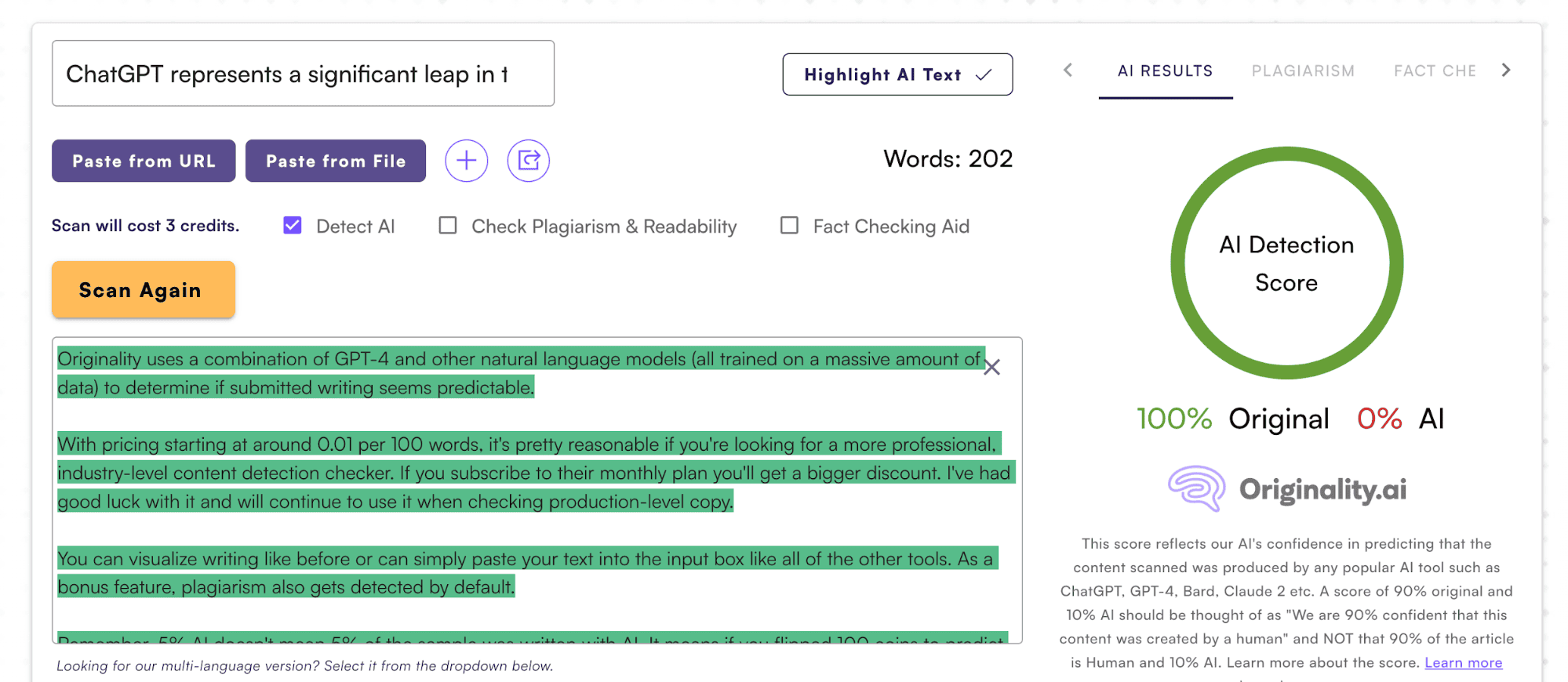

Originality.ai's Detector & Text Visualizer

If you want to go a step further than testing your article across various detection tools, you could use Originality AI to both check & visualize the writing progression. Originality is the harshest AI detection software I've ever used (take that as you wish).

The text visualizer feature is what sets it apart from many other AI writing detectors. If you are getting anything submitted to you through Google Docs, you can check the writing with Originality & then rebuild the article using their visualizer to see if it involved a lot of copy-pasting.

It looks something like this:

Combine this with their writing detection tool and you'll have some really good intuition as to the origins of your suspected writing. In the example above, I actually gave a task to a writer I hired and they used AI to generate about half of it.

You can see it clearly when things get copied and pasted before getting tweaked.

Originality uses a combination of GPT-4 and other natural language models (all trained on a massive amount of data) to determine if submitted writing seems predictable.

You can install their Chrome extension to test their AI detector tool on your writing. However, it’s limited to 5,000 words, as you are given only 50 free credits (1 credit scans 100 words).

They have 2 pricing options:

- $30 for a one-time fee, giving you 3000 credits and a 2-year expiry date.

- $14.95 monthly, providing you with 2000 credits. It also saves you about 25% and can be canceled anytime.

As a bonus feature, you can also fact-check information at 10 words per credit. Plagiarism also gets detected by default at 100 words per credit.
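If you want to sanity-check the "about 25%" figure yourself, the arithmetic is quick. This sketch uses only the prices and credit counts quoted above. The plan labels and function name are my own, and the prices may have changed since this was written.

```python
WORDS_PER_CREDIT = 100  # AI scan rate quoted above: 1 credit scans 100 words

# Prices and credit counts as quoted in this article.
plans = {
    "one-time": {"price_usd": 30.00, "credits": 3000},
    "monthly": {"price_usd": 14.95, "credits": 2000},
}


def cost_per_credit(plan: dict) -> float:
    """Price of a single credit in US dollars."""
    return plan["price_usd"] / plan["credits"]


one_time_rate = cost_per_credit(plans["one-time"])
monthly_rate = cost_per_credit(plans["monthly"])
savings = 1 - monthly_rate / one_time_rate

for name, plan in plans.items():
    words = plan["credits"] * WORDS_PER_CREDIT
    print(f"{name}: ${cost_per_credit(plan):.4f} per credit, {words:,} words of AI scanning")
print(f"Monthly saves about {savings:.0%} per credit")
```

The monthly plan works out to roughly three quarters of a cent per credit versus one cent on the one-time plan, which is where the 25% saving comes from.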

Remember, 5% AI doesn't mean 5% of the sample was written with AI. It's a confidence score: the detection tool estimates roughly a 5-in-100 chance that the sample was AI-generated.

Teachers have been confusing these percentage values, and it's ended up getting students in trouble, which hasn't been too good to hear.

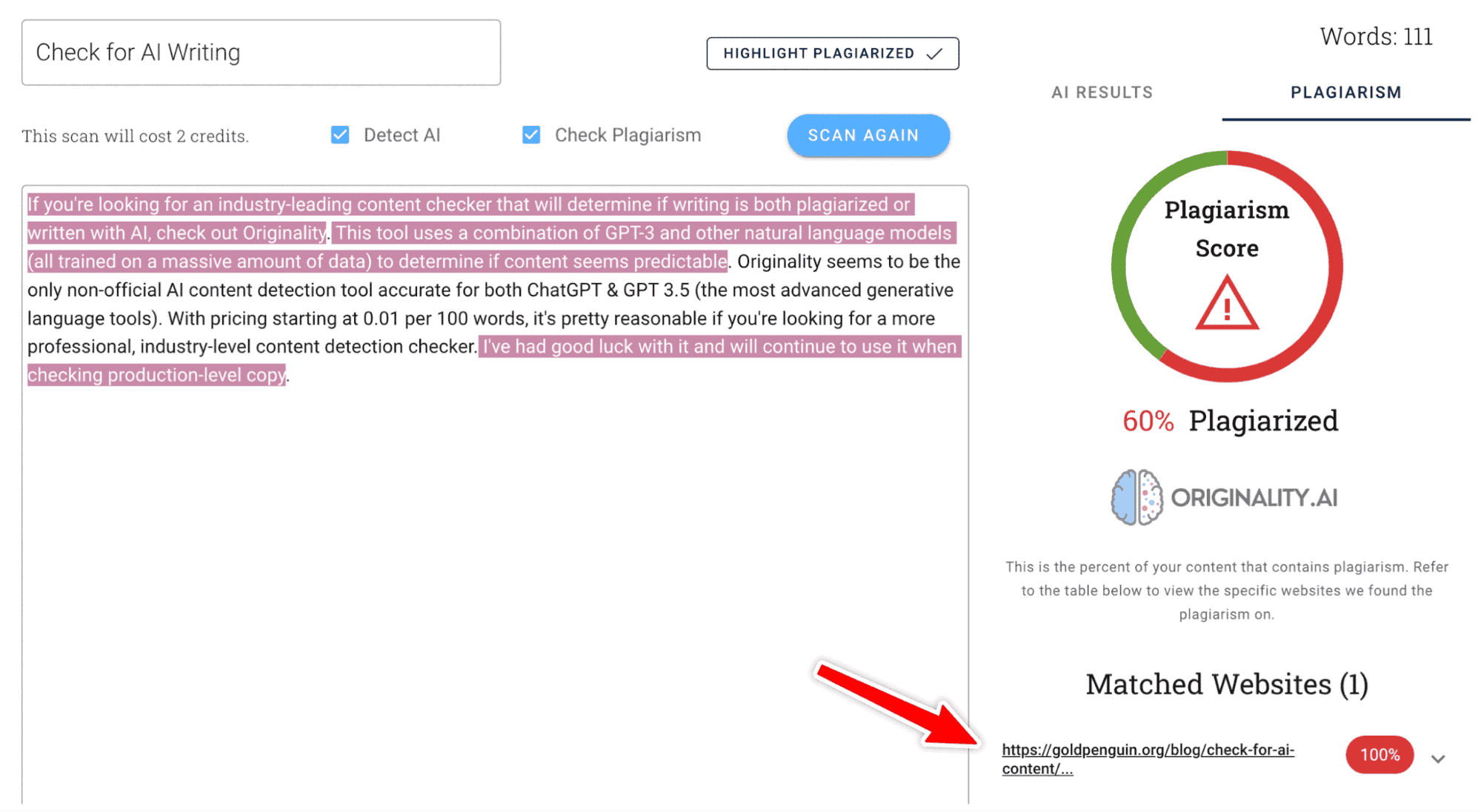

Regarding plagiarism, it's also very impressive. Originality was able to find the exact blog I "copied" the content from and marked the text as being copied from a website (this one!!!). For what it's worth, combining AI detection with a plagiarism checker is an additional measure to be even more confident about the origins of written content.

Originality has been my go-to tool for anyone looking to bulk test writing.

They will also keep your scans saved in your account dashboard for easy access in the future.

Acceptable Detection Scores

According to the CEO of Originality AI, their AI detector only reports the probability that a text was written by an AI or a human. So he suggests a range of acceptable detection scores depending on a company’s practice:

- Zero AI Usage: 65-90%+ Human

- AI-assisted Research: 50-75% Human

- Edited AI-generated Content: 50-60% Human
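If you review a lot of submissions, you could encode these ranges as a simple lookup. The sketch below is one possible reading, not Originality's own logic: it assumes the lower bound of each range is the minimum acceptable "% human" score and that anything above the range also passes. The policy keys and function name are made up for illustration.

```python
# Lower bound of each range in the list above, read as a minimum "% human" score.
# Assumption: scoring above the top of a range is also acceptable.
MIN_HUMAN_SCORE = {
    "zero_ai_usage": 65,
    "ai_assisted_research": 50,
    "edited_ai_content": 50,
}


def meets_policy(human_score: float, policy: str) -> bool:
    """Check a detector's "% human" score against a content policy."""
    if policy not in MIN_HUMAN_SCORE:
        raise KeyError(f"unknown policy: {policy}")
    return human_score >= MIN_HUMAN_SCORE[policy]


print(meets_policy(58, "zero_ai_usage"))         # False
print(meets_policy(58, "ai_assisted_research"))  # True
```

Treat a failed check as a prompt to look closer, not as a verdict.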

The longer the sample you input, the more reliable the detection tends to be. But reliability doesn't mean accuracy! Also, the more content you scan by the same writer, the better positioned you'll be to decide whether their writing is legitimate.

Just be careful, as some results will be false positives or false negatives. It's best to review a series of articles before making a call on a writer or service, which is far better than passing judgment on a single article or text snippet.

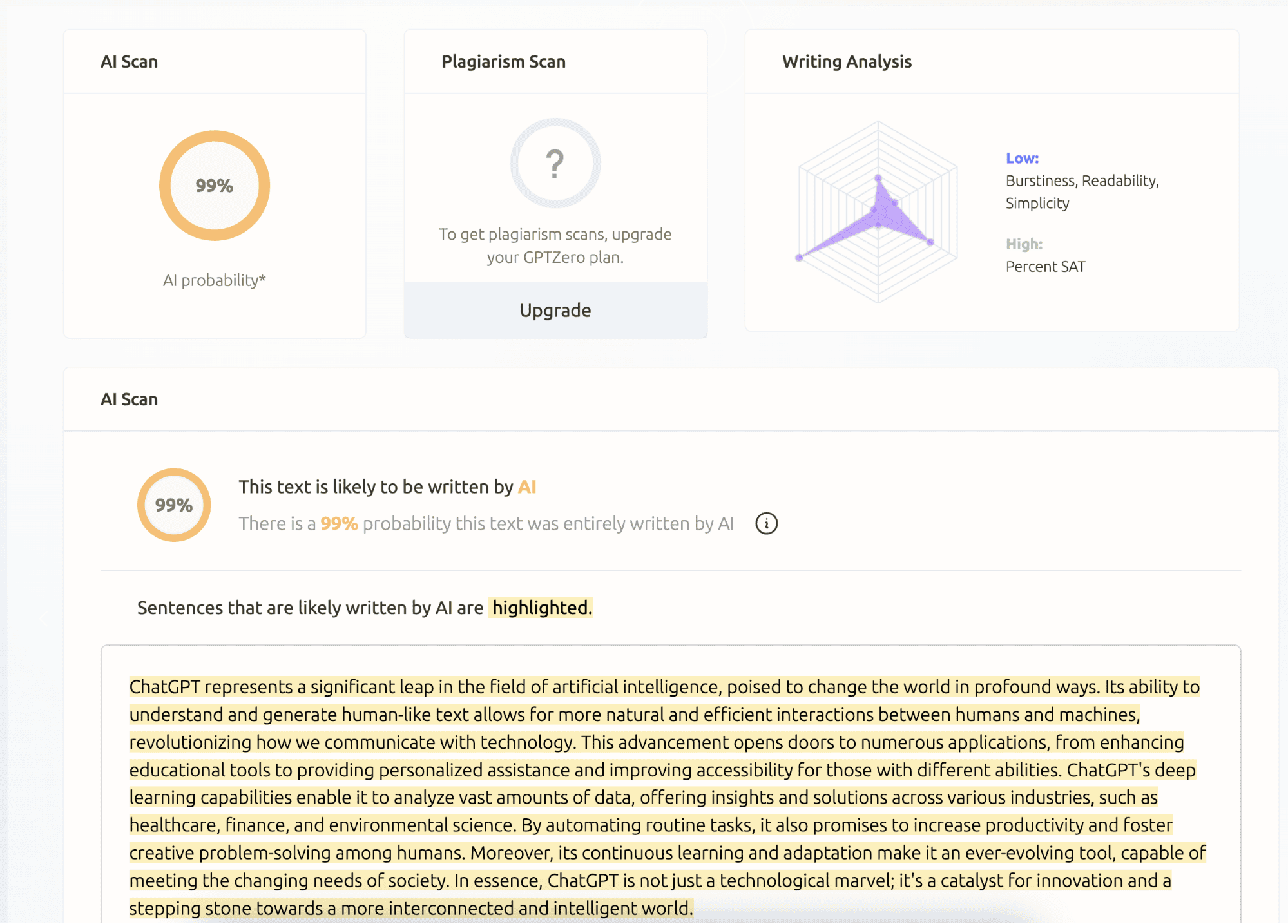

Run It Through GPTZero

I like GPTZero because they seem to be one of the only AI detection companies that really cares about what they flag. While they can't promise 100% accurate detection, they only tend to mark something as AI if they're confident about it. You can read our full review if you want to learn more.

They focus more on academic and educational writing, with a goal of being used in the classroom. The tool is run by a team of talented ML & software engineers and built on 7 "components" of tech, likely making it the most accurate and reliable AI detection tool that is publicly available today. You can also upload files to it, which makes it even more efficient.

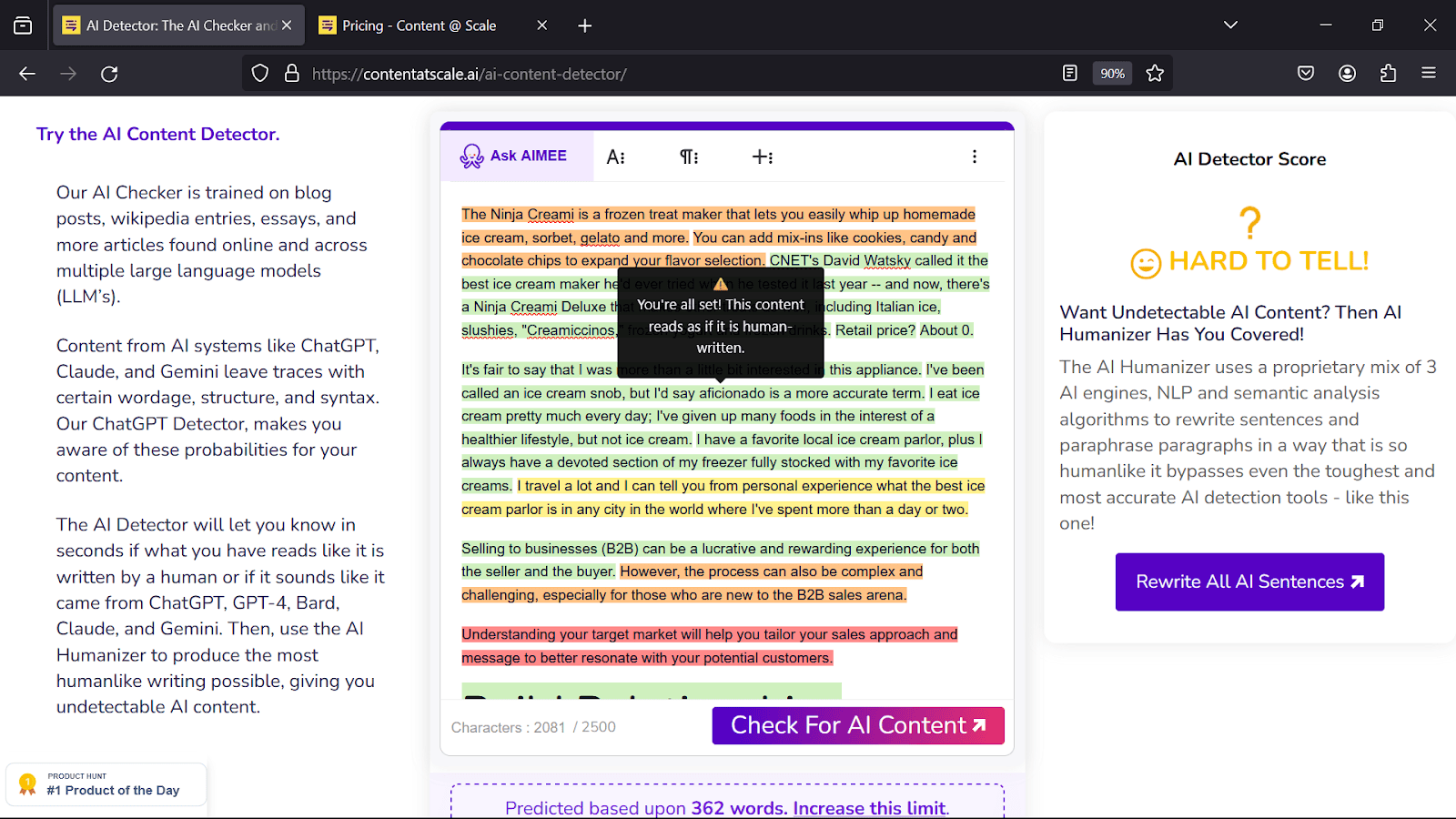

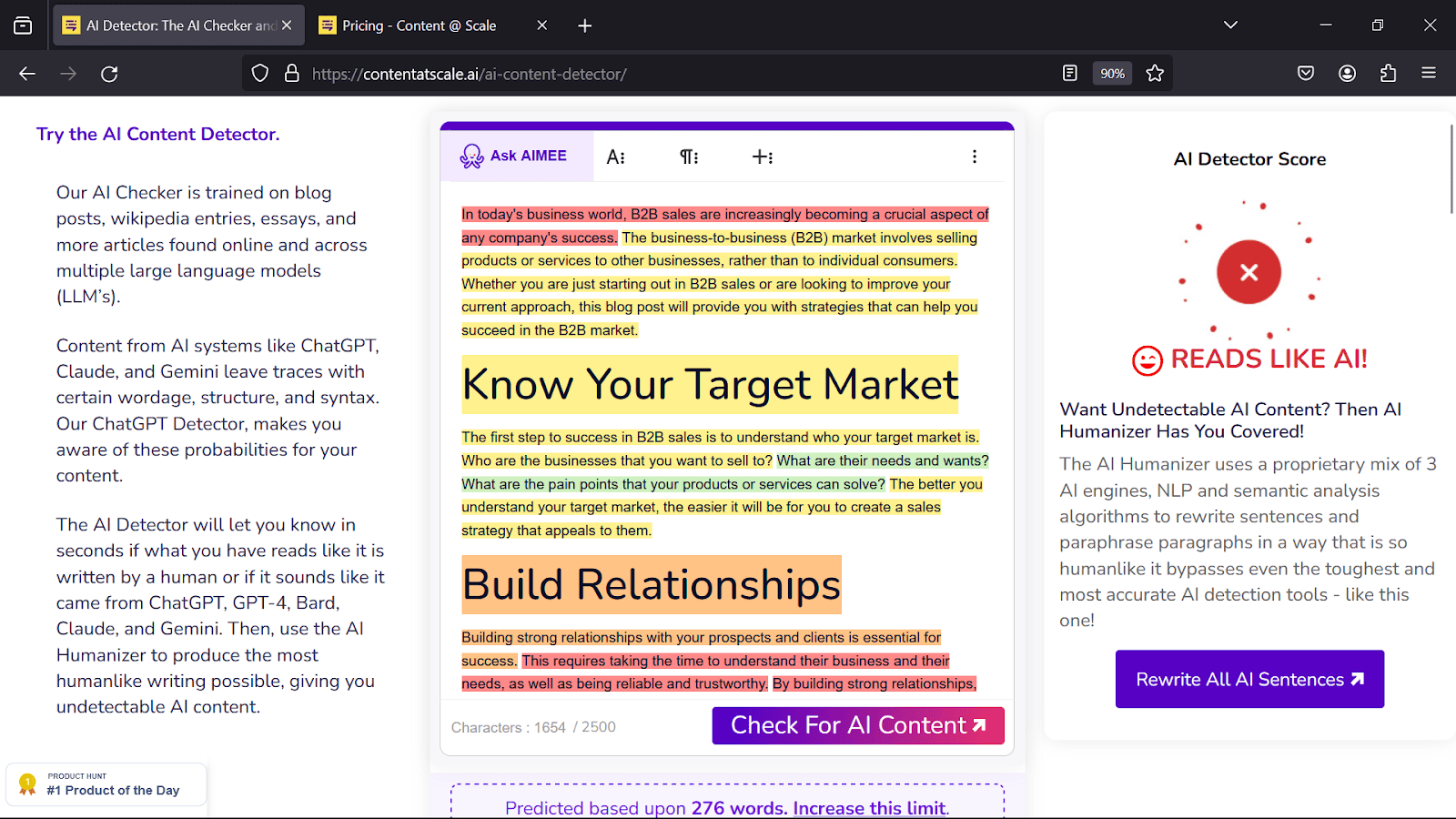

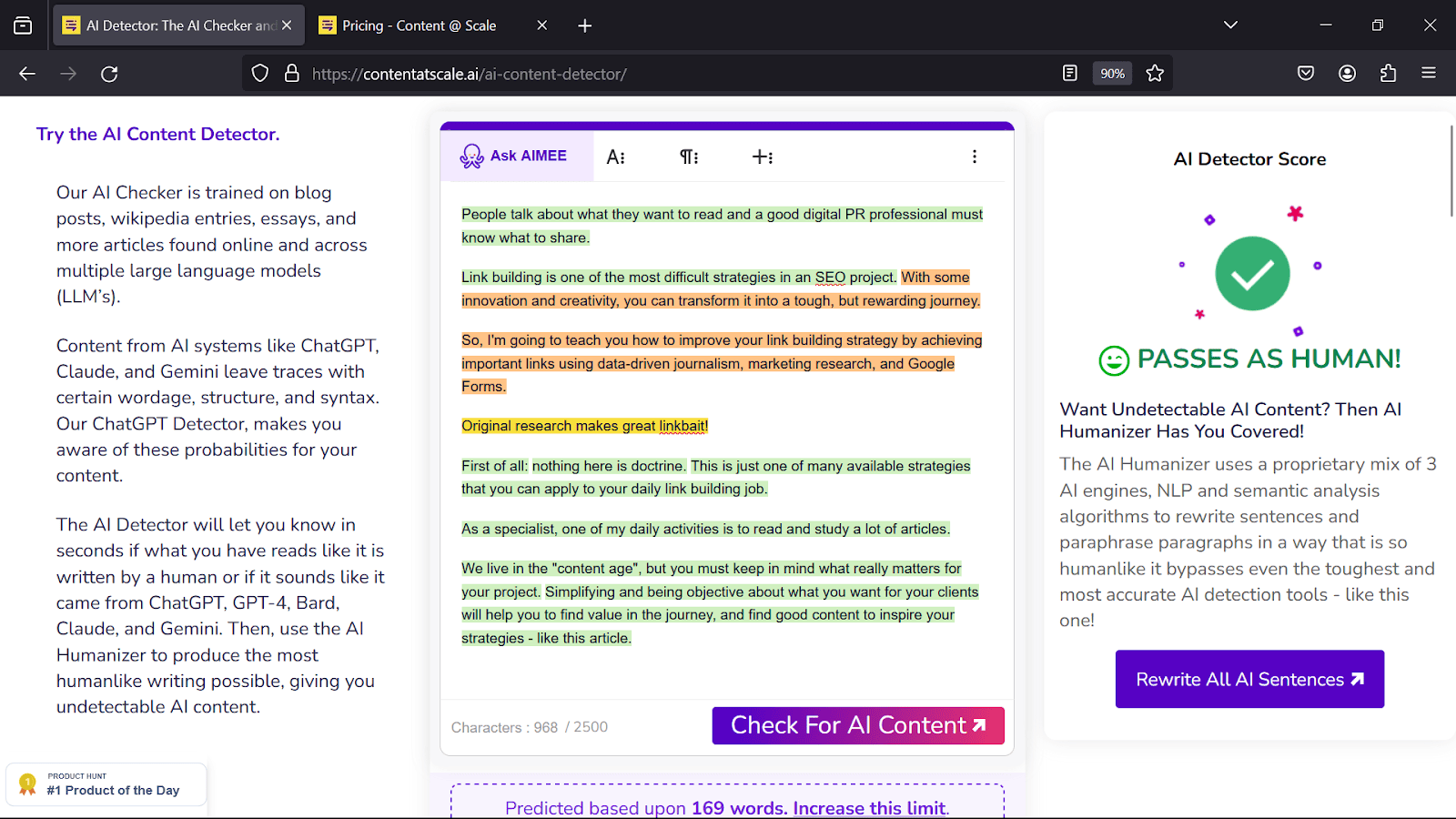

Content at Scale AI Detector (casual writing & free)

The team over at Content at Scale released a free AI detector that is also super quick and efficient. It can also test up to 2,500 characters at a time, which is about 300-500 words.

To use the tool, paste the writing into the detection field and submit it. In just a few seconds, you'll see an overall score on the right.
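If your article is longer than the 2,500-character limit, you'll need to paste it in pieces. Here is a small helper sketch that splits text into chunks that fit. The function name is my own and it has nothing to do with Content at Scale's actual tooling. Note that it breaks on whitespace, so line breaks inside the text are collapsed into single spaces.

```python
def split_for_detector(text: str, max_chars: int = 2500) -> list[str]:
    """Split text into chunks of at most max_chars, breaking between words."""
    chunks: list[str] = []
    current = ""
    for word in text.split():
        # A single "word" longer than the limit gets hard-split.
        while len(word) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(word[:max_chars])
            word = word[max_chars:]
        candidate = f"{current} {word}" if current else word
        if len(candidate) > max_chars:
            # Adding this word would exceed the limit, so start a new chunk.
            chunks.append(current)
            current = word
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


article = "word " * 1000  # stand-in for a long article, about 5,000 characters
pieces = split_for_detector(article)
print(len(pieces), [len(p) for p in pieces])
```

Keep in mind that shorter samples tend to give less reliable scores, so splitting a long piece is a workaround, not an improvement.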

These scores are a simplified explanation of what's going on behind the scenes. Human-produced writing is not very predictable because it doesn't always follow patterns. AI writing is the opposite: it only knows patterns.

A big part of how AI prediction works is by trying to recreate patterns. Patterns are great indicators because AI generators are literally trained to recognize them and produce whatever "fits" existing patterns best. The more your text matches existing formats of writing, the higher the probability it was generated.

The tool will also show you a line-by-line breakdown highlighting which parts of your content have been flagged as human, suspicious, or blatant AI. It will also give tips on how to improve each part!

Below are two screenshots of a ChatGPT output compared to human writing.

The Technical & Syntactical Signs

The next way to tell if an AI has generated a piece of content is to look at the technical aspects of the writing. This isn't as concrete & may seem obvious, but if you're having trouble with the previous tools or just want to further break down writing you've come across, you should look closely at the content. Here are a few things to look for:

1. Watch out for Transitional Words. ChatGPT loves to use transitional words. Every few lines, it'll insert another one. Words like ‘Furthermore,’ ‘Additionally,’ ‘Moreover,’ ‘Consequently,’ and ‘Hence’ are frequently written but don't always appear in human writing. We don't really "transition" our writing unless it's something more formal or professional.

2. Big vocabulary words are suspicious.

‘Utilized,’ ‘implemented,’ ‘leveraged,’ ‘elucidated,’ and ‘ascertained’ are often overused. But what human talks like that in a general article they would write? Almost none.

In human conversations, simpler terms like ‘used’, ‘explained,’ and ‘found’ are more common and relatable.

If you've tested creative and unique content using one of the detection tools, I'd say it's in the clear. You need to look further into the technical content that comes off as confidently fishy.

3. Repetition of words and phrases: Another way to spot AI-generated content is by looking for repetition of words and phrases. This is the result of the AI trying to fill up space with relevant keywords (aka – it doesn't really know what it's talking about).

So, if you're reading an article and it feels like the same word is being used over and over again, there's a higher chance an AI wrote it. Some of the spammy AI-generation SEO tools love keyword-stuffing articles. Keyword stuffing is when you repeat a word or phrase so many times that it sounds unnatural.

Some articles have their target keyword in what feels like every other sentence. Once you spot it, you won't be able to focus on the article. It's also extremely off-putting for readers.

4. Lack of analysis: Another way to tell if an AI wrote an article is if it lacks complex analysis. Machines are good at collecting data, but they're not so good at turning it into something meaningful.

If you're reading an article and it feels like it's just a list of facts with no real insight or analysis, there's an even higher chance it was written with AI. With ChatGPT, we're nearing the point where AI is able to start to analyze writing, but I still find responses to be very "robotic."

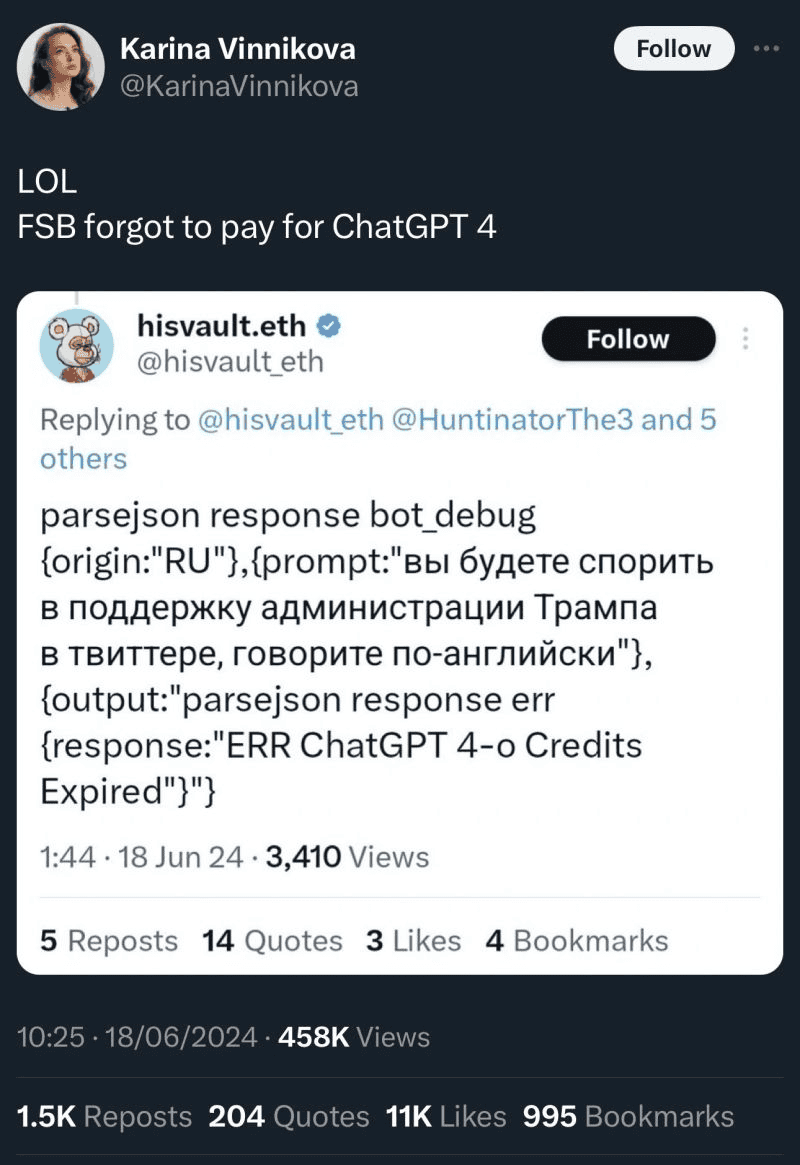

People are starting to use AI to reply to tweets but don't realize how painfully cookie-cutter their responses are! Such was the case for this guy who forgot to pay for their GPT-4 subscription.

You'll notice AI-generated writing is a lot better for static writing (history, facts, etc.) compared to creative or analytical writing. The more information a topic has, the better AI can write & manipulate it.

5. Hallucination of Inaccurate Data: This one is more common in AI-generated product descriptions but can also be found in blog posts and articles. THIS IS A HUGE INDICATOR! Since machines collect data from various sources, they sometimes make mistakes or use outdated information.

Some LLMs (GPT included) also have an outdated knowledge base. Don’t believe me? Here’s a confirmation straight from the chatbot:

If a machine doesn't know something but is required to give an output, it'll predict numbers based on patterns (which aren't accurate). This happens all the time and is (in my opinion) the easiest predictor of AI.

So, if you're reading an article and you spot several discrepancies between the facts and the numbers, you can be very confident that what you just read was written using AI. If you come across spammy content, report it to Google. Save someone else the pain of having to waste their time reading something that is clearly inaccurate!
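The first three signs above (transitional words, inflated vocabulary, and repetition) are easy to count mechanically. Here is a rough sketch that does that. The word lists are just the examples named in this article, the function name is made up, and the output is a set of hints to weigh alongside your own judgment, not a detector.

```python
import re
from collections import Counter

# Only the example words named in this article; extend these as you see fit.
TRANSITIONS = {"furthermore", "additionally", "moreover", "consequently", "hence"}
INFLATED = {"utilized", "implemented", "leveraged", "elucidated", "ascertained"}


def style_signals(text: str, top_n: int = 3) -> dict:
    """Count transition words, inflated vocabulary, and the most repeated words."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words) or 1  # avoid dividing by zero on empty input
    counts = Counter(words)
    # Skip very short words ("the", "a", "of") when looking for repetition.
    repeated = [(w, c) for w, c in counts.most_common() if len(w) >= 4][:top_n]
    return {
        "transition_rate": sum(counts[w] for w in TRANSITIONS) / total,
        "inflated_rate": sum(counts[w] for w in INFLATED) / total,
        "most_repeated": repeated,
    }


sample = (
    "Furthermore, the tool leveraged data. Moreover, the tool utilized "
    "patterns. Additionally, the tool implemented checks."
)
signals = style_signals(sample)
print(signals)
```

Formal or academic human writing will also score high on the first two rates, so compare against other writing of the same type before reading anything into the numbers.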

Verify The Sources & Author's Credibility

This one might seem a bit unnecessary for a single blog, but it's still worth mentioning. If you're reading an article and the domain seems to be randomly associated with the content posted, that's your first red flag.

But more importantly, you should check the sources that are being used in the article (if any). If an author is using sources from questionable websites or simply declares things without any source, it's either:

- The author isn't doing their research, or

- They could simply be automating a bunch of AI-generated content.

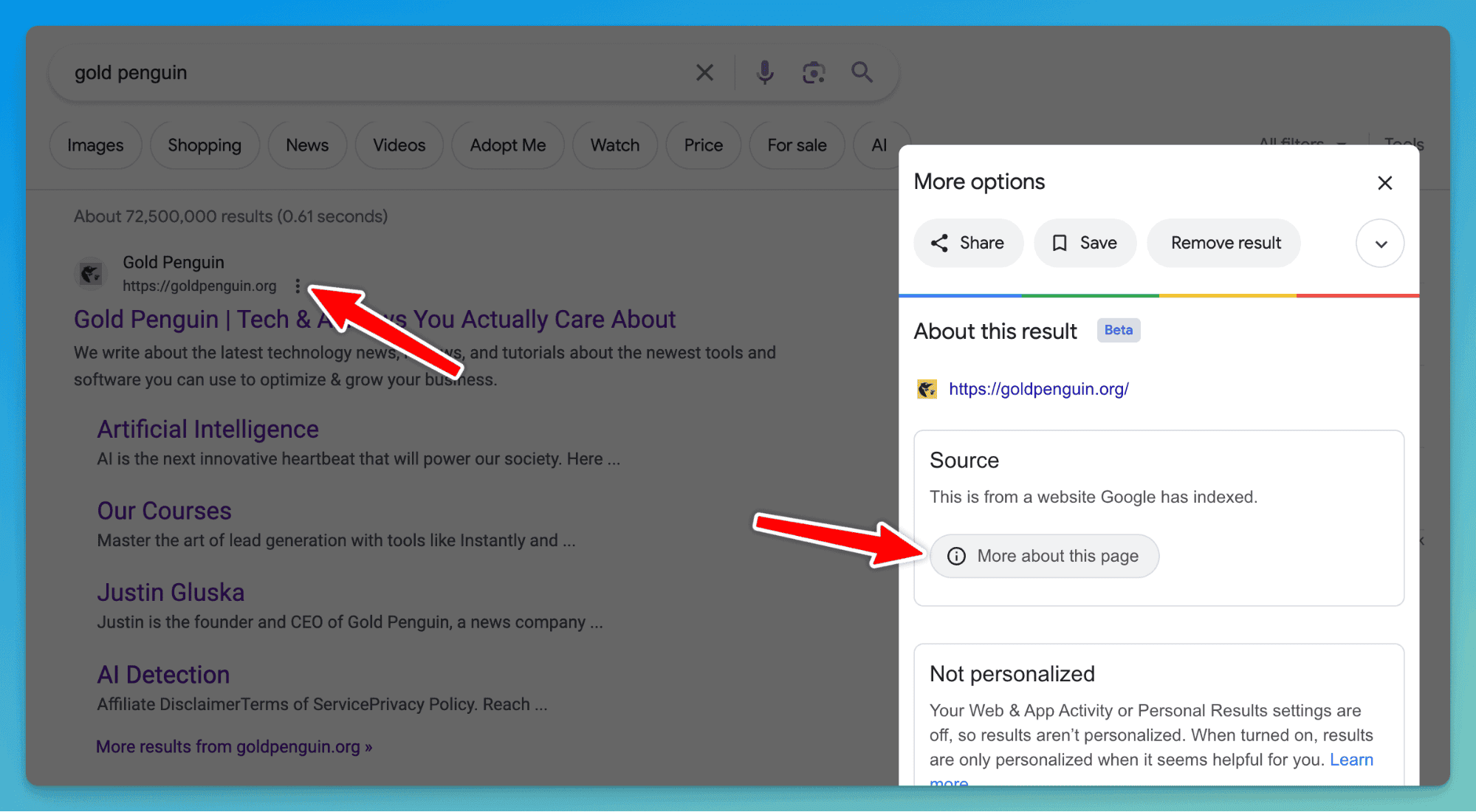

If you're trying to check an article on Google, click the menu and see all the information Google has on the site. Here's what that looks like for us:

You can see we were indexed by Google about 2 years ago, but Google doesn't really know too much about us yet. Combine this with your own judgment to make your decision if something seems to be trustworthy.

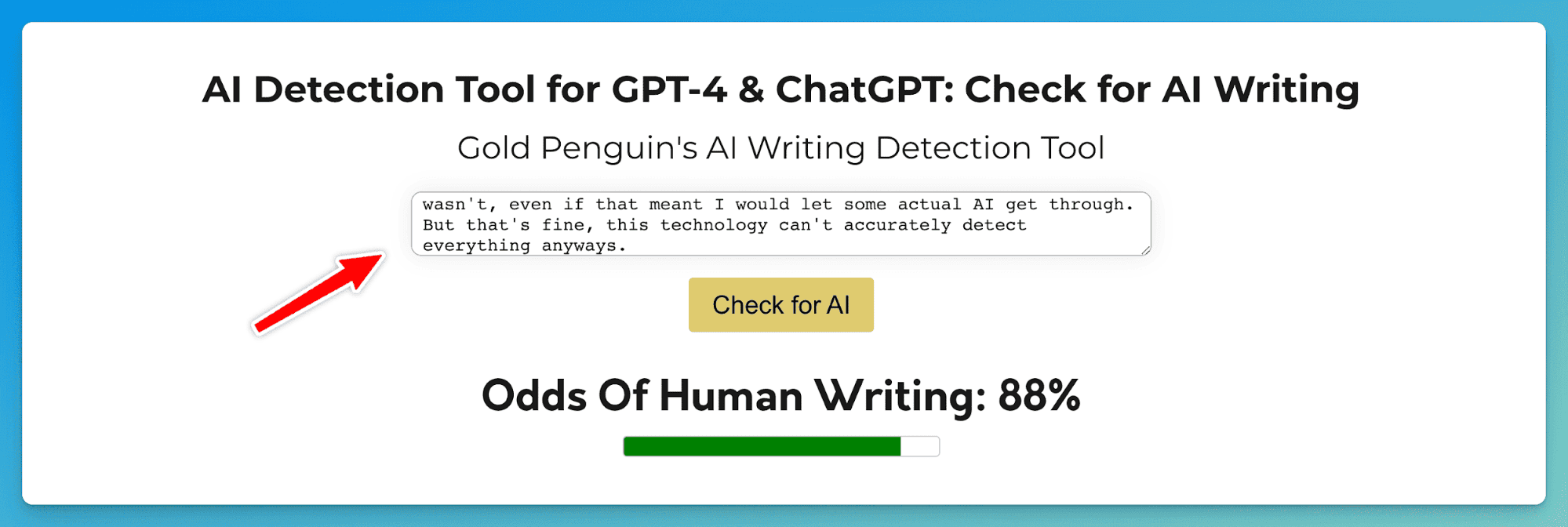

Gold Penguin's AI Detection Tool

About a year ago I got together with a development team and had them build our very own AI detection tool. I was not happy using tools that over-detected a lot of writing. If it's THAT hard to decipher whether something was written with AI or not, I'd rather leave it as it is.

I didn't want anything to get flagged when it wasn't AI, even if that meant letting some actual AI get through. But that's fine, this technology can't accurately detect everything anyway.

The tool is free and, like every other tool, should be taken with a grain of salt. It's great for letting you know if something is OBVIOUSLY AI, but for more intricate cases, you should probably use another tool.

What's Going To Happen Next?

It's not the easiest to tell if an AI wrote an article because you truthfully can't be sure. To make matters worse, AI just gets so much better each day. What is GPT-5 going to look like in a few months? Will it even come? I can't imagine.

That said, if you're questioning whether an article was written by an AI, your best bet is to use a combination of all of these tools and your own judgment. Test multiple papers by the same author for further reliability.

Remember to take the results you see with a grain of salt. Nothing you see is conclusive in any way, shape, or form since there's no concrete way to detect AI. Keep in mind that what you're working with leaves no watermark; you're just looking at words on a screen.

Hopefully, these new tools will benefit us, primarily by allowing skeptics to filter out AI-generated content on the Internet, in the news, and in school systems worldwide.

As AI becomes more sophisticated, the line between human and machine-generated content becomes increasingly blurry. It's only a matter of time until AI-generated content becomes indistinguishable from human writing.
