How To Identify and Check for Writing Made with ChatGPT

ChatGPT has blown away pretty much anyone that's tested it out. But is there any way to determine if text was originally created with it? Here's how to tell and also a few AI detection tools you can use.

Justin Gluska

Updated February 23, 2026

Reading Time: 9 minutes

With the recent surge in AI-made content, people across the world are having existential crises trying to figure out what’s real and what’s fake. I mean... have you seen deepfakes recently?

In the writing realm, ChatGPT has exploded since its release, hitting a million users within its first week and currently boasting over 180 million. It is a conversational large language model created by OpenAI, the team behind DALL-E and Sora.

Once people wrapped their heads around what it actually is, plenty of them started trying to figure out how to reverse-engineer artificially produced writing. And it's fairly simple too.

Here are a few tools and tips you can follow to help figure out whether you're looking at a human’s writing or ChatGPT’s.

Look Out For Uncanny, Polished Language

AI-generated articles tend to have a level of polish and consistency that reads as near-perfect. They are designed to adhere to grammatical rules and maintain a structured flow. However, this perfection often comes at the cost of creativity and the human touch. Humans make mistakes, while AI writing is engineered to be objectively "perfect."

Simply put, AI tools don’t “think” or “feel” in the way humans do. Instead, they process and reproduce patterns from vast datasets.

Take this generated writing for example:

It’s alright, but it sounds too vague and generic. The language itself feels off and the almost human-like tone is treading into this uncanny valley territory.

From my experience, there are a few indicators that tell me intuitively a piece of writing is likely AI-generated:

- Complex vocabulary and jargon are used.

- The tone is often too formal.

- The content lacks depth and relies solely on surface-level information.

- The sentence structure and length are predictably consistent, and sentences tend to run long.

Like so:

However, this is often not a definitive way to detect ChatGPT.

If someone is excellent at prompt engineering, they can easily craft an output that sounds less “robotic”. And the opposite is also true: some humans actually write “perfectly,” especially non-native English speakers, because many are taught professional English from an early age. We can’t exactly punish people for being good writers.
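To make the "predictably consistent sentence length" indicator a bit more concrete, here's a rough sketch that measures how much sentence lengths vary in a piece of text. The function name `sentence_length_stats` and the sample strings are my own illustration, not something any detector is known to use, and a low spread is only a weak hint, never proof.

```python
import re
import statistics

def sentence_length_stats(text):
    """Return (mean, population std dev) of sentence lengths, counted in words."""
    # Naive split on ., ! and ? -- good enough for a sketch, not for real prose
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if not lengths:
        return 0.0, 0.0
    return statistics.mean(lengths), statistics.pstdev(lengths)

# Hypothetical samples: one very uniform, one with mixed sentence lengths
uniform = "The tool checks the text. The model scores the words. The result shows the score."
varied = "No. I ran the whole essay through three different detectors last night. Mixed results."

uniform_mean, uniform_spread = sentence_length_stats(uniform)
varied_mean, varied_spread = sentence_length_stats(varied)

print(f"uniform: mean={uniform_mean:.1f}, spread={uniform_spread:.2f}")
print(f"varied:  mean={varied_mean:.1f}, spread={varied_spread:.2f}")
```

A spread near zero just means every sentence is about the same length. Plenty of careful human writers produce that too, which is exactly why this shouldn't be used on its own.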

Use AI Writing Checker Tools

AI detection tools analyze how predictable each word is, given the words that came before it. The more consistently a text picks the most likely next word, the more likely it is to be flagged as AI-written. We wrote a more in-depth explanation if you want to take a deep dive.
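Here's a toy sketch of that idea. It scores text by perplexity, a standard measure of how "surprised" a language model is by each next word. Real detectors rely on large neural language models; the tiny bigram model, the add-one smoothing, and the sample sentences below are all assumptions I made to keep the example self-contained.

```python
import math
from collections import Counter

def train_bigram_model(corpus_tokens):
    """Count single words and adjacent word pairs in a reference token list."""
    unigrams = Counter(corpus_tokens)
    bigrams = Counter(zip(corpus_tokens, corpus_tokens[1:]))
    return unigrams, bigrams

def perplexity(tokens, unigrams, bigrams):
    """Perplexity of `tokens` under an add-one-smoothed bigram model.

    Lower values mean each word was easier to predict from the word before it.
    """
    vocab_size = len(unigrams) + 1  # one extra slot so unseen words get some probability
    log_prob = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        p = (bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size)
        log_prob += math.log(p)
    n = max(len(tokens) - 1, 1)
    return math.exp(-log_prob / n)

# Hypothetical "training data" standing in for the text a real model has seen
reference = "the cat sat on the mat and the dog sat on the rug".split()
unigrams, bigrams = train_bigram_model(reference)

predictable = "the cat sat on the rug".split()
surprising = "rug the on dog mat cat".split()

print("predictable:", round(perplexity(predictable, unigrams, bigrams), 2))
print("surprising: ", round(perplexity(surprising, unigrams, bigrams), 2))
```

The predictable sentence gets the lower perplexity because every word pair in it already appears in the reference text. A detector built on this kind of signal would treat very low perplexity as a hint of machine-like predictability.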

Here are my top picks after analyzing detectors for months on end:

Tool 1: Winston AI

If you’re looking for something that’s stricter, Winston AI is worth the price.

A few months ago, we tested every popular AI detection tool in 2024. Winston AI blew me away with how accurate it was on true positive tests, ranking first with a strong 91.92% true positive accuracy. Mind you, this test included text tweaked with Undetectable AI, the most consistent AI humanizer we’ve tried so far.

So, what’s the trade-off?

It’s a little too strict sometimes.

In the same test I linked above, we found Winston to be the least effective at avoiding false positives, with a score of 59.2%.
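If the difference between true positives and false positives is fuzzy, this small sketch shows how the two rates are usually computed from a labeled test set. The sample data is made up purely for illustration and is not taken from our Winston test; `detection_rates` is a hypothetical helper.

```python
def detection_rates(results):
    """Compute (true positive rate, false positive rate).

    results: list of (is_actually_ai, was_flagged_as_ai) boolean pairs.
    Assumes the list contains at least one AI sample and one human sample.
    """
    ai_flags = [flagged for is_ai, flagged in results if is_ai]
    human_flags = [flagged for is_ai, flagged in results if not is_ai]
    true_positive_rate = sum(ai_flags) / len(ai_flags)        # AI text correctly flagged
    false_positive_rate = sum(human_flags) / len(human_flags)  # human text wrongly flagged
    return true_positive_rate, false_positive_rate

# Made-up test set: 10 AI-written samples and 10 human-written samples
sample = (
    [(True, True)] * 9      # AI text that was flagged
    + [(True, False)] * 1   # AI text that slipped through
    + [(False, True)] * 4   # human text that was wrongly flagged
    + [(False, False)] * 6  # human text that was correctly left alone
)

tpr, fpr = detection_rates(sample)
print(f"true positive rate:  {tpr:.0%}")
print(f"false positive rate: {fpr:.0%}")
```

A strict detector pushes the first number up, but it usually drags the second one up with it. That's the trade-off to keep in mind when comparing tools.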

Tool 2: Pangram

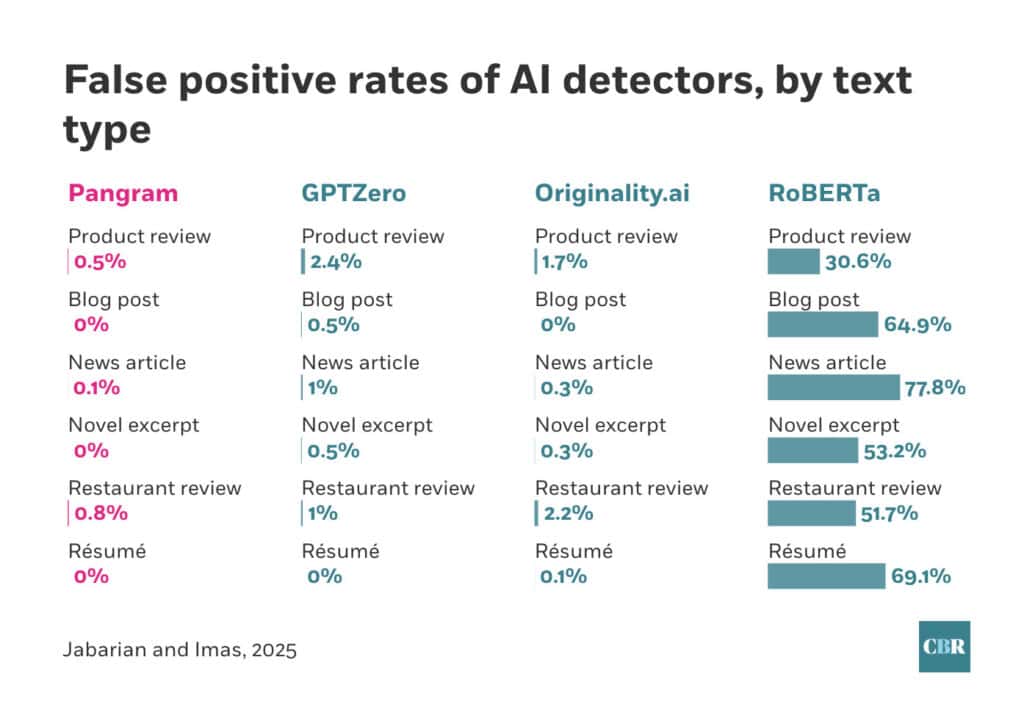

Pangram is another AI detection tool that keeps showing up in higher-education conversations. And unlike many detectors that rely on broad claims, Pangram tends to be discussed alongside third-party evaluations.

According to coverage and comparisons referenced by institutions like the University of Chicago and the University of Maryland, Pangram is often described as one of the more reliable options in the category. Pangram also claims it can detect both short and long-form AI content, with accuracy as high as 99%.

One detail worth highlighting is Pangram’s positioning around AI-assisted writing — not just fully AI-generated text. That distinction matters more in 2026, when most student work isn’t “pure AI,” but blended.

For teachers, accessibility also helps. Pangram allows free use up to four times per day, with paid plans starting around $20/month, and API credits starting at $5 for 100 credits.

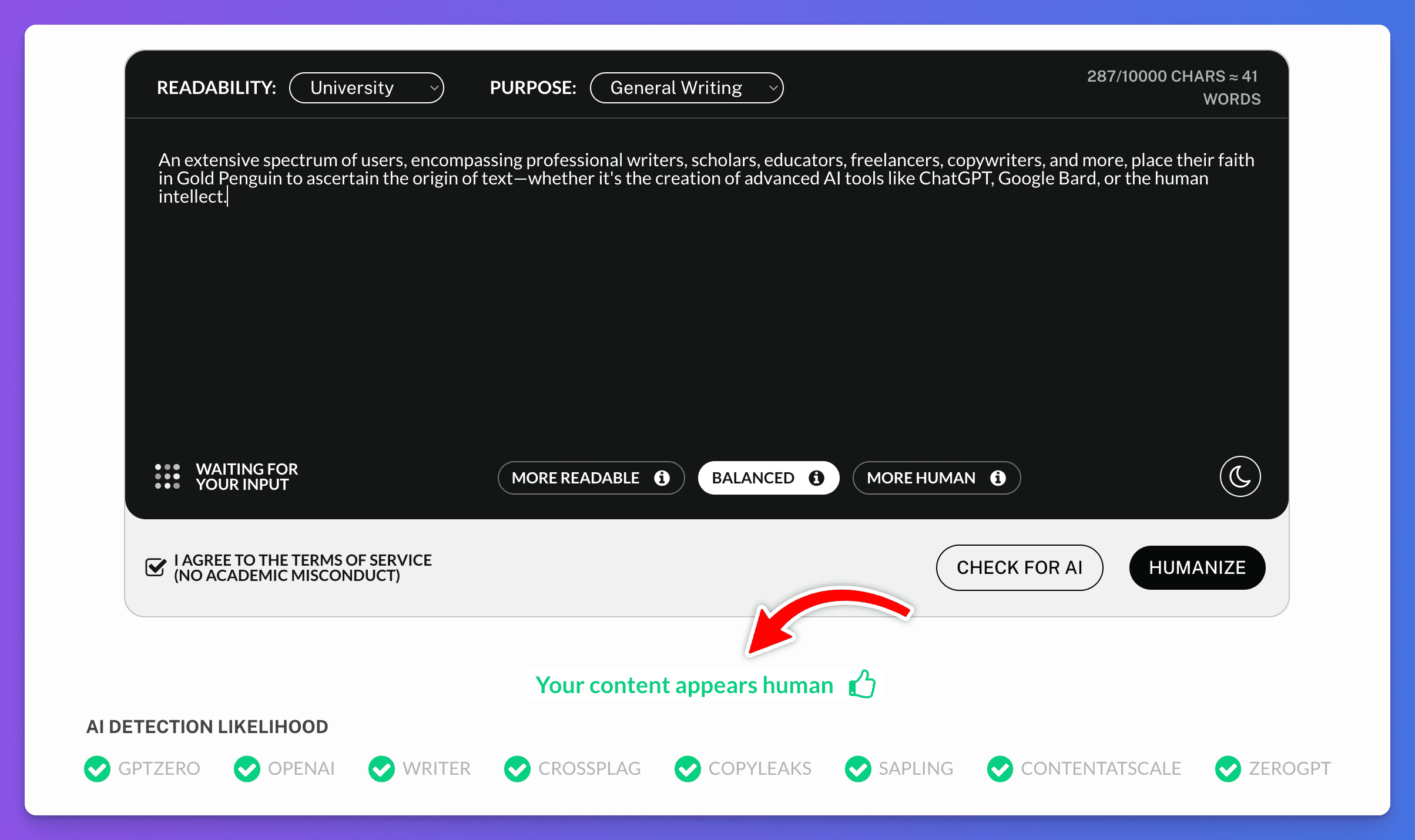

Tool 3: Undetectable.AI

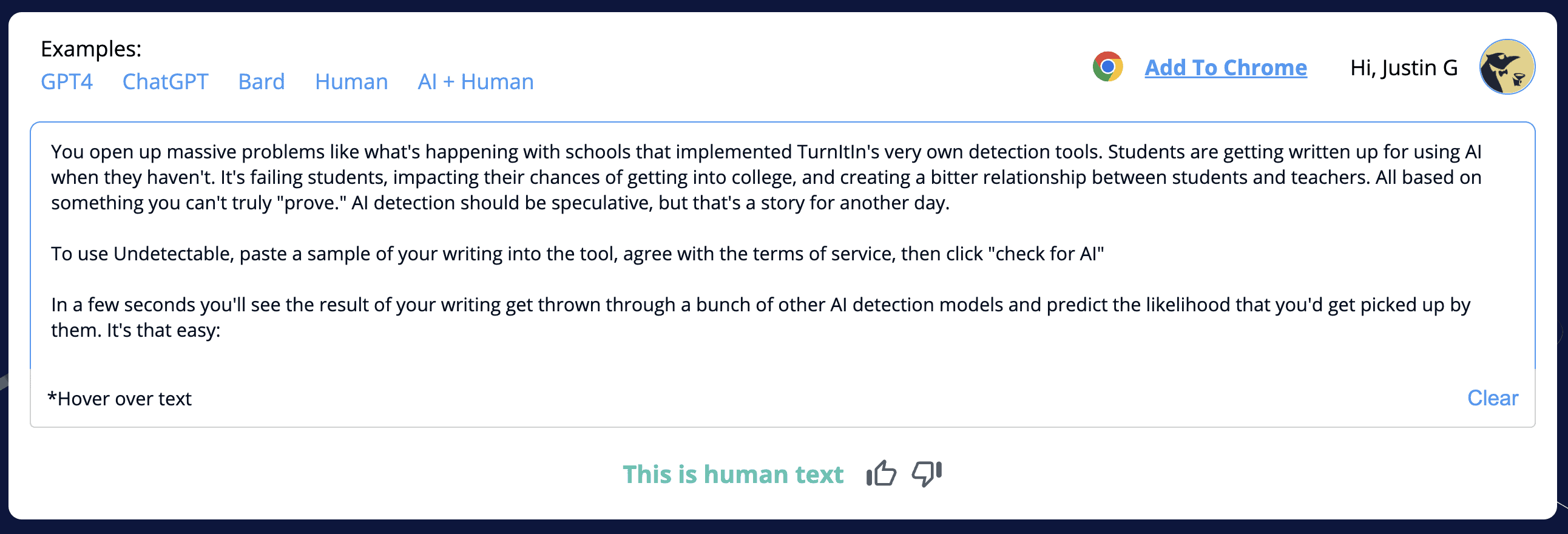

I really like Undetectable because they made a tool that analyzes every other detector you'll run into online. They created their own detector by combining existing models. It's a great way to get a better overview of the most popular tools in one fell swoop.

They estimate how some of the most popular AI detection tools, like Content At Scale and GPTZero (which we'll get into later), would classify your writing if you ran it through each of them individually.

I've noticed both Undetectable and Content at Scale are fairly conservative with their predictions & try not to falsely flag anyone. So, always take these results with a grain of salt.

I also like the fact that Undetectable is hesitant to label writing as AI. I don't think you should classify writing as AI unless you're fairly confident about it.

Otherwise, you open up massive problems, like what's happening at schools that implemented Turnitin's own detection tools. Students are getting written up for using AI when they haven't. It's failing students, hurting their chances of getting into college, and creating a bitter relationship between students and teachers. All based on something you can't truly "prove." AI detection should be speculative, but that's a story for another day.

To use Undetectable, paste a sample of your writing into the tool, agree to the terms of service, then click "check for AI."

In a few seconds, you'll see how your writing fares against a bunch of other AI detection models, along with the predicted likelihood that each one would flag it. It's that easy:

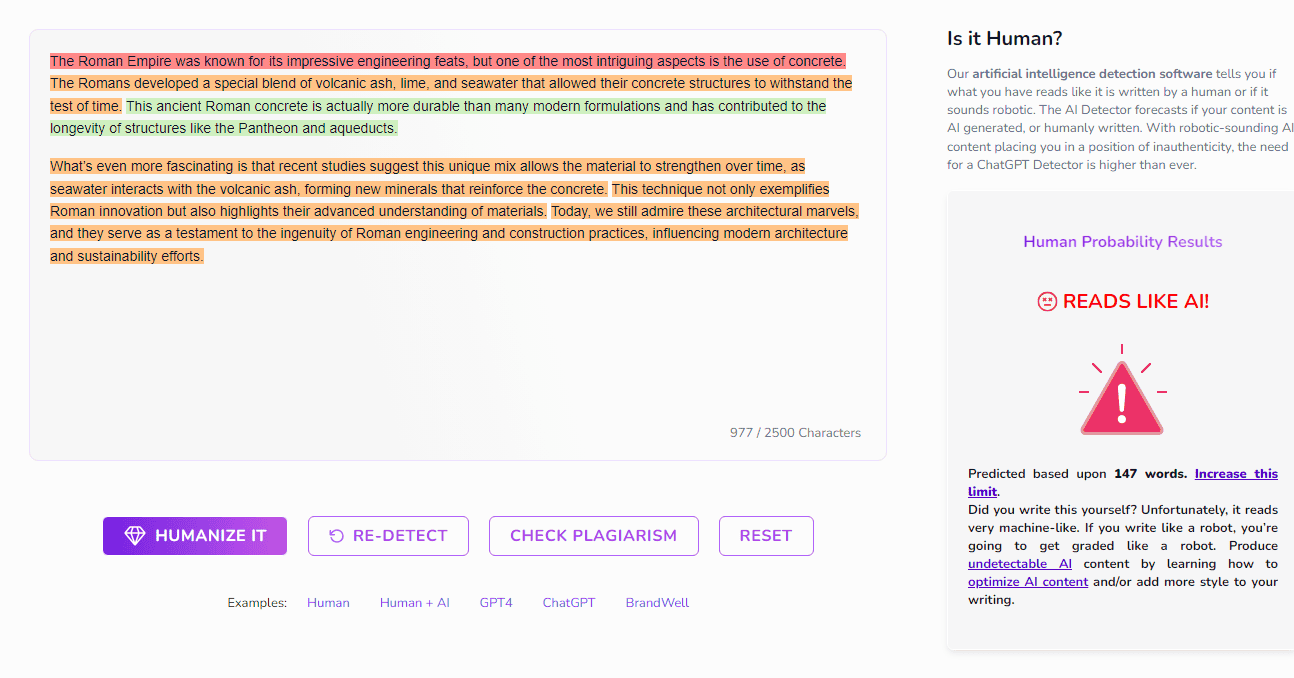

Tool 4: CopyLeaks

CopyLeaks is probably the most straightforward AI detection tool, and it's fairly strict. It detects AI-generated writing regardless of which model somebody is using. It's quick, free to a degree, and I'd consider it to be reliable.

They're also pretty good at figuring out text that's in the middle (writing that is a mix of ChatGPT & human writing).

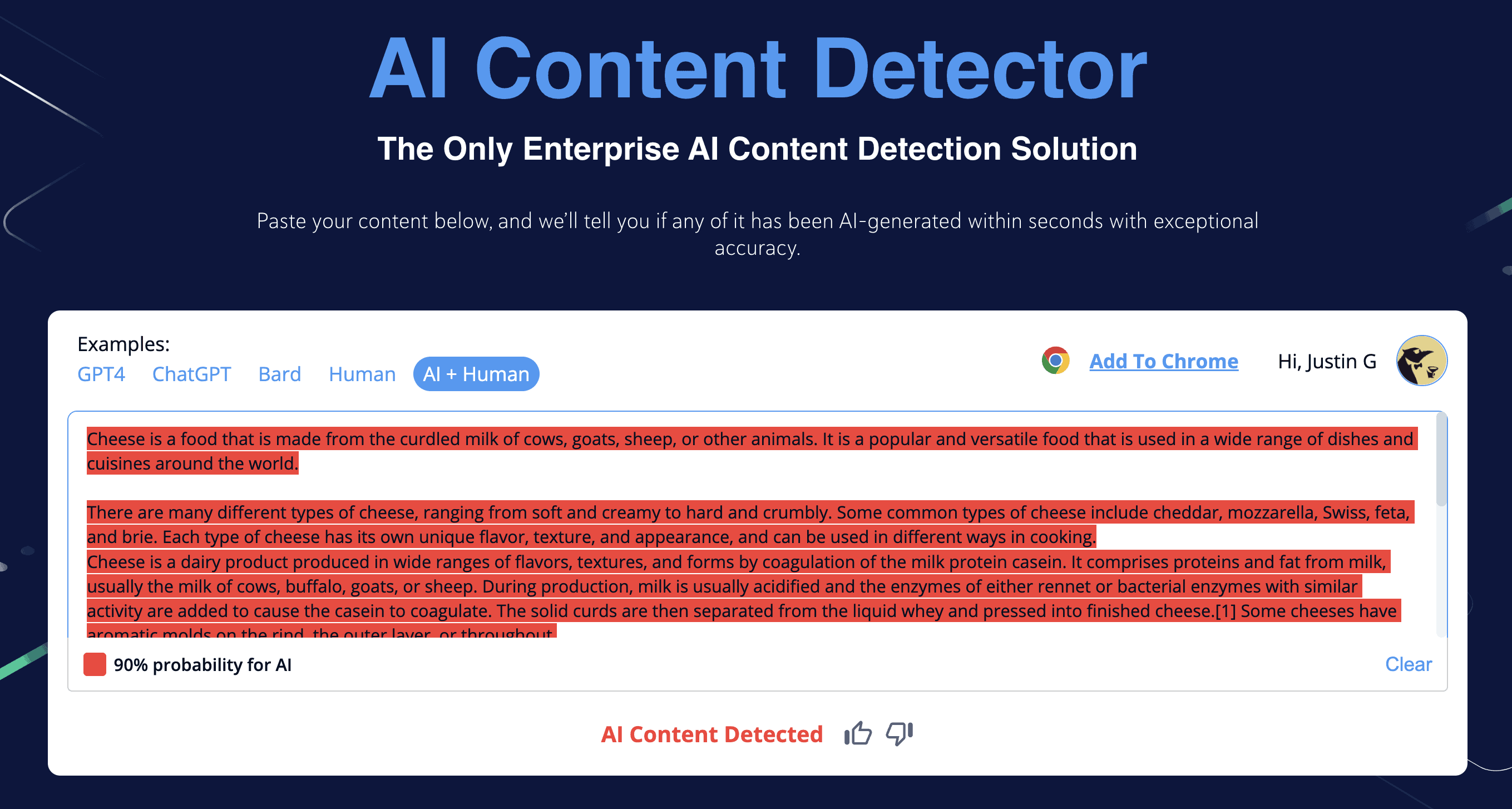

Paste your writing, press check, give it a few seconds, and you'll have a result. Here’s what it looks like:

Tool 5: Originality AI

While CopyLeaks is meant for testing quick content (and it does it pretty well), Originality AI does have the tendency to over-predict at times, which I don't like.

Originality AI is better for industry-level & bulk testing, even in 2024. It works the same as all the other tools: Copy & paste suspected ChatGPT content and let it do the rest of the work for you. However, you’ll need to subscribe to their paid plans to use it. If you want to read an in-depth review of how Originality AI works, we broke it down in this review.

For the free version, they have a Chrome browser extension you can try out. It will display a percentage showing whether a text is more likely to have been written by an AI or a human. It also has several other features, such as a plagiarism score, a readability score, and more.

Tool 6: BrandWell (Formerly known as Content At Scale)

The next tool you could use to check for ChatGPT is BrandWell's AI Detector.

I've been using this tool from the very day it was released and love it because it tends to not "over-predict" writing. It's also completely free.

I'd rather let some AI get through if it means not flagging someone by accident. If you want a stricter tool, Originality is probably the better choice.

Here's an example of a snippet I generated using ChatGPT & pasted directly into their tool:

Paste it in the tool and in seconds, you'll get a line-by-line breakdown on top of a percentage on whether it was written by ChatGPT or a human.

Once submitted, you'll see this content was very likely written by AI. The content was easily predictable and followed a lot of familiar patterns. BrandWell will also highlight each line it believes to be written by ChatGPT or another AI (the red text).

If you need something more strict though, maybe something a little harder on both ChatGPT writing & plagiarism, look into Originality.

Tool 7: Gold Penguin's AI Checker

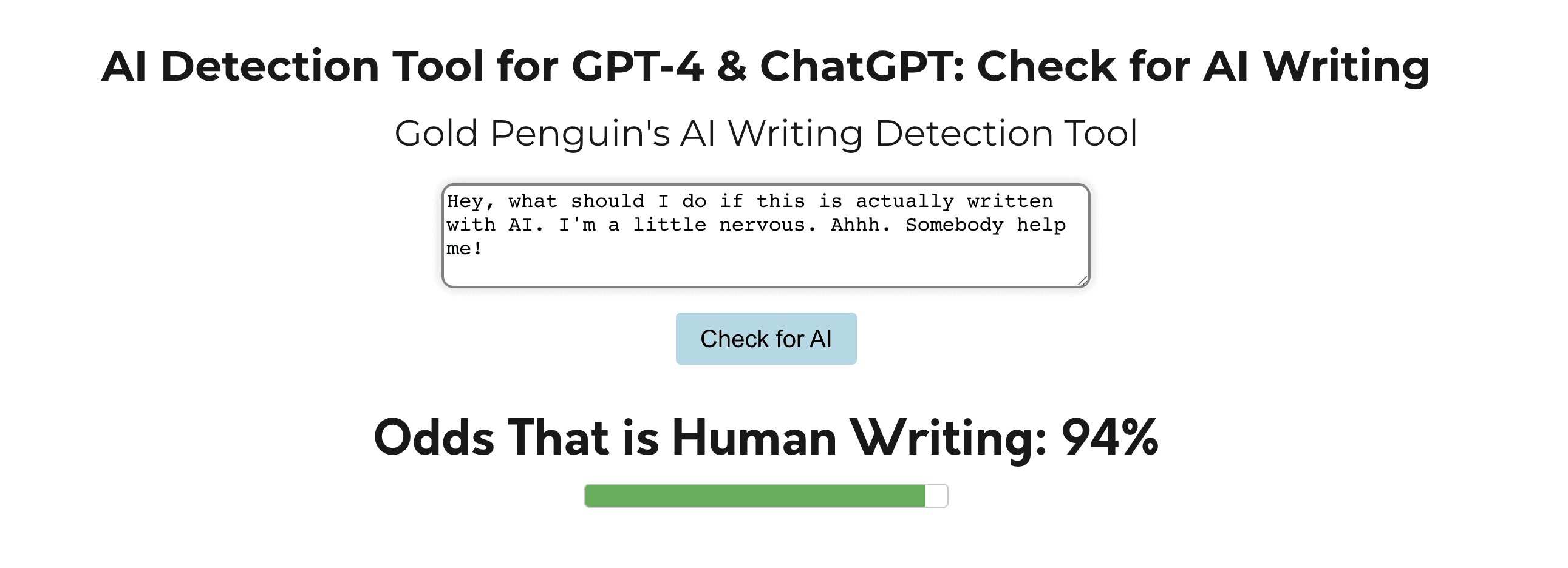

Yes... we actually made one ourselves! After testing these tools throughout the last few months, it was only a matter of time. I decided to list our AI writing detection tool last because it generally under-predicts AI writing.

We created it to minimize the risk of falsely accusing someone of using ChatGPT to write something. Some of the other detectors have been caught flagging the Constitution of the United States as being AI-written! That's insane!

Anyways, our tool is free & extremely simple to use. Just paste the text into the input box and check for AI:

Please keep in mind there is no mathematically definite way to confirm AI-generated writing and that all of these tools work on predictions, but they are decent indicators. A good teacher or employer should know that these tools are meant to supplement their own judgment, and aren’t the be-all and end-all of detection.
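One cautious way to treat several detector results as supporting evidence, rather than a verdict, can be sketched like this. The detector names, the scores, the 0.8 threshold, and the "at least two must agree" rule are all hypothetical choices of mine, not settings from any real tool.

```python
def needs_human_review(scores, threshold=0.8, min_agreeing=2):
    """Decide whether a text deserves a closer manual look.

    scores: dict mapping a detector name to its estimated probability (0 to 1)
            that the text is AI-written.
    Returns (should_review, names_of_detectors_over_threshold).
    """
    agreeing = [name for name, score in scores.items() if score >= threshold]
    return len(agreeing) >= min_agreeing, agreeing

# Hypothetical scores from three unnamed detectors for two different texts
essay_a = {"detector_a": 0.95, "detector_b": 0.88, "detector_c": 0.40}
essay_b = {"detector_a": 0.91, "detector_b": 0.35, "detector_c": 0.20}

print(needs_human_review(essay_a))
print(needs_human_review(essay_b))
```

Note that the output is "take a closer look," not "this is AI." A single high score, like the one for `essay_b`, isn't enough to trigger even that.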

What's Next?

It's hard to tell. I don't really know. Of course, there are still many things ChatGPT cannot accomplish, but it seems like we've upgraded society's technical skills overnight. Education and the professional world won't be ruined because of this, but it'll certainly cause a disruption.

Remember to take any result with a healthy amount of skepticism, as no tool can determine with 100% certainty whether ChatGPT or any other AI writing tool or chatbot wrote something.

But between the two of us? I’d trust Winston and Pangram above all.

Use your best judgment and always use more than one route when determining the likelihood of something being written by ChatGPT. Also, this article wasn't pro or anti-AI but rather an educational piece describing a few ways to detect it. The next few years will certainly be exciting!
